SCA/HPC Asia 2026: Giga Computing Unveiled XN24-VC0-LA61 Server Built on Nvidia GB200 NVL4 Platform

XN24-VC0-LA61 is an Nvidia Grace Blackwell server platform based on the Nvidia MGX modular architecture, featuring a 2U dual-processor design and Direct Liquid Cooling (DLC) technology

This is a Press Release edited by StorageNewsletter.com on February 2, 2026 at 2:00 pm

Giga Computing, a subsidiary of Gigabyte Technology Co., Ltd. and a provider of high-performance computing and data center solutions, has launched its next-gen AI and HPC server, the XN24-VC0-LA61, powered by the Nvidia GB200 NVL4 platform.

Purpose-built around a heterogeneous CPU and GPU architecture, the system made its debut at SCA/HPC Asia 2026 in Osaka, Japan, held January 27-29, showcasing Giga Computing’s latest innovation in accelerated computing with liquid cooling technologies.

The XN24 server has been selected for the Riken Center for Computational Science (R-CCS) next-gen HPC-Quantum hybrid platform. The new platform integrates Gigabyte servers into the development of FugakuNEXT, the eventual successor to Japan’s flagship supercomputer, Fugaku. This integration will use the Nvidia CUDA-Q platform to support the development of hybrid quantum-GPU supercomputing systems and research into advanced scientific applications that bridge quantum and traditional high-performance computing.

Beyond the XN24, Giga Computing is also demonstrating a comprehensive deployment portfolio at the event – ranging from enterprise-grade AI infrastructure to rack-scale data center solutions – underscoring its role as a pivotal hardware provider for global high-end scientific computing data centers.

Liquid-Cooled: Accelerated Computing

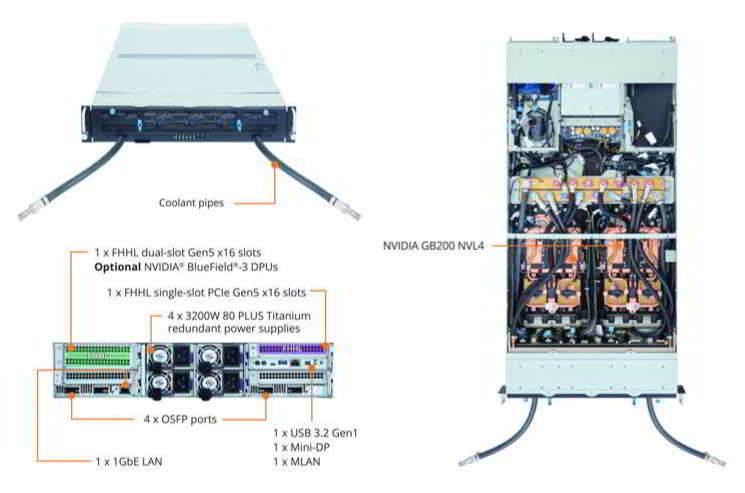

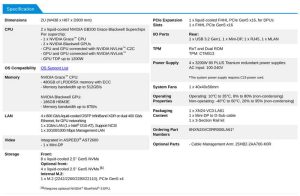

The company’s XN24-VC0-LA61 is an Nvidia Grace Blackwell server platform based on the Nvidia MGX modular architecture. Featuring a 2U dual-processor design, it incorporates Direct Liquid Cooling (DLC) technology. Designed for modular scalability, the XN24 lets organizations deploy Nvidia Blackwell-class computing power without immediately committing to a full rack-scale infrastructure. It provides a core foundation for building scalable, highly efficient AI infrastructure.


- Extreme Computing Density: Powered by Nvidia GB200 NVL4, the system integrates two Arm-based Nvidia Grace CPUs and four Nvidia Blackwell GPUs. Each Superchip is equipped with 480GB of LPDDR5X ECC CPU memory, while each GPU provides up to 186GB of HBM3E memory, significantly accelerating scientific simulations, Large Language Model (LLM) training, and high-throughput inference tasks

- High-Speed Network Integration: The system supports the Nvidia Quantum-X800 InfiniBand or Spectrum-X Ethernet platform with 800Gb/s InfiniBand or 400Gb/s Ethernet per port, utilizing Nvidia ConnectX-8 SuperNIC solutions to ensure low-latency, high-bandwidth communication across multi-node clusters

- Flexible Storage and Expansion: It offers up to 12 PCIe Gen5 NVMe drive bays and supports optional DPUs (data processing units), such as Nvidia BlueField, for hardware-accelerated offloading of compute and security tasks. The system is also equipped with 80 PLUS Titanium redundant power supplies to ensure efficient and stable data center operations

Comprehensive AI and HPC Portfolio at SCA/HPC Asia

The company has showcased a full spectrum of solutions across GPU-accelerated, Arm, and x86 architectures:

- GIGAPOD: Rack-Scale AI Solution: A customizable rack-scale solution integrating 32 Gigabyte GPU servers with optimized networking and storage. The featured G4L3 series is based on the Nvidia HGX platform, supports Intel Xeon processors, and is designed for AI factories and intensive LLM workloads

- XL44-SX2-AAS1 built on Nvidia MGX Platform: An air-cooled modular server ideal for enterprise AI training, inference, and visual computing. It supports dual Intel Xeon processors, up to 8 Nvidia RTX PRO 6000 Blackwell GPUs, 1 Nvidia BlueField-3 DPU, and 4 Nvidia ConnectX-8 SuperNICs

- AMD EPYC Compute Nodes TO25-ZU4 and TO25-ZU5: Compliant with OCP ORV3 (Open Rack v3) standards, these nodes use AMD EPYC 9005/9004 series processors. Designed for hyperscale data centers and CSPs, they offer scalability with E1.S SSD support and flexible PCIe Gen5 NVMe configurations

The company will host the ‘Giga Computing AI/HPC Partner Seminar’ on January 28, 2026, at the Osaka International Convention Center (Room 803). Together with ecosystem partners in processors, storage, and HPC, it will discuss deployment experiences and future industry trends.
