Advancing AI 2025: AMD Unveils Vision for Open AI Ecosystem

Detailing new silicon, software and systems, including Instinct MI350 Series accelerators, the AMD ROCm ecosystem and open rack-scale designs

This is a Press Release edited by StorageNewsletter.com on June 16, 2025 at 2:02 pm

AMD (Advanced Micro Devices, Inc.) presented its vision for an end-to-end integrated AI platform and introduced its open, scalable rack-scale AI infrastructure, built on industry standards, at its 2025 Advancing AI event.

AMD and its partners showcased:

- How they are building the open AI ecosystem with the new AMD Instinct MI350 Series accelerators

- The continued growth of the AMD ROCm ecosystem

- The company’s new, open rack-scale designs and roadmap that bring leadership rack-scale AI performance beyond 2027

“AMD is driving AI innovation at an unprecedented pace, highlighted by the launch of our AMD Instinct MI350 series accelerators, advances in our next generation AMD ‘Helios’ rack-scale solutions, and growing momentum for our ROCm open software stack,” said Dr. Lisa Su, chair and CEO, AMD. “We are entering the next phase of AI, driven by open standards, shared innovation and AMD’s expanding leadership across a broad ecosystem of hardware and software partners who are collaborating to define the future of AI.”

AMD Delivers Solutions to Accelerate an Open AI Ecosystem

The company announced a broad portfolio of hardware, software and solutions to power the full spectrum of AI:

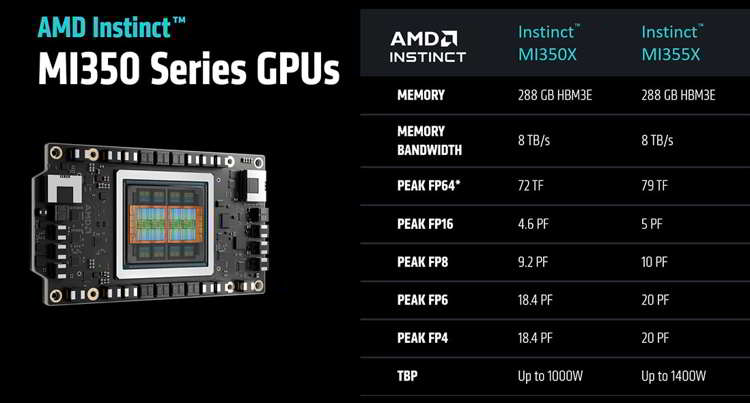

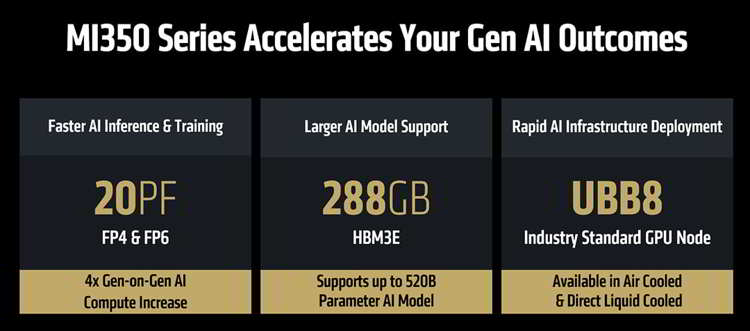

- Unveiled the Instinct MI350 Series GPUs, setting a new benchmark for performance, efficiency and scalability in GenAI and HPC. The MI350 Series, consisting of both Instinct MI350X and MI355X GPUs and platforms, delivers a 4x generation-on-generation increase in AI compute (1) and a 35x generational leap in inference performance (2), paving the way for transformative AI solutions across industries. MI355X also delivers significant price-performance gains, generating up to 40% more tokens per dollar than NVIDIA B200-based solutions (3). (See blog: AMD Instinct MI350 Series and Beyond: Accelerating the Future of AI and HPC.)

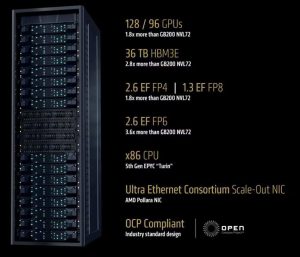

- Demonstrated end-to-end, open-standards rack-scale AI infrastructure that is already rolling out with Instinct MI350 Series accelerators, 5th Gen EPYC processors and Pensando Pollara NICs in hyperscaler deployments such as Oracle Cloud Infrastructure (OCI), and is set for broad availability in 2H 2025.

- AMD also previewed its next-gen AI rack, called ‘Helios.’ It will be built on next-gen Instinct MI400 Series GPUs, ‘Zen 6’-based EPYC ‘Venice’ CPUs and Pensando ‘Vulcano’ NICs. The MI400 Series GPUs are expected to deliver up to 10x more inference performance on Mixture of Experts models than the previous generation (4). (See blog: AMD Delivering Open Rack Scale AI Infrastructure.)

- The latest version of the AMD open-source AI software stack, ROCm 7, is engineered to meet the growing demands of generative AI and high-performance computing workloads while improving the developer experience across the board. ROCm 7 features improved support for industry-standard frameworks, expanded hardware compatibility and new development tools, drivers, APIs and libraries to accelerate AI development and deployment. (See blog: Enabling the Future of AI: Introducing AMD ROCm 7 and AMD Developer Cloud.)

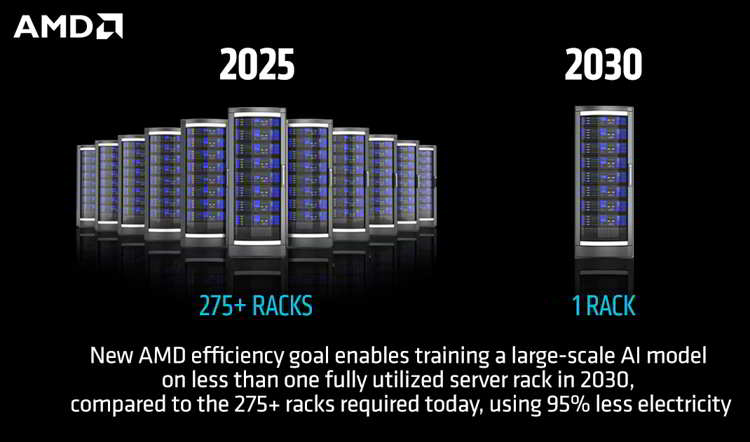

- The Instinct MI350 Series exceeded AMD’s 5-year goal of improving the energy efficiency of AI training and HPC nodes by 30x, ultimately delivering a 38x improvement (5). The company also unveiled a new 2030 goal to deliver a 20x increase in rack-scale energy efficiency from a 2024 base year (6). That would enable a typical AI model that today requires more than 275 racks to be trained on less than one fully utilized rack by 2030, using 95% less electricity (7). (See blog: AMD Surpasses 30×25 Goal, Sets Ambitious New 20x Efficiency Target.)

- AMD also announced the broad availability of the AMD Developer Cloud for the global developer and open-source communities. Purpose-built for rapid, high-performance AI development, it gives users a fully managed cloud environment with the tools and flexibility to get started with AI projects and grow without limits. With ROCm 7 and the AMD Developer Cloud, AMD is lowering barriers and expanding access to next-gen compute. Strategic collaborations with leaders like Hugging Face, OpenAI and Grok are proving the power of co-developed, open solutions.

AMD open scale rack infrastructure

Broad Partner Ecosystem Showcases AI Progress Powered by AMD

Today, seven of the 10 largest model builders and AI companies are running production workloads on Instinct accelerators. Among those companies are Meta, OpenAI, Microsoft and xAI, who joined AMD and other partners at Advancing AI to discuss how they are working with AMD on AI solutions to train today’s leading AI models, power inference at scale and accelerate AI exploration and development:

- Meta detailed how Instinct MI300X is broadly deployed for Llama 3 and Llama 4 inference. Meta shared excitement for MI350 and its compute power, performance-per-TCO and next-gen memory. Meta continues to collaborate closely with AMD on AI roadmaps, including plans for the Instinct MI400 Series platform.

- Sam Altman, CEO, OpenAI, discussed the importance of holistically optimized hardware, software and algorithms and OpenAI’s close partnership with AMD on AI infrastructure, with research and GPT models on Azure in production on MI300X, as well as deep design engagements on MI400 Series platforms.

- Oracle Cloud Infrastructure (OCI) is among the first industry leaders to adopt the AMD open rack-scale AI infrastructure with Instinct MI355X GPUs. OCI leverages AMD CPUs and GPUs to deliver balanced, scalable performance for AI clusters, and announced it will offer zettascale AI clusters accelerated by the latest AMD Instinct processors with up to 131,072 MI355X GPUs, enabling customers to build, train and run inference on AI models at scale.

- Humain discussed its landmark agreement with AMD to build open, scalable, resilient and cost-efficient AI infrastructure leveraging the full spectrum of computing platforms only AMD can provide.

- Microsoft announced Instinct MI300X is now powering both proprietary and open-source models in production on Azure.

- Cohere shared that its high-performance, scalable Command models are deployed on Instinct MI300X, powering enterprise-grade LLM inference with high throughput, efficiency and data privacy.

- Red Hat described how its expanded collaboration with AMD enables production-ready AI environments, with AMD Instinct GPUs on Red Hat OpenShift AI delivering powerful, efficient AI processing across hybrid cloud environments.

- Astera Labs highlighted how the open UALink ecosystem accelerates innovation and delivers greater value to customers and shared plans to offer a comprehensive portfolio of UALink products to support next-gen AI infrastructure.

- Marvell Technology, Inc. joined AMD to highlight its collaboration as part of the UALink Consortium developing an open interconnect, bringing the ultimate flexibility for AI infrastructure.

(1) Based on calculations by AMD Performance Labs in May 2025, to determine the peak theoretical precision performance of eight (8) AMD Instinct MI355X and MI350X GPUs (Platform) and eight (8) AMD Instinct MI325X, MI300X, MI250X and MI100 GPUs (Platform) using the FP16, FP8, FP6 and FP4 datatypes with Matrix. Server manufacturers may vary configurations, yielding different results. Results may vary based on use of the latest drivers and optimizations.

(2) MI350-044: Based on AMD internal testing as of 6/9/2025, using an 8-GPU AMD Instinct MI355X Platform to measure online-serving inference throughput (generated text) for the Llama 3.1-405B chat model using FP4, compared with 8-GPU AMD Instinct MI300X Platform performance using FP8. The test was performed using an input length of 32,768 tokens and an output length of 1,024 tokens, with concurrency set to the best available throughput within 60ms on each platform: 1 for MI300X (35.3ms) and 64 for MI355X platforms (50.6ms). Server manufacturers may vary configurations, yielding different results. Performance may vary based on use of latest drivers and optimizations.

(3) Based on performance testing by AMD Labs as of 6/6/2025, measuring text-generation inference throughput on the Llama 3.1-405B model using the FP4 datatype with various combinations of input and output token lengths on an AMD Instinct MI355X 8x GPU platform, and published results for the NVIDIA B200 HGX 8xGPU. Performance per dollar was calculated with current pricing for NVIDIA B200 available from the CoreWeave website and expected pricing for Instinct MI355X-based cloud instances. Server manufacturers may vary configurations, yielding different results. Performance may vary based on use of latest drivers and optimizations. Current customer pricing as of June 10, 2025, and subject to change. MI350-049
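Footnote (3) derives performance per dollar from measured throughput and instance pricing. A minimal Python sketch of that kind of arithmetic follows, assuming tokens per dollar is computed as throughput multiplied by one hour of runtime and divided by the hourly instance price. The function names and every input value are hypothetical placeholders, not AMD's or NVIDIA's measured figures or prices.

```python
# Hypothetical sketch of a tokens-per-dollar comparison. The formula is an
# ASSUMPTION about how such a metric is typically computed; all inputs are
# placeholders, NOT measured throughput or real pricing.


def tokens_per_dollar(tokens_per_second: float, dollars_per_hour: float) -> float:
    """Tokens generated per dollar of instance time (3600 seconds per hour)."""
    return tokens_per_second * 3600 / dollars_per_hour


def relative_gain(a: float, b: float) -> float:
    """Fractional advantage of a over b (0.4 means 40% more)."""
    return a / b - 1


# Placeholder 8-GPU platform numbers, chosen only to exercise the functions
platform_a = tokens_per_dollar(tokens_per_second=1200.0, dollars_per_hour=18.0)
platform_b = tokens_per_dollar(tokens_per_second=1000.0, dollars_per_hour=20.0)

print(f"A: {platform_a:,.0f} tokens/$, B: {platform_b:,.0f} tokens/$")
print(f"A delivers {relative_gain(platform_a, platform_b):.0%} more tokens per dollar")
```

Because the metric is a ratio of throughput to price, a platform can lead on tokens per dollar through higher throughput, lower pricing, or both.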

(4) MI400-001: Performance projection as of 06/05/2025 using engineering estimates based on the design of a future AMD Instinct MI400 Series GPU compared to the Instinct MI355X, with 2K and 16K prefill with TP8, EP8 and projected inference performance, and using a GenAI training model evaluated with GEMM and Attention algorithms for the Instinct MI400 Series. Results may vary when products are released in market.

(5) EPYC-030a: Calculation includes 1) base case kWhr use projections in 2025 conducted with Koomey Analytics based on available research and data that includes segment-specific projected 2025 deployment volumes and data center power usage effectiveness (PUE), including GPU HPC and machine learning (ML) installations, and 2) AMD CPU and GPU node power consumptions incorporating segment-specific utilization (active vs. idle) percentages and multiplied by PUE to determine actual total energy use for calculation of the performance per Watt. 38x is calculated using the following formula: (base case HPC node kWhr use projection in 2025 * AMD 2025 perf/Watt improvement using DGEMM and TEC + base case ML node kWhr use projection in 2025 * AMD 2025 perf/Watt improvement using ML math and TEC) / (base case projected kWhr usage in 2025).
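Written as an equation, the 38x formula in footnote (5) takes the form below. The symbols are introduced here only for readability and are not AMD's notation: $E_{\mathrm{HPC}}$ and $E_{\mathrm{ML}}$ are the base-case 2025 kWhr projections for HPC and ML nodes, $G_{\mathrm{HPC}}$ and $G_{\mathrm{ML}}$ are the AMD 2025 perf/Watt improvements measured with DGEMM and TEC and with ML math and TEC respectively, and $E_{\mathrm{base}}$ is the base-case projected kWhr usage in 2025.

$$
\text{Improvement}_{2025} = \frac{E_{\mathrm{HPC}}\, G_{\mathrm{HPC}} + E_{\mathrm{ML}}\, G_{\mathrm{ML}}}{E_{\mathrm{base}}}
$$

If $E_{\mathrm{base}} = E_{\mathrm{HPC}} + E_{\mathrm{ML}}$, which the footnote does not state explicitly, the 38x figure is an energy-weighted average of the HPC and ML perf/Watt improvements.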

(6) AMD modeled advanced racks for AI training/inference in each year (2024 to 2030) based on AMD roadmaps, also examining historical trends to inform rack design choices and technology improvements so that projected goals align with historical trends. The 2024 rack is based on the MI300X node, which is comparable to the NVIDIA H100 and reflects common practice in AI deployments in the 2024/2025 timeframe. The 2030 rack is based on AMD system and silicon design expectations for that timeframe. In each case, AMD specified components such as GPUs, CPUs, DRAM, storage, cooling and communications, tracking component and defined rack characteristics for power and performance. Calculations do not include power used for cooling air or water supply outside the racks but do include power for fans and pumps internal to the racks.

Performance improvements are estimated based on progress in compute output (delivered, sustained, not peak FLOPS), memory (HBM) bandwidth, and network (scale-up) bandwidth, expressed as indices and weighted by the following factors for training and inference.

| Workload | FLOPS | HBM BW | Scale-up BW |
|---|---|---|---|
| Training | 70.0% | 10.0% | 20.0% |
| Inference | 45.0% | 32.5% | 22.5% |

Together, performance and power use per rack imply trends in performance per watt over time for training and inference. The training and inference progress indices are then weighted 50:50 to produce the final estimate of AMD's projected progress by 2030 (20x). The performance number assumes continued progress in AI models' use of lower-precision math formats for both training and inference, which results in both an increase in effective FLOPS and a reduction in required bandwidth per FLOP.
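The weighting in footnote (6) can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not AMD's model. Only the weights (training: 70% FLOPS, 10% HBM BW, 20% scale-up BW; inference: 45%, 32.5%, 22.5%) and the 50:50 split come from the footnote. The sketch assumes that each workload's performance index is a weighted arithmetic mean of the component improvement indices, since the footnote does not say how they are combined, and that performance per watt is that index divided by a rack power ratio. The improvement values and power ratio in the usage example are hypothetical placeholders.

```python
# Minimal sketch of the footnote (6) weighting. Weights and the 50:50 split are
# from the footnote; combining indices by weighted arithmetic mean and dividing
# by a single rack power ratio are ASSUMPTIONS made for illustration.

WEIGHTS = {
    "training":  {"flops": 0.70, "hbm_bw": 0.10, "scaleup_bw": 0.20},
    "inference": {"flops": 0.45, "hbm_bw": 0.325, "scaleup_bw": 0.225},
}


def workload_perf_index(improvements: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted arithmetic mean of component improvement indices (assumed form)."""
    return sum(weights[name] * improvements[name] for name in weights)


def projected_perf_per_watt(improvements: dict[str, float], power_ratio: float) -> float:
    """Blend training and inference perf-per-watt indices 50:50.

    `power_ratio` is a hypothetical ratio of future rack power to baseline rack power.
    """
    training = workload_perf_index(improvements, WEIGHTS["training"]) / power_ratio
    inference = workload_perf_index(improvements, WEIGHTS["inference"]) / power_ratio
    return 0.5 * training + 0.5 * inference


# Hypothetical component improvements (future rack vs. baseline rack)
example = {"flops": 10.0, "hbm_bw": 4.0, "scaleup_bw": 6.0}
print(f"{projected_perf_per_watt(example, power_ratio=2.0):.4f}")
```

With these placeholder inputs the blended index is about 3.94. The heavier HBM bandwidth weight for inference means memory bandwidth gains move the inference index more than the training index.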

(7) AMD estimated the number of racks needed to train a typical notable AI model based on Epoch AI data. For this calculation we assume, based on these data, that a typical model takes 10^25 floating point operations to train (based on the median of 2025 data), and that this training takes place over 1 month. FLOPS needed = 10^25 FLOPs / (seconds per month) / model FLOPs utilization (MFU) = 10^25 / (2.6298 × 10^6) / 0.6. Racks = FLOPS needed / (FLOPS per rack in 2024 and 2030). The compute performance estimates from the AMD roadmap suggest that approximately 276 racks would be needed in 2025 to train a typical model over one month using the MI300X product (assuming 22.656 PFLOPS/rack with 60% MFU) and that less than 1 fully utilized rack would be needed to train the same model in 2030 using a rack configuration based on an AMD roadmap projection. These calculations imply a >276-fold reduction in the number of racks needed to train the same model over this six-year period. Electricity use for a MI300X system to completely train a defined 2025 AI model using a 2024 rack is calculated at ~7 GWh, whereas the future 2030 AMD system could train the same model using ~350 MWh, a 95% reduction. AMD then applied carbon intensities per kWh from the International Energy Agency World Energy Outlook 2024 [https://www.iea.org/reports/world-energy-outlook-2024]. The IEA's stated policies scenario gives carbon intensities for 2023 and 2030. We determined the average annual change in intensity from 2023 to 2030 and applied it to the 2023 intensity to get the 2024 intensity (434 g CO2/kWh), versus the 2030 intensity (312 g CO2/kWh). Emissions for the 2024 baseline scenario of 7 GWh × 434 g CO2/kWh equate to approximately 3,000 metric tCO2, versus around 100 metric tCO2 for the future 2030 scenario of 350 MWh × 312 g CO2/kWh.
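The rack-count, electricity and emissions arithmetic in footnote (7) can be checked with a short Python sketch. It uses only the inputs quoted in the footnote (10^25 training FLOPs, 2.6298 × 10^6 seconds per month, 60% MFU, 22.656 PFLOPS per MI300X-based rack, ~7 GWh and ~350 MWh of electricity, 434 and 312 g CO2/kWh). The function names are illustrative, and this is not AMD's own calculation code.

```python
# Sketch of the footnote (7) arithmetic using only the inputs it quotes.

TRAINING_FLOPS = 1e25          # total floating point operations to train the model
SECONDS_PER_MONTH = 2.6298e6   # one-month training window
MFU = 0.6                      # model FLOPs utilization
RACK_PFLOPS_2024 = 22.656      # PFLOPS per MI300X-based rack, as stated


def flops_rate_needed(total_flops: float, seconds: float, mfu: float) -> float:
    """FLOP/s of rack capacity needed to finish total_flops in `seconds` at the given MFU."""
    return total_flops / seconds / mfu


def racks_needed(rate_needed: float, rack_pflops: float) -> float:
    """Number of racks, given each rack's capacity in PFLOPS (1 PFLOPS = 1e15 FLOP/s)."""
    return rate_needed / (rack_pflops * 1e15)


def emissions_tonnes(energy_kwh: float, grams_co2_per_kwh: float) -> float:
    """Metric tonnes of CO2 (1 t = 1e6 g)."""
    return energy_kwh * grams_co2_per_kwh / 1e6


rate = flops_rate_needed(TRAINING_FLOPS, SECONDS_PER_MONTH, MFU)
racks_2024 = racks_needed(rate, RACK_PFLOPS_2024)

energy_2024_kwh = 7e6      # ~7 GWh
energy_2030_kwh = 350e3    # ~350 MWh
reduction = 1 - energy_2030_kwh / energy_2024_kwh

co2_2024 = emissions_tonnes(energy_2024_kwh, 434)
co2_2030 = emissions_tonnes(energy_2030_kwh, 312)

print(f"FLOP/s needed:      {rate:.3e}")
print(f"Racks needed, 2024: {racks_2024:.1f}")
print(f"Energy reduction:   {reduction:.0%}")
print(f"CO2, 2024 vs 2030:  {co2_2024:,.0f} t vs {co2_2030:,.0f} t")
```

With these inputs the 2024 rack count comes out at roughly 280, in the same range as the approximately 276 quoted in the footnote. The energy reduction evaluates to 95%, and the emissions to about 3,038 and 109 metric tCO2, which the footnote rounds to approximately 3,000 and around 100.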
