Panmnesia Expands Into AI Accelerator Interconnects Including UALink and Ethernet

Securing ~$10M R&D project for controller and switch SoC development

This is a Press Release edited by StorageNewsletter.com on April 13, 2026 at 2:00 pm.

Panmnesia, a company specializing in AI semiconductor interconnect technologies, announced that it has secured an R&D project worth approximately $10M focused on next-generation interconnect technologies for AI data centers. The project covers the development of link controllers and switches based on open standards such as UALink (Ultra Accelerator Link) and Ethernet-based interconnect protocols. (An open standard is a technical specification that anyone can freely implement from publicly available specification documents. Because it is not proprietary to any single company, it enables interoperability across products from different manufacturers.)

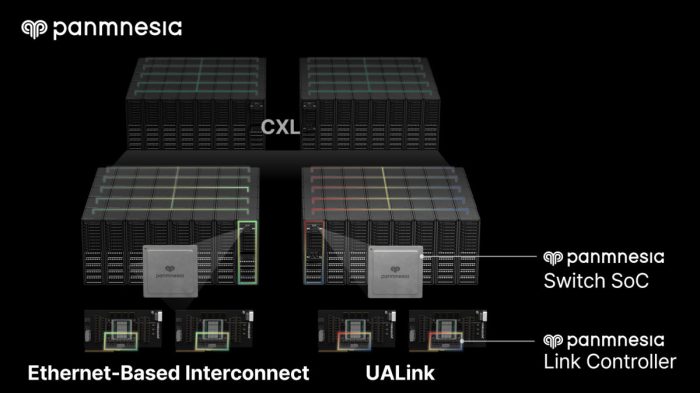

Having already established a strong portfolio of CXL (Compute Express Link) products specialized for memory expansion, Panmnesia is now expanding into accelerator-centric interconnects based on open standards such as UALink and Ethernet. With this move, it broadens its coverage across the full spectrum of core interconnect technologies for AI data centers.

The Importance of Accelerator-to-Accelerator Connectivity

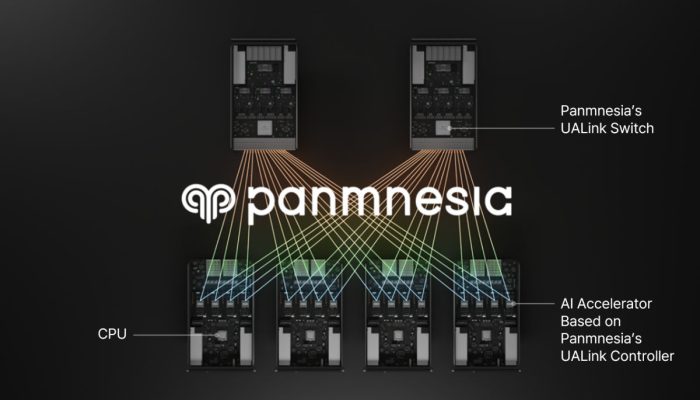

As large-scale AI models proliferate across industries, AI data centers increasingly rely on multiple AI accelerators working in tandem to perform computations. In this context, interconnect technologies that transfer data at high speed between accelerators have emerged as a critical factor determining overall AI system performance.

Expanding the Interconnect Ecosystem with Open Standards Including UALink and Ethernet

In this project, Panmnesia will develop open standard-based interconnect technologies that enable high-speed connectivity among AI accelerators. The effort centers on UALink and Ethernet-based interconnects.

UALink is an open interconnect standard jointly driven by major global technology companies, including AMD, AWS, Google, Microsoft, and Meta, along with Panmnesia and many other member companies. It is designed to provide high-speed connectivity between AI accelerators regardless of manufacturer.

Ethernet-based interconnect technologies are also being proposed and developed. A notable example is ESUN (Ethernet for Scale-up Networking), led by the Open Compute Project (OCP), a leading open standards body for AI infrastructure. Panmnesia is also an active member of OCP.

Under this project, Panmnesia will develop controllers and switches that support open interconnect standards such as UALink and Ethernet-based protocols. A controller is a core semiconductor logic block that lets an AI accelerator communicate with other devices at high speed over these accelerator-centric interconnect protocols. A switch acts as a bridge connecting multiple accelerators over UALink or Ethernet-based protocols, routing traffic between accelerators so that data reaches the correct destination.
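To make the switch's routing role more concrete, here is a toy Python model, assuming a simple lookup table that maps a destination accelerator ID to an output port. The `Packet` and `ToySwitch` names, the integer IDs, and the per-port queues are all hypothetical illustrations; real UALink or Ethernet-based switches define their own packet formats, addressing, and flow control, none of which this sketch attempts to model.

```python
from dataclasses import dataclass, field


@dataclass
class Packet:
    src: int        # hypothetical source accelerator ID
    dst: int        # hypothetical destination accelerator ID
    payload: bytes


@dataclass
class ToySwitch:
    """Toy switch: forwards packets to an output port chosen by destination ID.

    Illustrative only; this is not the UALink or ESUN packet format or routing scheme.
    """
    routes: dict[int, int] = field(default_factory=dict)           # dst accelerator ID -> egress port
    queues: dict[int, list[Packet]] = field(default_factory=dict)  # egress port -> queued packets

    def attach(self, accel_id: int, port: int) -> None:
        # Record which switch port an accelerator is connected to.
        self.routes[accel_id] = port
        self.queues.setdefault(port, [])

    def forward(self, pkt: Packet) -> int:
        # Look up the egress port for the destination; unknown IDs are rejected.
        if pkt.dst not in self.routes:
            raise KeyError(f"no route to accelerator {pkt.dst}")
        port = self.routes[pkt.dst]
        self.queues[port].append(pkt)  # enqueue on the output port toward the destination
        return port


if __name__ == "__main__":
    sw = ToySwitch()
    for accel_id in range(4):
        sw.attach(accel_id, port=accel_id)  # accelerator i sits on port i
    print(sw.forward(Packet(src=0, dst=2, payload=b"activations")))  # prints 2
```

A lookup table is the simplest way to express "reach the correct destination"; production switches add concerns such as credit-based flow control, multiple paths, and error handling that are outside the scope of this sketch.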

Panmnesia also plans to arrange the developed devices in an optimized topology for faster data exchange between them and to conduct rack-level validation. The switch SoC supporting accelerator-centric interconnects such as UALink is expected to be available from the second half of 2027.

Comprehensive Leadership in AI Data Center Interconnect Technologies

Panmnesia has already built a lineup of CXL (Compute Express Link) interconnect products; CXL is a technology that is highly effective for expanding memory capacity in data centers. The CXL lineup comprises full-stack solutions, including PCIe/CXL link controllers and IP, hardware SoCs such as PCIe/CXL fabric switches, and custom silicon solutions.

A Panmnesia representative commented, “Securing this project is a significant milestone, as it validates both our core interconnect technology capabilities and our ability to expand across the broader AI infrastructure landscape,” adding, “We will continue to lead the development of next-generation interconnect technologies.”

Last year, Panmnesia also unveiled the ‘CXL-over-XLink architecture,’ a comprehensive link technology that integrates accelerator-specific links (known as XLink), including UALink, with CXL to enable enhanced connectivity across large-scale AI data centers.
