Arcfra Launches Neutree: Bridging the Gap Between AI Experimentation and Enterprise Production

Introducing a Model-as-a-Service (MaaS) platform

By Philippe Nicolas | March 30, 2026 at 2:01 pm | Blog published February 27, 2026

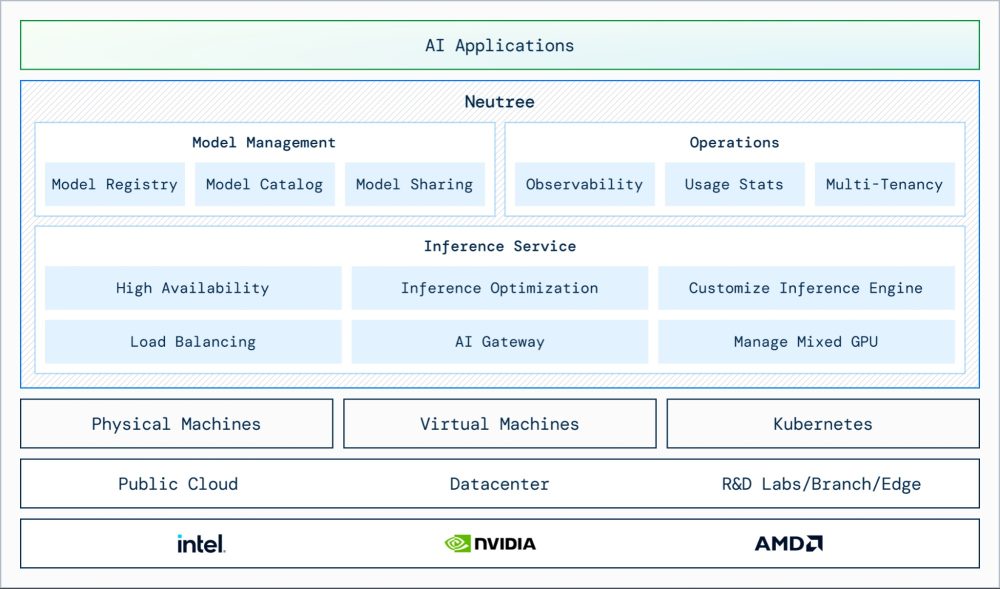

Arcfra, an innovator in cloud & AI-ready infrastructure, announced the launch of Arcfra Neutree, a transformative Model-as-a-Service (MaaS) platform designed to industrialize AI operations. As the centerpiece of the new Arcfra AI Infrastructure Solution, Neutree shifts the enterprise focus from merely “running” models to operating them as reliable, governable, and scalable services.

Bridging the “Production Gap”

While AI experimentation is booming, many enterprises struggle to move models into production due to fragmented GPU capacity, a lack of operational controls, and complex deployment workflows. Neutree addresses these bottlenecks by providing a unified management layer for model inference in private environments.

“Neutree project is a shift in philosophy,” says Yanzhen Yu, R&D manager, Arcfra. “We are moving away from ‘black box’ AI toward a transparent, vendor-agnostic ecosystem where AI is as manageable as any other mission-critical cloud service.”

Open-Source Foundation, Enterprise Strength

Neutree is built on a transparent, community-driven open-source project, ensuring agility and preventing vendor lock-in.

- Neutree (Open-Source): Focuses on core inference management, community innovation, and transparency

- Arcfra Neutree (Enterprise Edition): Adds “industrial-strength” features, including unlimited workspaces, professional 24/7 support, and deep integration with the Arcfra Enterprise Cloud Platform (AECP) for mission-critical stability

Key Features of the Neutree Platform

Deploy Anywhere (Vendor Agnostic)

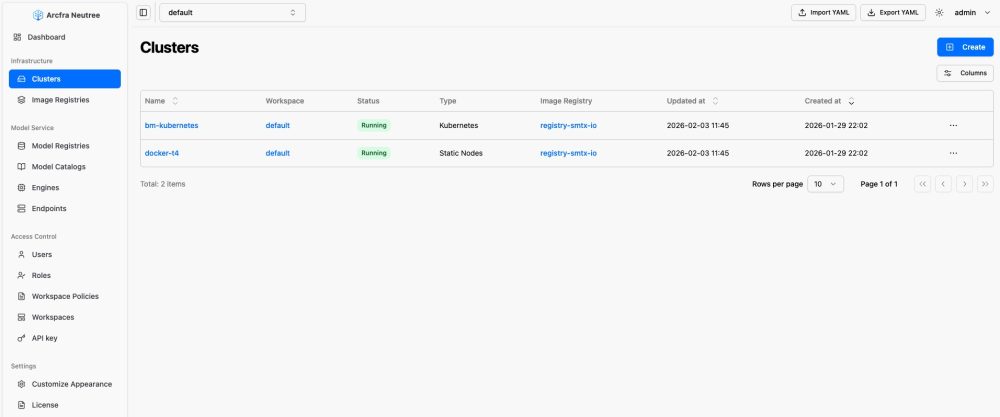

Neutree’s loosely coupled architecture allows it to run on any Kubernetes cluster, physical machine, or virtual machine. It seamlessly integrates heterogeneous accelerators (Nvidia, AMD, Intel) into a unified pool, allowing teams to move workloads from R&D labs to edge devices or data centers without refactoring.

Unified AI Chips and Models Resource Management

- Unified management of heterogeneous CPU/GPU computing resources across data centers, public clouds, branches, and edge sites, enabling centralized orchestration and monitoring of all deployment locations through a single control plane

- Unified management of models of different types and locations

- A local unified model registry that enables unified management and distribution of different types of model files
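To make the idea of a unified accelerator pool concrete, here is a minimal sketch of vendor-agnostic placement. It is not Neutree's scheduler or API: the `Accelerator` class, the `place` function, the device names, and the best-fit policy are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    """One device in the pool; every field name here is hypothetical."""
    name: str
    vendor: str          # e.g. "nvidia", "amd", "intel"
    site: str            # e.g. "datacenter", "edge", "branch"
    free_memory_gb: int


def place(pool, required_memory_gb):
    """Best-fit placement: pick the device with the least free memory
    that still fits the request. Vendor and site are deliberately ignored,
    which is what makes the pool 'unified' in this sketch."""
    candidates = [a for a in pool if a.free_memory_gb >= required_memory_gb]
    if not candidates:
        return None  # no device in any location can host the model
    chosen = min(candidates, key=lambda a: a.free_memory_gb)
    chosen.free_memory_gb -= required_memory_gb  # reserve the memory
    return chosen


# A hypothetical pool spanning three vendors and three locations.
pool = [
    Accelerator("gpu-dc-0", "nvidia", "datacenter", 80),
    Accelerator("gpu-edge-0", "amd", "edge", 24),
    Accelerator("gpu-branch-0", "intel", "branch", 16),
]

first = place(pool, 20)    # fits on the 24 GB device
second = place(pool, 20)   # the 24 GB device is now nearly full
third = place(pool, 100)   # larger than any single device
```

A real scheduler would also weigh locality, interconnect, and engine compatibility; the point here is only that a single control plane can treat devices from different vendors as interchangeable capacity.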

Three-Step “No-Code” Model Deployment

Neutree slashes deployment time from days to minutes through a streamlined UI:

- Download models from Hugging Face (HF) or import them into the local model registry

- With pre-optimized inference parameters built into the Model Catalog, deployment is as simple as specifying compute requirements

- One-click deployment with automatic selection of the optimal built-in inference engine

Operational Observability & Enterprise-Grade Governance

Managing models at scale requires more than just compute. Neutree simplifies Day 2 operations with:

- Multi-Tenancy: Isolated resource spaces for different business units

- Unified Observability: Real-time monitoring, logging, and health checks

- Cost & Access Control: Fine-grained RBAC and token-level usage statistics for precise ROI auditing
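As an illustration of how token-level usage statistics can feed cost auditing, the sketch below sums tokens per workspace and applies a flat price. It does not reflect Neutree's actual data model: the `UsageRecord` fields, the function names, the sample records, and the flat per-1,000-token price are all assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    """One inference request; all field names are hypothetical."""
    workspace: str
    model: str
    prompt_tokens: int
    completion_tokens: int


def tokens_by_workspace(records):
    """Sum prompt and completion tokens for each workspace."""
    totals = defaultdict(int)
    for r in records:
        totals[r.workspace] += r.prompt_tokens + r.completion_tokens
    return dict(totals)


def cost_by_workspace(records, price_per_1k_tokens):
    """Convert token totals to cost, assuming one flat price per 1,000 tokens."""
    return {
        ws: total / 1000 * price_per_1k_tokens
        for ws, total in tokens_by_workspace(records).items()
    }


# Made-up sample data for two business units.
records = [
    UsageRecord("marketing", "model-a", 1200, 300),
    UsageRecord("marketing", "model-b", 500, 0),
    UsageRecord("research", "model-a", 4000, 1000),
]

usage = tokens_by_workspace(records)
cost = cost_by_workspace(records, price_per_1k_tokens=0.5)
```

In practice the price would vary by model and hardware, but grouping usage by tenant in this way is what allows costs to be attributed to individual business units.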

The AECP Advantage: Industrial-Strength AI Infrastructure

While Neutree provides the AI software layer, Arcfra Enterprise Cloud Platform (AECP) provides the “industrial-strength” foundation. The optimized integration (Arcfra AI Infrastructure solution) transforms a flexible tool into a robust enterprise asset, featuring hybrid scheduling, distributed storage, security, and full observability, which enables efficient and scalable AI deployment for real-world business needs.

The combination offers the following key advantages:

- Unified Infrastructure: A single management plane for VM and Kubernetes workloads

- High-Performance Storage: Robust block and file storage optimized for the full AI data lifecycle

- Secure Data Pipeline: Hardened data flow from Raw Data → Labeling → Model Registry → Vector DB

- Advanced Security: App-level micro-segmentation and real-time traffic visualization

- Total Observability: Integrated monitoring, logging, and alerts for proactive management

- Production-Ready: Built for high-availability, intelligent, and mission-critical environments

ConnectWave, a leading South Korean e-commerce provider, partnered with Arcfra to unify orchestration for model training, fine-tuning, and inference. By deploying Arcfra’s lightweight AI infrastructure, they successfully built a private hybrid environment that manages VM and Kubernetes workloads end-to-end. This transition has drastically reduced operational complexity and costs while providing a stable, secure foundation for the intelligent features now powering their global consumer and seller platforms.
