Scale Your AI Ambitions with Dell Storage and Nvidia

Dell Lightning File System and Dell Exascale Storage fuel AI performance for the largest, most demanding environments

By Philippe Nicolas | March 27, 2026 at 2:02 pm

Blog written by David Noy, VP, Product Management, Dell, published March 16, 2026

Advancing AI data access and orchestration at Nvidia GTC

AI innovation is moving faster than ever, and nowhere is that more evident than at Nvidia GTC 2026. As AI infrastructure becomes more powerful and models grow more complex, the question every AI factory must answer is simple: Can storage keep up with compute?

At this year’s GTC, Dell is addressing that challenge with two complementary innovations for extreme‑scale AI:

- Dell Lightning File System, now available globally

- A preview of Dell Exascale Storage, a software‑first storage architecture for AI

Together, they underpin the Dell AI Data Platform and the Dell AI Factory with Nvidia, helping customers move from pilot projects to massive, production AI services – scaling AI without limits by transforming how data is accessed, moved and orchestrated across the AI factory.

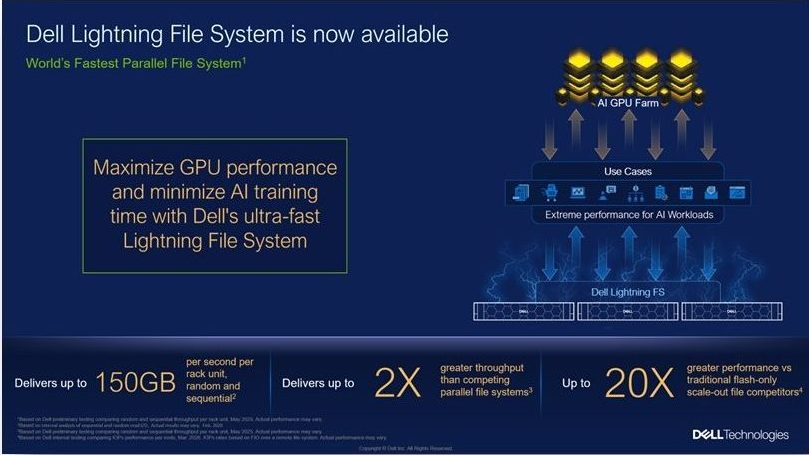

Dell Lightning File System: Extreme performance for extreme‑scale AI

Available globally starting today, Dell Lightning File System (Lightning FS) is the world’s fastest parallel file system.¹ Engineered for extreme-scale AI training and inference, Lightning FS also delivers unsurpassed performance density, reducing hardware space and power requirements. Lightning FS complements Dell PowerScale and Dell ObjectScale as a storage accelerator for the most demanding AI workloads.

Where PowerScale and ObjectScale power the broader AI data lifecycle – including ingest, curation, feature stores, archives and a wide range of training and inference workloads – Lightning FS focuses on a single imperative: Keep accelerated compute fully utilized at massive scale by delivering predictable, high-throughput data access.

Lightning FS is designed for organizations at the outer edge of AI scale – typically Tier 2 cloud service providers and GPU‑as‑a‑Service providers running tens of thousands of GPUs or over 4TB/sec. of aggregate throughput. In these environments, even small inefficiencies can lead to days of lost training time and millions of dollars in stranded GPU investment. Lightning FS is built to saturate large accelerated clusters and minimize training windows with up to 6TB/sec. per rack read performance across both random and sequential workloads,² so providers can deliver more AI capacity on the same footprint.
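To put those figures in perspective, here is a rough sizing sketch in Python. It uses only the numbers quoted above (6TB/sec. per rack, 4TB/sec. aggregate) and treats the per-rack figure as a best-case ceiling. The function names, the 20TB/sec. target and the 20,000-GPU count are illustrative assumptions, not Dell sizing guidance.

```python
import math

# "Up to 6TB/sec. per rack" read figure quoted in the post; treated here
# as a best-case ceiling, since actual results may vary.
RACK_READ_TBPS = 6.0


def racks_needed(target_tbps: float, per_rack_tbps: float = RACK_READ_TBPS) -> int:
    """Minimum number of whole racks to reach a target aggregate read rate."""
    return math.ceil(target_tbps / per_rack_tbps)


def per_gpu_gbytes_per_s(aggregate_tbps: float, gpu_count: int) -> float:
    """Even share of aggregate throughput per GPU, in GB/s (decimal units)."""
    return aggregate_tbps * 1000 / gpu_count


# The 4TB/sec. threshold mentioned above fits within one rack at the quoted peak.
print(racks_needed(4.0))
# A hypothetical 20TB/sec. target needs four racks at the quoted peak.
print(racks_needed(20.0))
# 4TB/sec. spread evenly over a hypothetical 20,000 GPUs, in GB/s per GPU.
print(per_gpu_gbytes_per_s(4.0, 20_000))
```

An even split is a simplification: real training jobs read in bursts (checkpoint loads, dataset shuffles), so peak demand per GPU can be far higher than the average share.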

Fabric‑bound architecture with direct NVMe access

Unlike legacy parallel file systems that depend heavily on CPU‑bound controller nodes and complex write caching, Lightning FS uses a fabric‑bound architecture with direct NVMe access and redirect‑on‑write semantics. This design avoids common bottlenecks such as:

- Overloaded metadata or controller nodes

- Cache thrashing under mixed I/O profiles

- Performance cliffs as clusters grow

The result is near-line‑speed efficiency for both sequential and random reads, even under highly concurrent AI workloads. Lightning FS is engineered to deliver maximum throughput for mixed I/O patterns and to scale linearly as you add nodes, helping teams plan bandwidth and GPU feeding rates with confidence. Based on Dell internal testing, Lightning FS can deliver up to 2x greater throughput per rack unit than competing parallel file systems (actual results may vary),³ and up to 20x greater performance than traditional flash-only scale-out file competitors.⁴

Because Lightning FS provides direct data access to NVMe devices rather than relying on large, fragile caches, it maintains consistent performance across mixed workloads. This helps minimize data stalls, reduce tail latencies and keep GPU utilization high – whether you are training frontier models, fine‑tuning customer‑specific variants or powering large‑scale inference.

Integrated with Nvidia‑based AI architectures

Lightning FS is designed to integrate cleanly into Nvidia-based AI infrastructures, including deployments aligned with the Dell AI Factory with Nvidia. Building on earlier work under Project Lightning, Dell and Nvidia have collaborated on high‑performance inferencing solutions that combine Dell storage with Nvidia KV cache and Nvidia NIXL libraries to accelerate large‑scale LLM inference.

As part of that blueprint, Lightning FS helps customers saturate massive server farms and GPU clusters, enabling breakthrough performance for both training and inference while lowering integration risk. And because Lightning FS is software‑defined on qualified Dell PowerEdge servers, customers can take advantage of new server and fabric generations over time without re‑architecting their data layer.

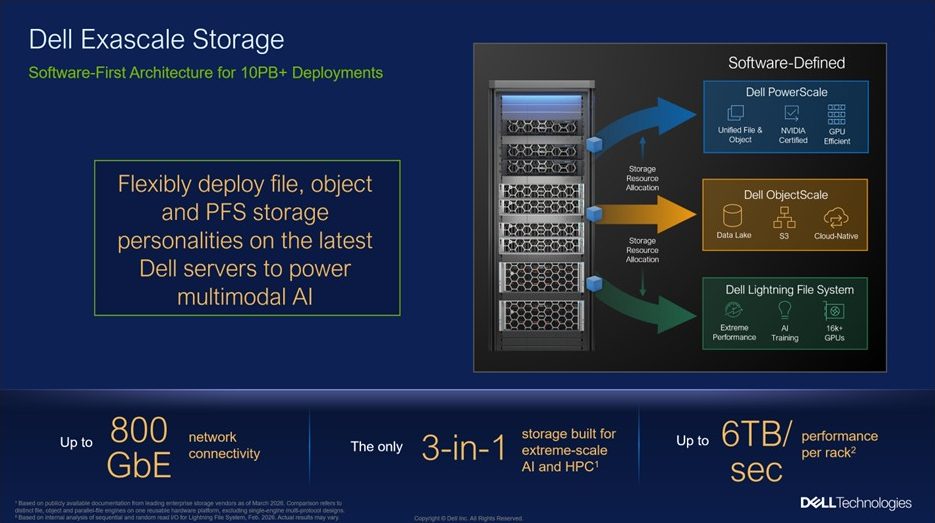

Dell Exascale Storage: software‑first storage for the largest AI environments

Dell Exascale Storage, the only 3-in-1 storage built for extreme-scale AI and HPC,⁵ gives IT teams the flexibility to deploy Dell’s best-of-breed file, object and parallel file system storage software on the latest Dell PowerEdge servers. Customers can allocate PowerScale, ObjectScale and/or Lightning File System storage resources on a common hardware platform to support the most demanding AI and HPC environments, including high-frequency trading and neoclouds. Instead of standing up separate storage appliances for each service or protocol, organizations can use this architecture to run multiple storage personalities on the same high-powered PowerEdge designs optimized for AI training and inference.

A flexible way to run file, object and parallel file

Under the covers, Dell Exascale Storage provides:

- A common, globally scalable architectural foundation

- A set of software-defined AI storage capabilities – including our proven PowerScale, ObjectScale and Lightning File System – delivered under one operating and control pattern

- A single model that can span capacity and archive, primary data services and ultra-low-latency AI training and inference workload tiers

- Up to 150GB/second per rack unit read performance,⁶ delivering high throughput to keep GPUs fed and reduce I/O bottlenecks in demanding AI workloads

- Planned network connectivity of up to 800GbE, enabled by support for Nvidia ConnectX-8 and ConnectX-9 SuperNICs, delivering high‑bandwidth, low‑latency data paths
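As a back-of-the-envelope check on how the throughput and network figures in this list relate, the Python sketch below converts an 800GbE link to bytes per second and estimates how many such links one rack unit would need to carry 150GB/second. It ignores protocol overhead and assumes decimal units; the function names and the 6TB/sec. target are illustrative assumptions, not part of any Dell specification.

```python
import math

PER_RU_READ_GBYTES = 150.0  # "up to 150GB/second per rack unit" quoted above
LINK_GBIT = 800.0           # planned 800GbE connectivity quoted above


def link_gbytes_per_s(link_gbit: float) -> float:
    """Raw line rate of an Ethernet link in GB/s (8 bits per byte, no overhead)."""
    return link_gbit / 8


def links_per_rack_unit(read_gbytes: float, link_gbit: float) -> int:
    """Minimum whole links needed to carry a per-rack-unit read rate."""
    return math.ceil(read_gbytes / link_gbytes_per_s(link_gbit))


def rack_units_for(target_tbps: float, per_ru_gbytes: float = PER_RU_READ_GBYTES) -> int:
    """Rack units needed to reach a target aggregate read rate in TB/s."""
    return math.ceil(target_tbps * 1000 / per_ru_gbytes)


print(link_gbytes_per_s(LINK_GBIT))                        # GB/s per 800GbE link
print(links_per_rack_unit(PER_RU_READ_GBYTES, LINK_GBIT))  # links per rack unit
print(rack_units_for(6.0))                                 # rack units for 6TB/s
```

At raw line rate, one 800GbE link carries 100GB/second, so a single rack unit delivering the quoted peak would need at least two of them. The last line shows that 40 rack units at the quoted per-unit rate add up to 6TB/second; the post does not say whether this is how the per-rack Lightning FS figure was derived.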

For large providers, Dell Exascale Storage is designed to improve hardware utilization and TCO by allowing capacity and performance to be repurposed across tenants, regions and services, rather than locked into isolated silos.

Organizations can turn storage into a programmable resource that can be orchestrated alongside compute and networking. As AI stacks become more composable – mixing foundation models, vector databases, KV cache layers and streaming pipelines – teams can focus on building services rather than rebuilding infrastructure. The architecture provides the flexibility multimodal AI workloads require as models increasingly combine text, images, video and sensor data.

Advancing data access and orchestration

With Dell Lightning FS and Dell Exascale Storage, we continue expanding the AI storage innovation at the heart of the Dell AI Data Platform and Dell AI Factory with Nvidia. In our storage portfolio:

- Lightning FS serves as extreme‑performance scratch and training/inference storage for environments at the very top end of AI scale

- Exascale provides the software-first architecture that lets the largest providers run and shift among Lightning FS, PowerScale and ObjectScale storage personalities on the same high-performance PowerEdge designs

Availability

- Dell Lightning File System: available globally today

- Dell Exascale Storage: targeted for availability in early H2 CY2026

¹ Based on Dell preliminary testing comparing random and sequential throughput per rack unit, May 2025. Actual performance may vary.

² Based on internal analysis of sequential and random read I/O, Feb. 2026. Actual results may vary.

³ Based on Dell preliminary testing comparing random and sequential throughput per rack unit, May 2025. Actual performance may vary.

⁴ Based on Dell internal testing comparing IOPS performance per node, Mar. 2026. IOPS rates based on FIO over a remote file system. Actual performance may vary.

⁵ Based on publicly available documentation from leading enterprise storage vendors as of March 2026. Comparison refers to distinct file, object, and parallel‑file engines on one reusable hardware platform, excluding single‑engine multi‑protocol designs.

⁶ Based on internal analysis of sequential and random read I/O, Feb. 2026. Actual results may vary.

Comments

Announced by Michael Dell at Dell World 2024 - nearly two years ago - Project Lightning has now become a reality.

It's a strong illustration of the pressure AI is placing on infrastructure vendors.

It also highlights a long-standing gap: Dell, and previously EMC, have not been deeply positioned in high-performance storage for HPC. This has been evident through partnerships with companies like ThinkParQ. Historically, Dell focused more on block storage backends and paid limited attention to this segment. AI, however, has changed the equation, acting as both a trigger and a wake-up call. Like others in the same position - NetApp and Pure Storage included - Dell had to respond.

As we've often said, modern workloads demand modern architectures. Acquisitions could have accelerated time to market, but that path appears to have been ruled out. Similarly, building a fully owned solution matters as customers increasingly evaluate long-term investment and control. This context also helps explain initiatives like DDN's Infinia project, despite its already strong HPC presence with ExaScaler.

Over the years, EMC and later Dell have acquired numerous storage companies, particularly in file storage: CrosStor in 2000, Isilon in 2010 (now PowerScale), and Data General with Clariion, which evolved into IP4700, aka Cameleon. The file storage journey has been extensive, spanning Celerra, HighRoad, MPFS extensions, VNX, and Unity, reflecting a shift toward unified systems. Additional technology acquisitions include Maginatics, Rainfinity, and CAS solutions like FilePool, which became Centera, as well as Storigen. We previously detailed this history in a 2019 article.

Dell's first response was to extend PowerScale with a parallel approach using pNFS. This aligns with similar moves from competitors: NetApp with AFX, Pure Storage with FlashBlade//EXA, Hammerspace with Data Platform, and others. Alternative "NFS+" models also exist from vendors like Vast Data and Huawei. Meanwhile, "traditional" parallel file systems continue to be offered by players such as DDN, IBM, Quobyte, ThinkParQ, Vdura, Weka, and Lustre-based ecosystems.

The second - and more significant - response is the Lightning File System (LFS), although details remain limited. Based on available information, LFS is a pure software solution positioned as a software-defined parallel file system coupling metadata servers, client software and data servers full of NVMe units. Performance is said to scale nearly linearly with node expansion and deliver high efficiency for both sequential and random reads.

Key architectural elements include redirect-on-write semantics with native indexing, and the removal of traditional file system layers on storage nodes. This allows direct access to NVMe blocks/extents/chunks/segments/fragments through what Dell calls a "fabric-bound architecture". By bypassing file system layers, the design aims to minimize latency and maximize I/O throughput - critical for continuously feeding GPUs.

LFS is clearly positioned for AI workloads. The open question is whether HPC will remain a secondary focus for Dell.

Overall, Dell's strategy appears to revolve around an "Exascale" model, combining PowerScale and ObjectScale to address diverse workloads and requirements. More details are expected at Dell World 2026, taking place May 18–21 in Las Vegas.
