Nvidia GTC 2026: Nvidia Launches BlueField-4 STX Storage Architecture With Broad Industry Adoption

Confirming the role of DPUs, and of the BlueField line in particular

This is a Press Release edited by StorageNewsletter.com on March 17, 2026 at 2:02 pm

Summary:

- New Nvidia STX reference architecture provides up to 5x token throughput and up to 4x energy efficiency with 2x faster data ingestion

- Early adopters of STX for context memory storage include CoreWeave, Crusoe, IREN, Lambda, Mistral AI, Nebius, Oracle Cloud Infrastructure (OCI) and Vultr

- Storage providers and manufacturing partners are building infrastructure using Nvidia modular reference designs to advance agentic AI, including AIC, Cloudian, DDN, Dell Technologies, Everpure, Hitachi Vantara, HPE, IBM, MinIO, NetApp, Nutanix, Supermicro, Quanta Cloud Technology (QCT), Vast Data and Weka

Nvidia Corp. announced Nvidia BlueField-4 STX, a modular reference architecture that enables enterprises, cloud and AI providers to easily deploy accelerated storage infrastructure capable of the long-context reasoning required for agentic AI.

Traditional data centers provide high-capacity, general-purpose storage but lack the responsiveness required for seamless interaction with AI agents that work across many steps, tools and sessions. Agentic AI demands real-time access to data and contextual working memory to keep conversations and tasks fast and coherent. As context grows, traditional storage and data paths can slow AI inference and reduce GPU utilization.

Nvidia STX allows storage providers to build infrastructure that keeps data close and accessible at scale, so agentic AI factories can deliver higher throughput and responsiveness across inference, training and analytics.

The first rack-scale implementation includes the new Nvidia CMX context memory storage platform, which expands GPU memory with a high-performance context layer for scalable inference and agentic systems – providing up to 5x tokens per second compared with traditional storage.

“Agentic AI is redefining what software can do – and the computing infrastructure behind it must be reinvented to keep pace,” said Jensen Huang, founder and CEO, Nvidia. “AI systems that reason across massive context and continuously learn require a new class of storage. Nvidia STX reinvents the storage stack, providing a modular foundation for AI-native infrastructure that keeps AI factories operating at peak performance.”

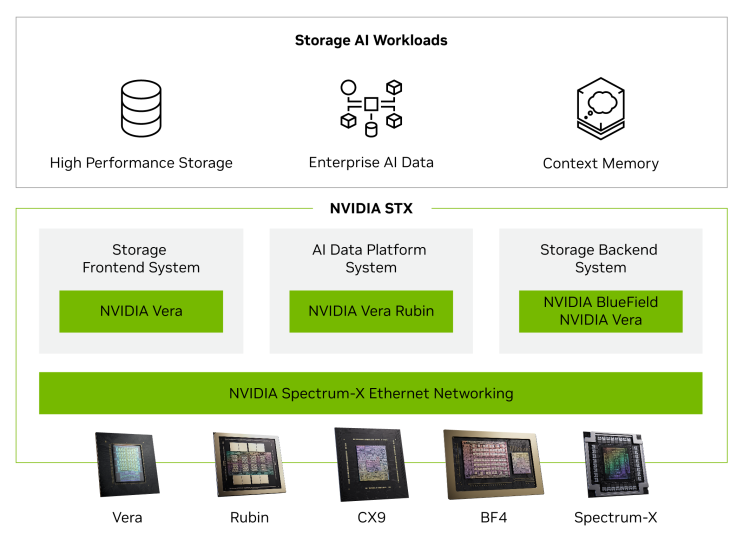

STX is accelerated by the Nvidia Vera Rubin platform and harnesses a new, storage-optimized Nvidia BlueField-4 processor that combines the Nvidia Vera CPU with Nvidia ConnectX-9 SuperNIC, together with Nvidia Spectrum-X Ethernet networking, Nvidia DOCA and Nvidia AI Enterprise software.

The STX architecture also enables 4x higher energy efficiency compared with traditional CPU architectures for high-performance storage and can ingest 2x more pages per second for enterprise AI data.

Storage provider partners co-designing next-generation AI infrastructure based on Nvidia STX include Cloudian, DDN, Dell Technologies, Everpure, Hitachi Vantara, HPE, IBM, MinIO, NetApp, Nutanix, Vast Data and Weka.

Manufacturing partners building STX-based systems include AIC, Supermicro and Quanta Cloud Technology (QCT).

Leading AI labs and cloud service providers planning to adopt STX for context memory storage include CoreWeave, Crusoe, IREN, Lambda, Mistral AI, Nebius, OCI and Vultr.

STX-based platforms will be available from partners in the second half of this year.

Comments

Nvidia GTC has become a key industry benchmark, where nearly all IT players - especially in today’s AI-driven landscape - come together to showcase new products, partnerships, technologies, and strategic initiatives. The event plays a critical role in shaping the direction of the industry.

It’s also the annual spotlight moment for Jensen Huang, who takes the stage to unveil the latest product iterations and set the tone for what’s next.

This year was no exception. On March 16, Nvidia delivered an impressive wave of announcements across multiple fronts, with around 20 press releases and blog posts published in a single day.

As Jensen Huang emphasized during his keynote, ecosystems and partnerships are central to advancing Nvidia's vision, particularly around the growing importance of algorithms. The recurring themes were “AI factory” and “AI data platform,” alongside the continued prominence of CUDA, supporting libraries, vertical approaches and the underlying hardware stack.

On the storage front, the key announcement is the Nvidia STX reference architecture, including the BlueField-4 STX storage architecture highlighted in the related news.

The STX model brings together Vera Rubin, BlueField-4 (which couples the Vera CPU with the ConnectX-9 SuperNIC) and Spectrum-X, and is co-designed with key Nvidia partners. This collaborative approach is crucial for accelerating market adoption and further expanding Nvidia's footprint in the industry.

In more detail, this translates into three main components:

- Storage Frontend System: leveraging Vera CPUs, the goal is to enable partner software to be easily ported while significantly reducing I/O latency. MinIO and Weka are strong examples of this approach.

- AI Data Platform System: powered by Rubin GPUs, designed to accelerate and scale AI data processing workloads.

- Storage Backend System: built on Nvidia BlueField-4 DPUs combined with Vera CPUs, and enhanced by the Context Memory Storage platform to improve indexing and key-value cache performance.

More players are expected to join this ecosystem, and we’ll likely see new product iterations built on the STX architecture emerge in the near future.
