MWC 2026: GIGABYTE Powers Telecom AI Transformation with End-to-End Infrastructure

Spanning AI factories and supercomputing to digital twins, cloud hosting, and edge AI, the portfolio enables telecommunications operators to turn network data into intelligence, automation, and new revenue streams

This is a Press Release edited by StorageNewsletter.com on March 4, 2026 at 2:01 pm.

Gigabyte Technology Co. Ltd. is showcasing its expanded end-to-end AI infrastructure portfolio, designed specifically for the telecommunications industry, at MWC 2026 (5F60, Hall 5, Fira Gran Via).

The company’s end-to-end product solutions enable operators to convert massive volumes of network data into intelligence, automation, and new revenue streams, as telecommunications networks evolve from data carriers into AI-powered digital platforms.

From Network Data to AI Value: Building Telco’s AI Factory

At the core of the telco-to-AI transformation is the AI Factory, where network and subscriber data are converted into operational intelligence and commercialized AI services. Gigabyte addresses this need with the GB300 NVL72, a liquid-cooled rack-scale platform that integrates 72 Nvidia Blackwell Ultra GPUs and 36 Nvidia Grace CPUs in a single system. Networked through Nvidia Quantum-X800 InfiniBand or Spectrum-X Ethernet with ConnectX-8 SuperNICs, the GB300 NVL72 is optimized for large-scale AI training and inference, enabling operators to automate operations, optimize network planning, and deploy AI-driven services at telecom scale.

Powering the Data-to-AI Pipeline with AI and HPC Supercomputing

G894-SD3-AAX7

To eliminate bottlenecks across the data-to-AI pipeline, Gigabyte extends its AI and HPC portfolio into the telecom domain with platforms built on Nvidia and AMD accelerator architectures. The G894-SD3-AAX7, powered by Nvidia HGX B300, supports workloads such as real-time traffic analytics, reasoning models, and large-scale AI training.

XN24-VC0-LA61

For converged AI and HPC environments, the XN24-VC0-LA61, based on Nvidia MGX architecture and Nvidia GB200 Grace Blackwell NVL4 Superchips, uses direct liquid cooling to enable dense, energy-efficient deployment.

G893-ZX1-AAX4

Gigabyte also showcases the G893-ZX1-AAX4, which combines AMD EPYC 9005/9004 CPUs with AMD Instinct MI355X GPUs to deliver high performance per watt for inference, simulation, and advanced modeling while helping operators manage power and cost.

Digital Twins for Intelligent, AI-Driven Network Operations

XL44-SX2-AAS1

Digital twins are becoming a critical tool for AI-driven network operations. Gigabyte enables high-fidelity, real-time simulation with the XL44-SX2-AAS1, built on Nvidia MGX architecture and configured with 8 Nvidia RTX PRO 6000 Blackwell Server Edition GPUs. With 800Gb/s of networking bandwidth via the Nvidia ConnectX-8 SuperNIC and PCIe Gen6 connectivity, the platform delivers higher throughput and better scale-out capability, making it well suited to round-the-clock, high-availability workloads.

Enabling Efficient Cloud Hosting at Telco Scale

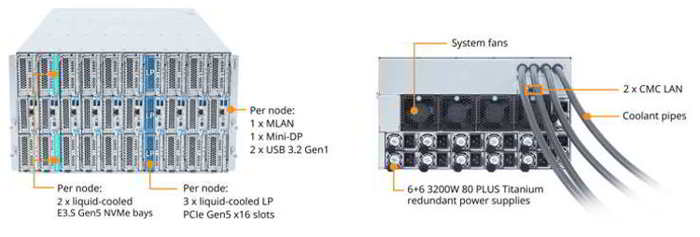

B683-Z80-LAS1 front and rear

As telecom operators expand into AI cloud and neocloud services, Gigabyte's high-density blade servers provide a scalable foundation for AI and HPC hosting. At MWC, the company introduces the B683-Z80-LAS1, a 6U, 10-node blade system powered by AMD EPYC processors with a 1:1 CPU-to-NIC configuration. Its full-system direct liquid cooling uses integrated piping to remove over 90% of system heat, supporting sustainable green computing and improved power usage effectiveness (PUE).

Extending AI from Core to Edge

W775-V10-L01

AI transformation extends to the network edge, where low latency, data proximity and privacy are essential. The firm’s AI workstations enable local AI development, inference, and deployment across enterprise and private network environments. The W775-V10-L01, powered by the Nvidia GB300 Grace Blackwell Ultra Desktop Superchip, supports up to 775GB of coherent memory for large-scale AI workloads at the desk. Additional platforms, including AMD EPYC-based and Intel Xeon-based workstations, provide flexibility for diverse edge AI and private network scenarios. The compact AI TOP ATOM, delivering up to 1 petaFLOP of AI compute, further enables rapid prototyping and edge AI deployment in a palm-sized form factor.
