Micron 9400 NVMe SSDs Explore Big Accelerator Memory Using Nvidia Technology

Training for 100 iterations using a single Nvidia A100 80GB Tensor Core GPU and a varied number of SSDs to provide a range of results

This is a Press Release edited by StorageNewsletter.com on February 22, 2024 at 2:01 pm

By John Mazzie, systems performance engineer, Micron Technology, Inc.

Training datasets continue to grow, and models now exceed billions of parameters. While some models fit completely in system memory, larger models do not. In this situation, data loaders need to access models located on flash storage through various methods. One such method is a memory-mapped file stored on SSDs. This allows the data loader to access the file as if it were in memory, but the overhead of the CPU and software stack reduces the performance of the training system. This is where Big Accelerator Memory (BaM)* and the GPU-Initiated Direct Storage (GIDS)* data loader come in.
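To make the memory-mapped approach concrete, here is a minimal sketch using only the Python standard library. It is not the DGL data loader or anything used in the test; the file layout, `FEATURE_DIM`, and the helper names `write_features` and `gather_features` are assumptions chosen for illustration. It shows how a loader can index a file on an SSD as if it were an in-memory array, with every access still passing through the CPU and the OS page cache.

```python
import mmap
import os
import struct
import tempfile

FEATURE_DIM = 4              # hypothetical feature width, for illustration only
ROW_BYTES = FEATURE_DIM * 4  # each feature is a 4-byte float32

def write_features(path, num_nodes):
    """Write a toy feature table: row i holds FEATURE_DIM copies of float(i)."""
    with open(path, "wb") as f:
        for node in range(num_nodes):
            f.write(struct.pack(f"<{FEATURE_DIM}f", *([float(node)] * FEATURE_DIM)))

def gather_features(path, node_ids):
    """Fetch the rows for node_ids through a read-only memory map."""
    with open(path, "rb") as f, mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        rows = []
        for node in node_ids:
            start = node * ROW_BYTES
            # Slicing the map triggers a page fault and a CPU-driven read on a miss.
            rows.append(struct.unpack(f"<{FEATURE_DIM}f", mm[start:start + ROW_BYTES]))
        return rows

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "features.bin")
    write_features(path, num_nodes=1000)
    sampled = gather_features(path, [3, 997, 42])
    print(sampled[0])
```

The random, fine-grained row lookups in `gather_features` are the access pattern that a CPU-mediated path handles poorly at scale.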

Micron 9400 NVMe SSD

What are BaM and GIDS?


BaM is a system architecture that utilizes the low latency, high throughput, large density, and endurance of SSDs. Its goal is to provide efficient abstractions that enable GPU threads to make fine-grained accesses to datasets on SSDs and achieve much higher performance than solutions that require the CPU(s) to issue storage requests on behalf of the GPUs. BaM acceleration uses a custom storage driver that is designed to let the inherent parallelism of GPUs access storage devices directly. BaM differs from Nvidia Magnum IO GPUDirect Storage (GDS) in that it doesn’t rely on the CPU to prepare the communication from GPU to SSD.

Micron has done previous work with Nvidia GDS as noted below:

The GIDS dataloader is built on the BaM subsystem to address memory capacity requirements for GPU-accelerated Graph Neural Network (GNN) training while also masking storage latency. It does this by storing the feature data of the graph on the SSD, since this data is typically the largest part of the total graph dataset for large-scale graphs. The graph structure data, which is typically much smaller compared to the feature data, is pinned into system memory to enable rapid GPU graph sampling. Lastly, the GIDS dataloader allocates a software-defined cache on the GPU memory for recently accessed nodes in order to reduce storage accesses.
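The idea of a software-defined cache for recently accessed nodes can be sketched in a few lines. This is a toy, host-side illustration only: the real GIDS cache lives in GPU memory, and the least-recently-used eviction policy, the class name `NodeFeatureCache`, and the `slow_storage_read` stand-in are all assumptions made for this example, not details taken from the GIDS implementation.

```python
from collections import OrderedDict

class NodeFeatureCache:
    """Toy LRU cache keyed by node ID (an assumed policy, for illustration)."""

    def __init__(self, capacity, fetch_from_storage):
        self.capacity = capacity
        self.fetch = fetch_from_storage
        self.entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, node_id):
        if node_id in self.entries:
            # Hit: mark the node as most recently used and skip storage.
            self.entries.move_to_end(node_id)
            self.hits += 1
            return self.entries[node_id]
        # Miss: read from storage, then evict the oldest entry if over capacity.
        self.misses += 1
        value = self.fetch(node_id)
        self.entries[node_id] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)
        return value

storage_reads = []

def slow_storage_read(node_id):
    """Stand-in for an SSD read; records which nodes actually hit storage."""
    storage_reads.append(node_id)
    return [float(node_id)] * 4

cache = NodeFeatureCache(capacity=2, fetch_from_storage=slow_storage_read)
for node in [1, 2, 1, 3, 2]:
    cache.get(node)
print(cache.hits, cache.misses, storage_reads)
```

Every hit is one storage access avoided, which is why reuse among sampled nodes translates directly into fewer SSD reads.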

Graph neural network training using GIDS

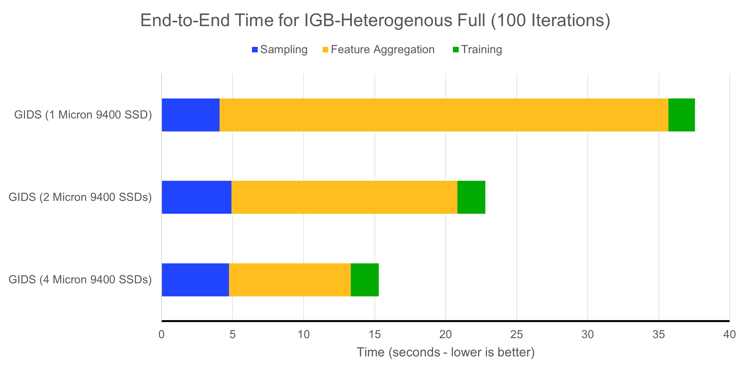

To show the benefits of BaM and GIDS, we performed GNN training using the Illinois Graph Benchmark (IGB) heterogeneous full dataset. This dataset is 2.28TB in size and would not fit into most platforms’ system memory. We timed the training for 100 iterations using a single Nvidia A100 80GB Tensor Core GPU and varied the number of SSDs to provide a range of results, as seen in Figure 1 and Table 1.

Figure 1: GIDS training time for IGB-Heterogeneous full dataset – 100 iterations

Table 1: GIDS training time for IGB-Heterogeneous full dataset – 100 iterations

The first part of the training is graph sampling, which the GPU performs by accessing the graph structure data in system memory (seen in blue). This value varies little across the different test configurations because the structure stored in system memory does not change between these tests.

Another part is the actual training time (seen at the far right in green). This part is highly dependent on the GPU, and we can see that this does not change much between the multiple test configurations as expected.

The most important section, where we see the largest difference, is feature aggregation (shown in gold). As the feature data is stored on the Micron 9400 SSDs for this system, we see that scaling from 1 to 4 Micron 9400 SSDs improves (reduces) the feature aggregation processing time. Feature aggregation improves by 3.68x as we scale from 1 to 4 SSDs.

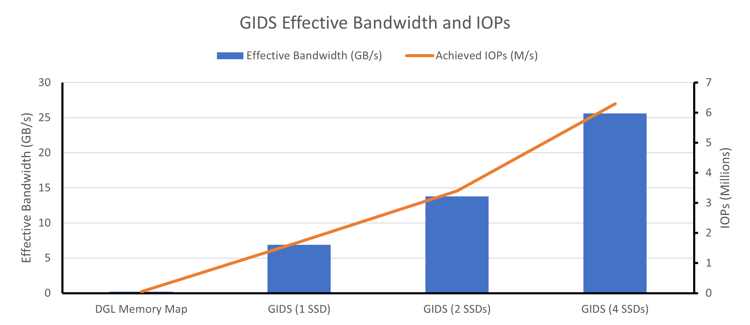

We also included a baseline calculation, which uses a memory map abstraction and the Deep Graph Library (DGL) data loader to access the feature data. Because this method of accessing the feature data requires the use of the CPU software stack instead of direct access by the GPU, we can see how inefficient the CPU software stack is at keeping the GPU saturated during training. The feature aggregation improvement vs. baseline is 35.76x for 1 Micron 9400 NVMe SSD using GIDS and 131.87x for 4 Micron 9400 NVMe SSDs. Another view of this data can be seen in Figure 2 and Table 2, which show the effective bandwidth and IO/s during these tests.
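As a quick consistency check on the figures quoted above, the ratio of the two speedups over baseline should match the reported 1-to-4 SSD scaling factor. The short calculation below uses only numbers from the text; the "scaling efficiency" figure is a derived quantity added here for illustration, not a result reported in the test.

```python
# Feature aggregation speedups vs. the memory-mapped DGL baseline (from the text).
speedup_1_ssd = 35.76
speedup_4_ssd = 131.87
num_ssds = 4

# Ratio of the two speedups: how much faster 4 SSDs are than 1 SSD.
scaling = speedup_4_ssd / speedup_1_ssd

# Fraction of ideal linear scaling achieved (derived here, not reported in the source).
efficiency = scaling / num_ssds

print(f"1 -> 4 SSD scaling: {scaling:.2f}x")
print(f"scaling efficiency: {efficiency:.0%}")
```

The ratio comes out within rounding of the 3.68x scaling reported for feature aggregation, so the two sets of numbers agree, and the scaling is close to linear in the number of SSDs.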

Figure 2: Effective bandwidth and IO/s of GIDS training vs baseline

Table 2: Effective bandwidth and IO/s of GIDS training vs baseline

As datasets continue to grow, we can see the need for a shift in paradigm in order to train these models in a reasonable amount of time and to take advantage of the improvements provided by leading GPUs. BaM and GIDS are a great starting point, and we look forward to working with more of these types of systems in the future.

Test system:

| Component | Details |
|---|---|
| Server | Supermicro AS 4124GS-TNR |
| CPU | |
| Memory | 1TB Micron DDR4-3200 |
| GPU | Memory clock: 1,512MHz; SM clock: 1,410MHz |
| SSDs | 4x Micron 9400 MAX 6.4TB |
| OS | Ubuntu 22.04 LTS, Kernel 5.15.0.86 |
| Nvidia driver | 535.113.01 |
| Software | CUDA 12.2, DGL 1.1.2, PyTorch 2.1 running in Nvidia Docker container |

Reference links:

Big Accelerator Memory paper and GitHub

GPU-Initiated On-Demand High-Throughput Storage Access in the BaM System Architecture (arxiv.org)

GitHub – ZaidQureshi/bam

GPU Initiated Direct Storage paper and GitHub

Accelerating Sampling and Aggregation Operations in GNN Frameworks with GPU Initiated Direct Storage Accesses (arxiv.org)

GitHub – jeongminpark417/GIDS

GitHub – IllinoisGraphBenchmark/IGB-Datasets: Largest real-world open-source graph dataset – Work done under IBM-Illinois Discovery Accelerator Institute and Amazon Research Awards and in collaboration with Nvidia Research.

* Note: Nvidia Big Accelerator Memory (BaM) and Nvidia GPU Initiated Direct Storage (GIDS) dataloader are prototype projects from Nvidia Research and are not intended for general release.
