GigaOm Radar for Primary Storage for Midsize Businesses

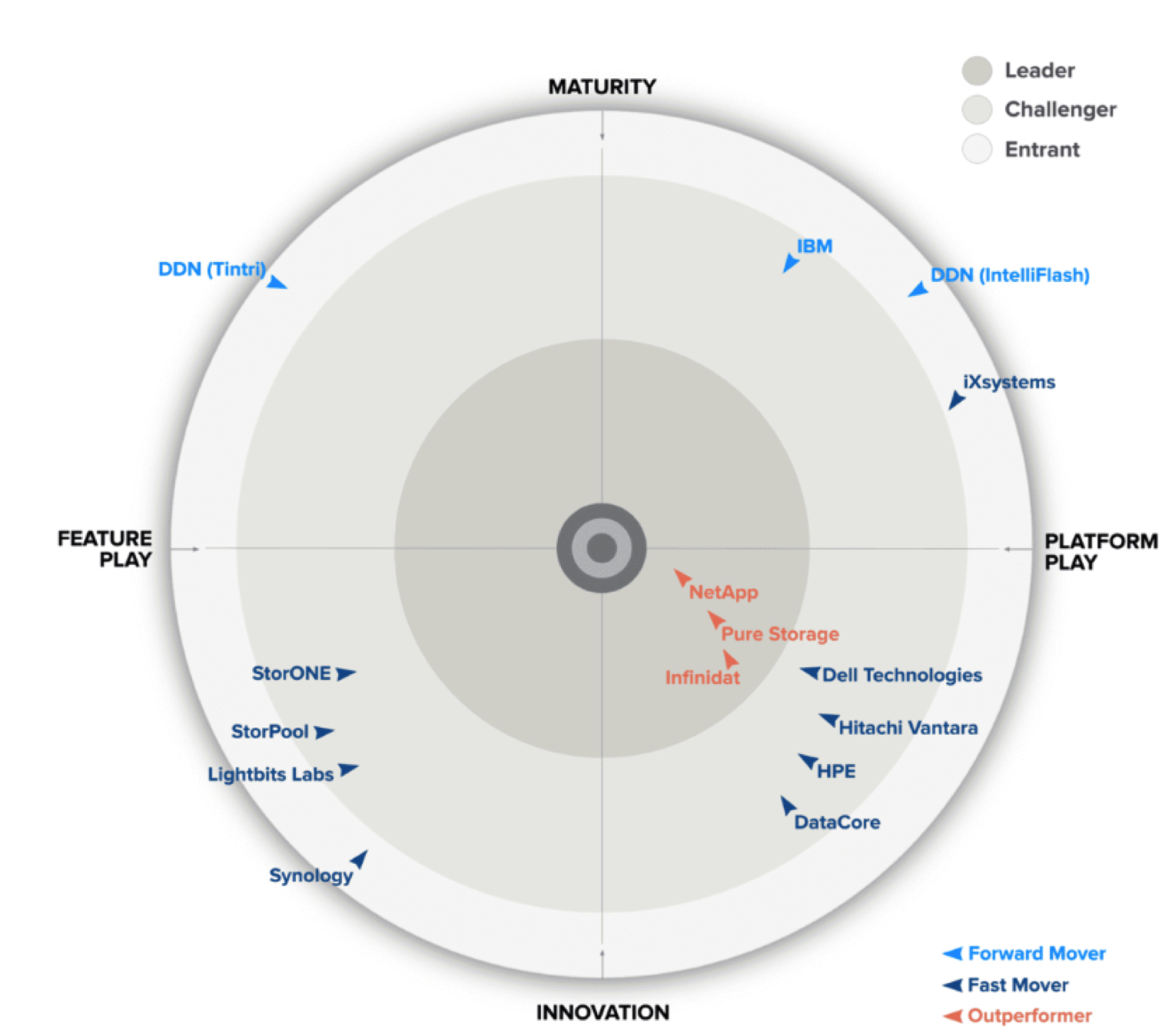

Vendors selected and analyzed: NetApp, Pure Storage and Infinidat as leaders; IBM, Dell, Hitachi Vantara, HPE, DataCore, StorONE, StorPool and Lightbits Labs as challengers

This is a Press Release edited by StorageNewsletter.com on February 12, 2024 at 2:02 pm

Published on January 26, 2024, this market report was written by:

Max Mortillaro, an independent industry analyst with a focus on storage, multi-cloud and hybrid cloud, data management, and data protection, and

Arjan Timmerman, an independent industry analyst and consultant with a focus on helping enterprises on their road to the cloud (multi/hybrid and on-prem), data management, storage, data protection, network, and security.

GigaOm Radar for Primary Storage for Midsize Businesses v4.0

1. Executive Summary

Primary storage systems for midsize businesses have adapted quickly to new needs and business requirements, with data now accessed from both on-premises and cloud applications. We’re in a transition phase, moving from storage systems designed to be deployed in data centers to hybrid and multicloud solutions, with similar functionalities provided on physical or virtual appliances, as well as through managed services.

The concepts of primary storage, data, and workloads have changed over the past few years. Mission- and business-critical functions in enterprise organizations used to be concentrated in a few monolithic applications based on traditional relational databases. In that scenario, block storage was often synonymous with primary storage, and performance, availability, and resiliency were prioritized, usually at the expense of flexibility, ease of use, and low cost.

Now, after the virtualization wave and the exponential growth of microservices and container-based applications, organizations are shifting their focus to AI-based analytics, self-driven storage, improved automation, and deeper Kubernetes integration. In addition, the prevalence of cyberthreats such as ransomware attacks requires organizations to implement a multilayered defense strategy that encompasses secure storage. To prevent downtime and data loss, protecting data assets at the source (in production and on primary storage systems) becomes a key aspect of any security strategy.

Furthermore, the thirst for performance is still strong, which means support for new storage types, including emerging CXL-compatible persistent memory types and NVMe transport protocols, is now being looked at with more interest.

Moreover, organizations have not lost their appetite for cost optimization. When it comes to TCO and flexibility, the emergence of STaaS means that cloud consumption models are increasingly being sought.

When it comes to modern storage, and block storage in particular, flash memory and high-speed Ethernet networks have commoditized performance and reduced costs, allowing more freedom in system design. FC remains a core component in many storage infrastructures for legacy reasons only.

At the same time, enterprises are working to align storage with broader infrastructure strategies, which address issues such as:

• Better infrastructure agility for speeding up response to business needs.

• Improved data mobility and integration with the cloud.

• Support for a larger number of concurrent applications and workloads on a single system.

• Simplified infrastructure.

• Automation and orchestration to speed up and scale operations.

• Drastic reduction of TCO along with a significant increase in the capacity under management per sysadmin.

• Better overall energy efficiency enabling achievement of environmental, social, and corporate governance (ESG) objectives and reduction of energy bills, especially when operating at scale.

These efforts have contributed to the increasing number of solutions as start-ups and established vendors move to address these needs. Traditional high-end and mid-range storage arrays have been joined by software-defined and specialized solutions, all aimed at serving similar market segments but differentiated by the focus they place on the various points described above. A one-size-fits-all primary storage solution doesn’t exist.

For this evaluation, we have 2 Radar reports: one on primary storage for large enterprises and the other on primary storage for midsize businesses.

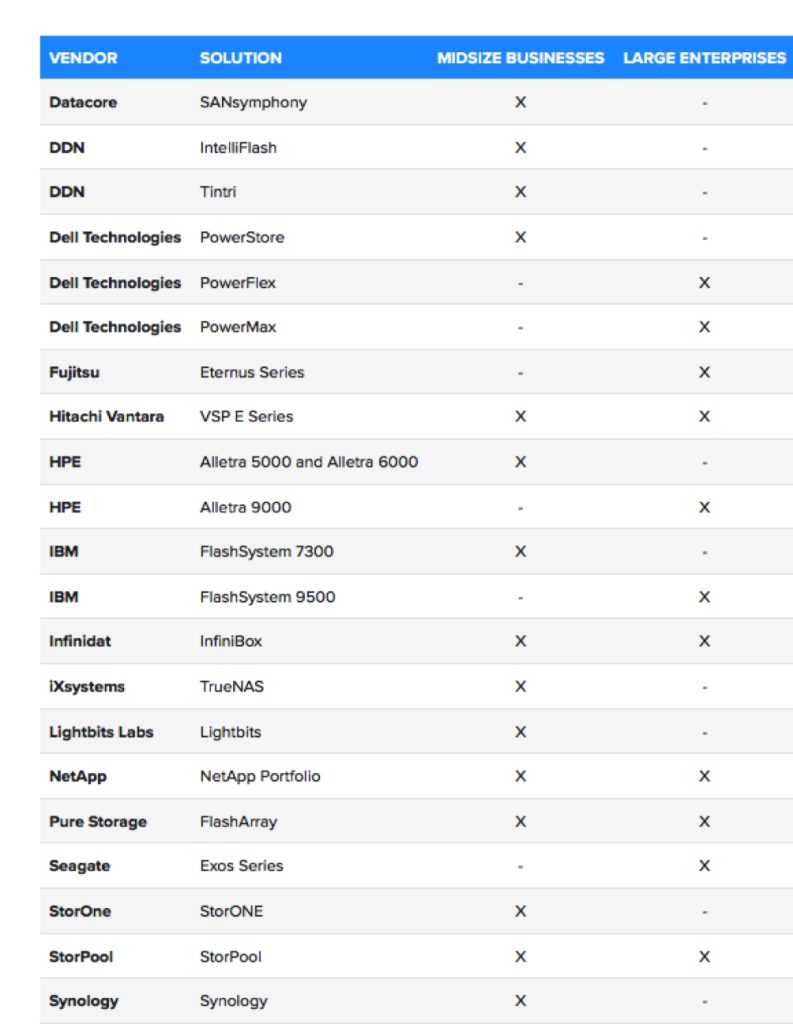

Table 1. Primary Storage Solutions for Midsize and Large Enterprises

This is our 4th year evaluating the primary storage space in the context of our Key Criteria and Radar reports. This report builds on our previous analysis and considers how the market has evolved over the last year.

This report examines 15 of the top primary storage solutions for midsize businesses in the market and compares offerings vs. the capabilities (table stakes, key features, and emerging features) and non-functional requirements (business criteria) outlined in the companion Key Criteria report. Together, these reports provide an overview of the category and its underlying technology, identify leading primary storage offerings, and help decision-makers evaluate these solutions so they can make a more informed investment decision.

2. Market Categories and Deployment Types

To help prospective customers find the best fit for their use case and business requirements, we assess how well primary storage solutions are designed to serve specific target markets and deployment models (Table 2).

For this report, we recognize the following market segments:

• Small businesses: In this category, we assess solutions on their ability to meet the needs of small businesses, for whom ease of use and $/GB are important areas of focus.

• Midsize businesses: In this category, we assess solutions on their ability to meet the needs of midsize companies. Also assessed are departmental use cases in large enterprises, where ease of use and deployment are more important than extensive management functionality, data mobility, and feature set.

In addition, we recognize the following deployment models:

• Hardware appliance: These solutions are provided as a self-contained physical device with all the components necessary to deliver primary storage capabilities. The device is fully supported by the vendor, and other than managing the platform, the customer needs only to apply hotfixes or patches. This deployment model delivers simplicity at the expense of flexibility.

• SDS: These solutions are meant to be deployed on commodity servers on-premises or in the cloud, allowing organizations to build hybrid or multicloud storage infrastructures. This option provides more flexibility in terms of deployment, cost, and hardware choice, but it can be more complex to deploy and manage.

Table 2. Vendor Positioning: Target Market and Deployment Model

Table 2 components are evaluated in a binary yes/no manner and do not factor into a vendor’s designation as a Leader, Challenger, or Entrant on the Radar chart (Figure 1).

“Target market” reflects which use cases each solution is recommended for, not simply whether it can be used by that group. For example, if it’s possible for an SMB to use a solution but doing so would be cost-prohibitive, that solution would be rated “no” for that market segment.

3. Decision Criteria Comparison

All solutions included in this Radar report meet the following table stakes (capabilities widely adopted and well implemented in the sector):

• Scale-up or scale-out

• Traditional vs. SDS

• Integration with upper layers

• Data protection

• Basic data services

• Resiliency and availability

• System analytics

• NVMe

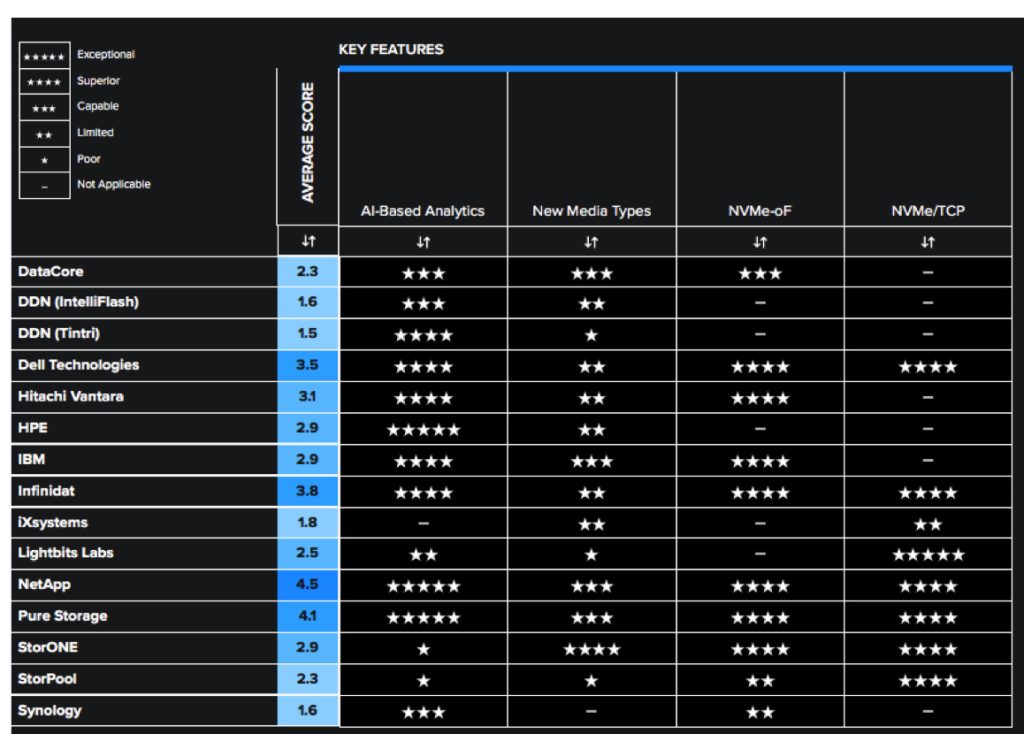

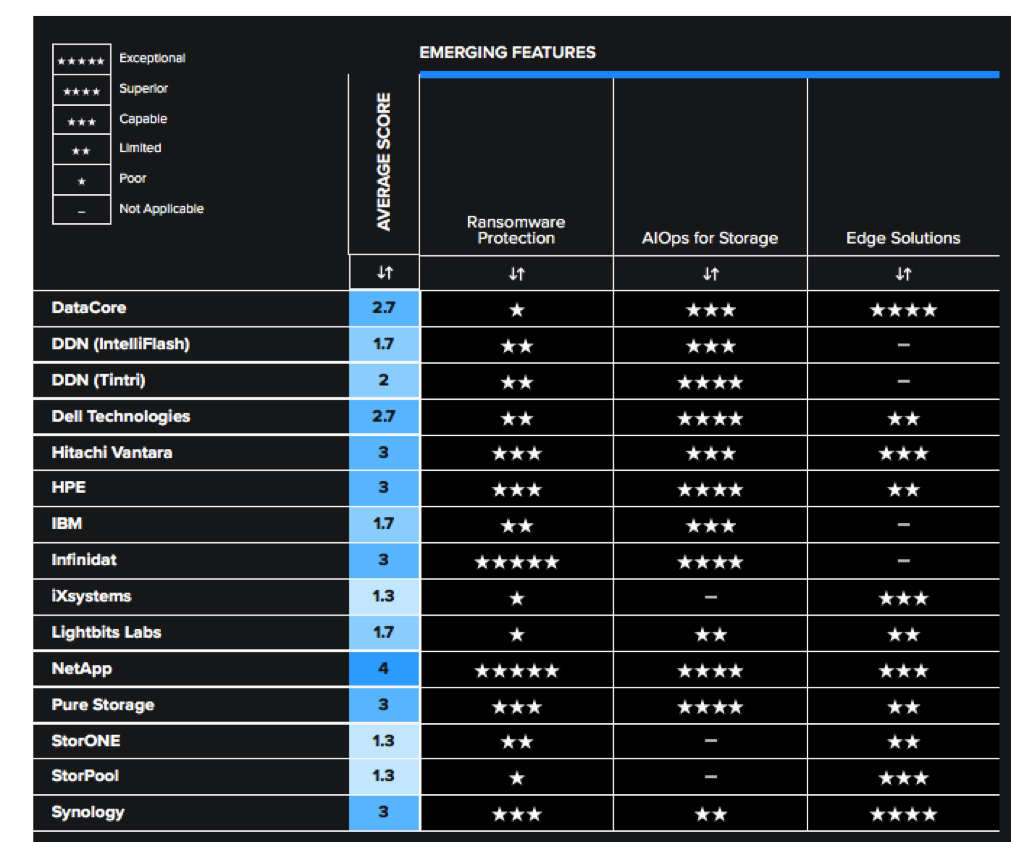

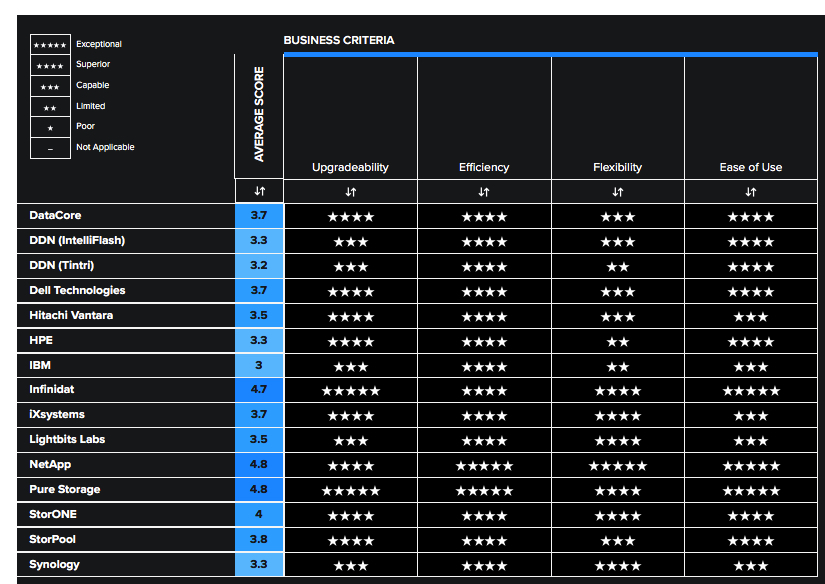

Tables 3, 4, and 5 summarize how each vendor included in this research performs in the areas we consider differentiating and critical in this sector. The objective is to give the reader a snapshot of the technical capabilities of available solutions, define the perimeter of the relevant market space, and gauge the potential impact on the business.

• Key features differentiate solutions, outlining the primary criteria to be considered when evaluating a primary storage solution.

• Emerging features show how well each vendor is implementing capabilities that are not yet mainstream but are expected to become more widespread and compelling within the next 12 to 18 months.

• Business criteria provide insight into the non-functional requirements that factor into a purchase decision and determine a solution’s impact on an organization.

These decision criteria are summarized below. More detailed descriptions can be found in the corresponding report, GigaOm Key Criteria for Evaluating Primary Storage Solutions.

Key Features

• AI-based analytics: The most advanced AI-based analytics is capable of collecting data at a massive scale and can be used to log data from sensors within a storage system, at a rate of up to several million data points per system per day. This data can then be consolidated in large cloud repositories and analyzed using ML and other advanced techniques.

• New media types: Finding the right combination of performance and capacity for block storage remains challenging. HDDs still provide the best $/GB cost ratio, but data access optimization schemes intended to boost their performance in standard enterprise arrays make them overly complex.

• NVMe-oF: It is very beneficial for applications that need absolute performance, including databases, big data analytics, and more generally, all tier-0 applications.

• NVMe/TCP: The next step in the evolution of NVMe is NVMe on TCP/IP. This implementation of the NVMe protocol loses some of the latency benefits of NVMe-oF, but it adds simplicity and flexibility and is able to take advantage of less-expensive Ethernet equipment.

• Cloud integration: Organizations of all sizes are taking advantage of this for modern applications that leverage native services, but they also need to move data to and from their premises to support other applications in a hybrid fashion, integrating block storage with public and private cloud services.

• API and automation tools: Decision-makers should stay abreast of the options offered by storage systems around API compatibility and command line interface (CLI) features. They should seek functional parity among GUIs, CLIs, and APIs.

• Kubernetes integration: Kubernetes is becoming the de facto standard for container application orchestration, and the community has found common ground on how to deal with persistent storage resources in a Kubernetes environment. The CSI spec enables vendors to create plug-ins that allow container orchestrators to operate seamlessly with the resources provided by the storage system.

• STaaS: Some vendors are proposing consumption models that follow cloud economics. Rather than pay up front with Capex funds, customers can instead consume capacity on demand according to a pay-as-you-go model based on recurrent subscription fees that reflect actual consumption.

Table 3. Key Features Comparison

Emerging Features

• Ransomware protection: It is a complex discipline requiring a combination of prevention, proactive detection, and mitigation and recovery techniques. Nevertheless, organizations should not disregard built-in ransomware protection capabilities available on primary storage systems, since they can potentially reduce downtime or even thwart an attack.

• AIOps for storage: Storage vendors have been collecting data for many years now and are using it to train their ML systems. These systems are becoming more autonomous and more directed in their advice, offering steps that are consistent with best practices suggested by the vendors.

• Edge solutions: As primary workloads gradually shift from the data center to the cloud, the edge of the network is becoming more important. Organizations are deploying more small-scale storage solutions at the edge, and those solutions require better fleet management capabilities that can handle zero-touch deployment, central policy-based management, and security and ransomware protection.

Table 4. Emerging Features Comparison

Business Criteria

• Upgradeability: Choosing systems that can last longer without dramatic cost increases after the first few years in production helps keep costs from escalating while avoiding unnecessary data migrations and forklift upgrades.

• Efficiency: Solutions that implement an array of data reduction techniques along with tiering capabilities are best suited to tackle the data growth challenge. Besides capacity and tiering aspects, environmental efficiency is also being considered with greater scrutiny.

• Flexibility: In contrast to past models, in which highly siloed stacks and storage systems served a limited number of applications and workloads, today’s storage systems tend to be shared by a large number of servers, VMs, and applications.

• Ease of use: In many IT organizations, system administrators manage several aspects of the infrastructure. They become generalists without the time or skills to operate complex, difficult-to-use systems. GUIs and dashboards are usually welcomed, especially when supported by predictive analytics systems for troubleshooting and capacity planning.

• Cost per transaction ($/IO/s): Even though most primary block storage systems can perform incredibly well, what this performance actually costs determines the value of the entire system. Instead of comparing prices, organizations should track the price/IO/s metric because it gives a better idea of the trade-offs among different systems when data reduction and other services are enabled.

• Cost of storage ($/GB): The $/GB metric compares systems in terms of the capacity exposed to the clients and, by extension, the efficiency of data reduction mechanisms and, more generally, the way media is utilized. Combining $/GB and $/IO/s metrics can produce a pretty good idea of the overall efficiency of a system and, in particular, of the overall efficiency of its performance and capacity.
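To make the last two criteria concrete, the short Python sketch below computes $/GB and $/IO/s for two quotes. Everything in it is an assumption made for illustration: the `StorageQuote` class, the system names, and all prices, capacities, reduction ratios, and IO/s figures are invented and do not come from the report or from any vendor. It also assumes that "capacity exposed to the clients" means raw capacity multiplied by the data reduction ratio measured on the buyer's own data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageQuote:
    """Hypothetical vendor quote; every figure used below is invented."""
    name: str
    price_usd: float           # total acquisition price
    raw_capacity_gb: float     # physical capacity before data reduction
    reduction_ratio: float     # 3.0 means 3:1, measured on the buyer's data
    iops_with_services: float  # sustained IO/s with data reduction enabled

    @property
    def effective_capacity_gb(self) -> float:
        # Assumption: capacity exposed to clients = raw capacity x reduction ratio.
        return self.raw_capacity_gb * self.reduction_ratio

    @property
    def cost_per_gb(self) -> float:
        return self.price_usd / self.effective_capacity_gb

    @property
    def cost_per_iops(self) -> float:
        return self.price_usd / self.iops_with_services


# Two illustrative quotes, not real products or prices.
quotes = [
    StorageQuote("system_a", 120_000, 100_000, 3.0, 400_000),
    StorageQuote("system_b", 90_000, 150_000, 1.5, 150_000),
]

for q in quotes:
    print(f"{q.name}: {q.cost_per_gb:.2f} $/GB, {q.cost_per_iops:.2f} $/IO/s")
```

With these invented figures, both systems come out at the same $/GB, yet the cheaper quote costs twice as much per IO/s once data reduction is enabled. That is the kind of trade-off a plain price comparison would hide.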

Table 5. Business Criteria Comparison

4. GigaOm Radar

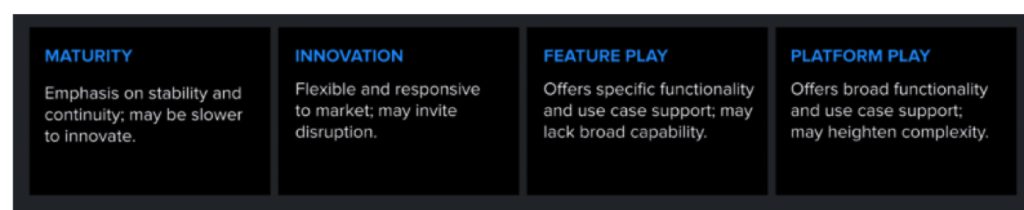

The GigaOm Radar plots vendor solutions across a series of concentric rings, with those set closer to the center judged to be of higher overall value. The chart characterizes each vendor on 2 axes (balancing Maturity vs. Innovation and Feature Play vs. Platform Play) while providing an arrowhead that projects each solution’s evolution over the coming 12 to 18 months.

As you can see in the Radar chart in Figure 1, there are 3 vendor clusters across the Radar, one in the Innovation/Platform Play quadrant, one in the Innovation/Feature Play quadrant, and one in the Maturity/Platform Play quadrant.

There’s also a single vendor in the Maturity/Feature Play area.

The large cluster in the Innovation/Platform Play quadrant can be subdivided into 2 groups. The first one includes Infinidat, NetApp, and Pure Storage, all identified as Outperformers. The versatility of the solution and pace of execution vs. strong roadmaps are differentiators for the vendors in this first group compared to the second.

• Infinidat focuses on massive capacity and scalability with its InfiniBox rack-scale solution and offers the InfiniBox SSA II all-flash architecture designed for mission-critical workloads. Moreover, the company made significant improvements in ransomware protection and cyber resiliency, and in 2023 it made its initial entry into hybrid cloud with InfuzeOS Cloud Edition for AWS, a direct port of the OS used in its on-premises products.

• NetApp delivers outstanding capabilities both on-premises and in the cloud (as a 1st-party offering) with solutions architected around its Ontap OS. The recently launched BlueXP service delivers thorough and seamless data mobility and data management capabilities, providing an unmatched, best-in-class experience. The company launched QLC-based systems (AFF C-Series) at the beginning of 2023.

• Pure Storage delivers no-compromise all-flash storage in this segment with FlashArray//XL and FlashArray//X, offering compelling storage capacity and density, particularly with the //XL series. It provides best-in-class, Portworx-based Kubernetes support, a proven non-disruptive upgrade architecture, and a choice of flexible consumption models, including STaaS options. The portfolio has been expanded to include //E series systems based on QLC flash and optimized for capacity.

The second group of innovators consists of Fast Movers. The solutions are innovative in their nature, but either their pace of execution is more moderate, or their roadmap is less aggressive and disruptive than those in the first group.

This group consists of DataCore, Dell Technologies, Hitachi Vantara, and HPE:

• DataCore has improved its positioning over last year thanks to an innovative and cost-free cloud-based AI analytics platform. This platform complements a broad set of data services, now expanded with adaptive data placement, which uniquely combines the advantages of auto-tiering with de-dupe and compression.

• Dell Technologies continues to innovate with its comprehensive PowerStore platform, combining rich data services, the ML-based CloudIQ AIOps platform, and scalability options that make PowerStore perfectly capable of serving a spectrum of workloads.

• Hitachi Vantara, known for its dependable storage solutions, has introduced new appliances with better scalability and now also supports non-disruptive upgrades. It is improving its STaaS offering, has closed the gap on Kubernetes integration, and offers multiple management options including ransomware detection and mitigation capabilities.

• Focusing primarily on HPE GreenLake, HPE proposes its Alletra 5000 and Alletra 6000 storage arrays for midsize businesses, combining advanced data reduction mechanisms, the excellent HPE InfoSight AI-based analytics platform, and a SaaS intent-based provisioning solution. It has moved from the Maturity to the Innovation side thanks to the introduction of a modern storage architecture, Alletra Storage MP, that will gradually replace the existing HPE Alletra product line.

In the Maturity/Platform Play quadrant are DDN, IBM, and iXSystems:

• DDN’s Intelliflash solution puts it in this category. DDN was previously evaluated across its entire portfolio, but this year’s edition of the primary storage Radar evaluates Intelliflash and Tintri solutions separately. Noteworthy features of Intelliflash include AI-based analytics; the solution is otherwise mature and proven, focusing on performance and stability improvements.

• IBM’s primary storage portfolio is based on the modern Spectrum Virtualize architecture, offering all-flash and hybrid flash options, and backed by the company’s AI-based analytics suite, thus delivering robust capabilities to midsize organizations. The company also offers STaaS options and ransomware protection features.

• iXSystems’ TrueNAS solution offers great scalability, flexible deployment models, and block, file, and object support. The company has a good roadmap ahead, moving it towards the Innovation half of the Radar.

In the Innovation/Feature Play quadrant are Lightbits Labs, StorONE, StorPool, and Synology:

• Lightbits Labs proposes a best-in-class implementation of the NVMe/TCP protocol, providing high performance and low latency for critical applications, whether for midsize organizations or large enterprise departmental use cases, with the ability to run in the cloud.

• StorONE has an interesting SDS solution focused on performance, with a good set of capabilities. The company has made improvements in ransomware protection and other areas.

• StorPool provides a block-based, software-defined primary storage solution with support for NVMe/TCP targeting mission-critical workloads. It can run on AWS and includes an innovative, albeit statistics-based, analytics platform. Previously a Maturity/Feature Play solution, StorPool has moved to the Innovation half of the Radar thanks to its continued improvements.

• Synology is a new vendor in this year’s Radar. It has a portfolio of appliances with outstanding support for edge storage use cases, a unified management platform, and plans to introduce support for key technologies (including NVMe) in 2024.

Finally, one Maturity/Feature Play solution is present in this Radar: Tintri by DDN. Tintri was rated in the overall DDN portfolio in previous editions and is thus a new vendor and Entrant in this edition of the primary storage Radar. It is specifically designed to support virtualization and VDI workloads as well as databases. The solution sports a compelling AI-based analytics engine and excellent usability but cannot support general purpose block or file workloads.

In reviewing solutions, it’s important to keep in mind that there are no universal “best” or “worst” offerings; there are aspects of every solution that might make it a better or worse fit for specific customer requirements. Prospective customers should consider their current and future needs when comparing solutions and vendor roadmaps.

5. Solution Insights

DataCore, SANsymphony

DataCore is a company with a portfolio of SDS (SANsymphony), object storage (Swarm), and container-native storage (openEBS and openEBS Pro). The Swarm acquisition and the hiring of open source openEBS project engineers in recent years are in line with the company’s goal of building DataCore.NEXT, the realization of its vision to deliver data capabilities across core, edge, and cloud.

With SANsymphony, the company offers a software-defined block storage architecture that provides a rich set of data services. The solution is built on Windows and supports all locally attached media types, whether PCIe/NVMe-based or connected through SCSI/SATA/SAS buses. It also pools third-party SAN storage connected via iSCSI and FC and enables auto-tiering among all the diverse hardware options.

SANsymphony is interesting because it supports 2 different deployment topologies. The 1st one is for SAN consolidation use cases: It uses either iSCSI or FC SAN protocols and acts as a front end to existing storage, allowing tiering and synchronous replication. The 2nd one follows an HCI architecture in which cluster nodes contribute local storage to the distributed cluster, and it supports vSphere (including vVols) or Hyper-V.

SANsymphony provides rich data services: snapshots, replication, and site recovery, for example, and the solution implements CDP and synchronous mirroring, as well as encryption. Storage efficiencies include thin provisioning, de-dupe and compression, and auto-tiering. For performance optimization, caching, parallel I/O, random write accelerator, and adaptive data placement are complemented by storage QoS.

In addition to SANsymphony’s management interface, organizations can also benefit from the SaaS DataCore Insight Services (DIS), which is free of charge to the company’s term-license customers. DIS is an AI/ML-based predictive analytics platform that analyzes telemetry data, detects early signs of potential issues, and provides mitigation steps and recommendations. DIS can also provide optimization recommendations and capacity forecasting based on usage trends. The solution includes plug-ins, PowerShell cmdlets, and a REST API.

Strengths

SANsymphony offers multiple deployment options and a rich set of data services combined with an innovative and cost-free cloud-based AI analytics platform. Multiple performance improvements were made in 2023, along with new policy-based dynamic relocation of data across tiers, with adaptive de-dupe and compression that is transparent to apps. The roadmap is promising.

Challenges

Support for NVMe-oF (although successfully demonstrated) and NVMe/TCP is currently absent due to lack of interest from DataCore’s customer base; this may make the solution less suitable for supporting performance-oriented workloads. While this is not an immediate concern, it may become a point to be addressed as adoption of NVMe storage grows.

Purchase Considerations

Delivered as an SDS solution, SANsymphony provides organizations with ample flexibility in terms of hardware choices. Even though it can support multiple use cases, the current absence of NVMe-oF (albeit successfully demonstrated) and NVMe/TCP is an aspect to consider, especially for mission-critical, performance-oriented workloads.

Overall, the solution is capable of covering multiple use cases and verticals. It also supports various deployment models by which storage can be delivered in the data center, at the edge (remote office/branch office use cases, starting at 2 nodes), or at DR sites. Clusters can scale out from 2 to 64 nodes and from a few terabytes to petabyte scale.

Radar Chart Overview

Overall, SANsymphony is a capable solution that delivers across nearly all key features, but most of its ratings are average, resulting in its positioning as a Challenger in the Innovation/Platform Play quadrant. Its dynamic roadmap should improve the solution’s positioning in the next edition of this Radar.

DDN, Intelliflash

DDN provides storage solutions for next-gen workloads such as HPC and AI. Over the years, it acquired a number of companies such as Tegile (Intelliflash), Tintri (VMstore), and Nexenta (NexentaStor) to build a portfolio of primary storage solutions. This year, the report covers DDN solutions in 2 separate capsules: one for Intelliflash, another one for Tintri VMstore. NexentaStor is no longer actively developed (except maintenance releases for existing customers) and has been removed from this year’s report.

Intelliflash is a unified flash storage platform that serves various use cases (SAN and NAS, or NAS) and supports virtualized (vSphere, Hyper-V, and Xen) and containerized workloads through a Kubernetes CSI plug-in. It is appliance-based, with all-flash NVMe and hybrid SSD/HDD configurations available, and it supports multiple protocols (FC, iSCSI, NFS, and SMB). Although it supports NVMe-based systems, NVMe-oF and NVMe/TCP protocols are not supported yet.

It provides intelligent tiering capabilities: within an appliance, multiple storage tiers can be combined into a single storage pool, and it takes care of automated data placement on the most appropriate tier.

In terms of data services, it supports space-efficient snapshots and clones, synchronous and asynchronous replication, in-line compression and de-dupe, and thin provisioning. The solution embeds data resiliency schemes in all components, including caching algorithms and metadata separation from the data to accelerate storage operations.

Organizations deploying Intelliflash can benefit from Analytics for Intelliflash, an AI-driven, cloud-based analytics platform that monitors performance, health, and usage metrics across Intelliflash systems. This platform aggregates telemetry from all Intelliflash installations and uses AI to monitor trends, detect issues before they become critical, proactively alert administrators, and predict future usage trends. Intelliflash also allows the firm to help customers further through proactive support, automated ticket opening, and replacement-part dispatching. Access to Analytics for Intelliflash is provided without additional cost.

Intelliflash provides cloud integration capabilities through the Intelliflash S3 Cloud Connector, which enables connectivity to cloud providers or any S3-compatible object storage system. It supports Kubernetes through a CSI plug-in and supports VMware Tanzu and OpenStack as well; it also includes a complete REST API.

Strengths

Key differentiators for Intelliflash include excellent AI-based analytics, intelligent tiering, and overall commendable data services such as de-dupe, compression, and so forth. Intelliflash delivers block and file storage, providing organizations with a versatile unified storage solution.

Challenges

There are no particular cloud integration capabilities: besides the Intelliflash S3 Cloud Connector, Intelliflash does not offer a cloud or virtual appliance deployment model. There’s no NVMe-oF and NVMe/TCP support.

Purchase Considerations

Organizations seeking hybrid deployment models by which the same block storage system runs on-premises or in the cloud should note that Intelliflash doesn’t offer such models. From a performance perspective, the lack of support for NVMe-oF and NVMe/TCP will limit applicability for performance-intensive workloads, even if the solution supports NVMe drives.

Intelliflash is available in all-flash (including NVMe flash) and hybrid configurations, allowing the solution to cover a wide range of use cases at different capacity and price points. DDN’s relevance in next-gen workload support (including AI and HPC) makes Intelliflash a common companion of other DDN solutions such as ExaScaler.

Radar Chart Overview

Intelliflash is a mature solution that includes average coverage of the evaluated key features and business criteria. Limited cloud integrations combined with the lack of support for QLC flash and the absence of NVMe-oF and NVMe/TCP protocols negatively impact its scoring and result in its placement in the Entrant ring in the Radar.

DDN, Tintri

DDN provides storage solutions for next-gen workloads such as HPC and AI. Over the years, it has acquired a number of companies such as Tegile (Intelliflash), Tintri (VMstore), and Nexenta (NexentaStor) to build a portfolio of primary storage solutions. This year, the report covers the company’s solutions in 2 separate capsules: one for Intelliflash, another one for Tintri VMstore. NexentaStor is no longer actively developed (except maintenance releases for existing customers) and has been removed from this year’s report.

VMstore is delivered as a hardware appliance (on the NVMe-based VMstore 7000 system), with a service-oriented architecture in which storage operations are related to applications instead of being tied to the usual storage constructs, such as RAID groups, LUNs, or datastores. In practice, VMstore manages the files that transform an application or workload into a storage object, enabling management and insight at the application level. VMstore does this natively on its scalable file system. Although NVMe drives are supported, the solution’s connectivity is based on the NFS protocol.

VMstore’s ML-based engine offers real-time analytics and anomaly-detection capabilities that identify unusual patterns in service latency, automatically resolve most service latency issues, and provide less than 1 ms latency to all applications and workloads with no administrative effort. Furthermore, organizations can use a SaaS offering branded Tintri Analytics that further improves the user experience by leveraging real-time analytics, capacity usage trends, and growth projections. Capacity planning and forecasting activities are made easy: customers can create application profiles for a variety of workloads and then execute what-if analysis to understand how changes can impact the infrastructure.

Cloud integration is made possible through VMstore Cloud Connector, which extends VMstore data protection, archival storage, and DR capabilities in the cloud, with support for AWS and IBM Cloud. Different schedules, retention periods, and protection targets can be configured granularly, with efficient snapshots that are compressed and de-duped in real time.

Strengths

Tintri is a great solution for organizations that are looking for a primary storage solution that supports virtualization and VDI workloads. It provides an excellent analytics engine and simple management.

Challenges

The solution’s architecture is designed to address very specific use cases and makes VMstore unsuitable for use as a general-purpose unified block and file primary storage solution.

Purchase Considerations

Tintri VMstore is a specific solution that focuses on a number of use cases such as VDI, virtualization, DevOps, and databases. Its service-oriented architecture is different from traditional block storage in the sense that it does not expose LUNs or volumes. It is also not designed to handle files.

When considering Tintri VMstore, understanding the scope of supported workloads is important due to the focused nature of the solution. Organizations willing to evaluate Tintri for use cases other than those mentioned above should contact the company to understand if the solution can support their workloads.

Radar Chart Overview

Tintri is positioned in the Maturity/Feature Play quadrant. Innovative when it was launched several years ago, the solution is now very mature and stable and has seen little change. It was originally architected around virtualization to provide simple-to-manage, abstracted virtual storage volumes to VMs, a precursor to VMware vVols. This limits the use cases that can be supported by the solution, and its design negatively impacts some of the evaluated key features. It does include noteworthy AI-based analytics, but the solution was designed to solve a niche use case and has little potential for evolution.

Dell Technologies, PowerStore

Formed in 2016, Dell Technologies inherits its storage DNA primarily from EMC Corporation (later known as Dell EMC). Although historically the company has had a very heterogeneous portfolio of storage solutions, the founding of Dell Technologies marked a turning point in terms of rationalization.

The PowerStore series is a unified storage platform that supports block and file storage and VMware vVols. Last year’s report mentioned the existence of 2 PowerStore appliance models (X and T). The X appliances are no longer available on Dell’s website and appear to have been retired.

The solution supports a range of transport protocols, such as iSCSI, FC, NVMe-oF, NVMe/TCP (with up to 4x100GbE ports), vVols, SMB, and NFS, as well as SmartFabric Storage Services, a software tool for automating NVMe/TCP infrastructure discovery and configuration. It’s worth mentioning that the latest PowerStore refresh supports in-chassis, non-disruptive, data-in-place controller upgrades with the Dell Anytime Upgrade program, prolonging the hardware lifespan and increasing the solution’s lifetime and efficiency. Organizations with stringent RPO/RTO requirements can take advantage of either PowerStore’s new native metro replication capability or PowerStore metro nodes, a specific hardware configuration delivered as a cluster and designed to offer synchronous, active-active replication within metro distances, with support for replication between different Dell and 3rd-party arrays.

Dell’s storage products incorporate native AIOps capabilities, such as performance and efficiency balancing, automated recovery from drive failure, intelligent storage object creation, lifecycle management, storage class memory, metadata tiering, and programmable infrastructure. Organizations that need additional storage systems can leverage the CloudIQ solution to benefit from more advanced capabilities, such as health and cybersecurity recommendations, performance impact, anomaly and workload contention analysis, capacity forecasting, and more.

For API and automation support, organizations can either rely on CloudIQ (which provides unified webhook and REST API support across products) or directly access each platform’s own REST APIs. This latter option may, however, introduce complexity for organizations using different platforms.

Kubernetes support is currently available on PowerStore systems through regularly updated CSI plug-ins, which expose storage to Kubernetes clusters via FC, NFS, or iSCSI transport protocols.

Strengths

Dell has further improved the capabilities of its PowerStore platform with capacity, performance, and scalability improvements. Complemented with a solid AIOps platform (CloudIQ), strong VMware integrations, and a compelling non-disruptive controller upgrade model that prolongs the solution lifespan, PowerStore represents a significant leap forward for the company.

Challenges

Cloud integration remains limited; there is no virtual appliance or cloud-based consumption model for PowerStore for all the major hyperscalers. All PowerStore appliances are currently based on TLC flash; the introduction of QLC flash could improve the TCO.

Purchase Considerations

PowerStore provides a comprehensive set of capabilities. The main point to consider before purchasing is whether the solution meets the organization’s needs in terms of cloud integration. The solution supports in-place, non-disruptive controller upgrades, and organizations should also consider the benefits this can bring in terms of longevity, upgradeability, and continuity of operations.

As a versatile unified block and file storage solution, PowerStore is suited to meet the needs of many midsize organizations and small businesses alike. The manufacturer offers multiple appliance models with different capacities and scalability parameters. The solution will cover a range of use cases and can even support some high-performance workloads.

Radar Chart Overview

The PowerStore platform is very capable and is the strongest contender to the solutions in the Leaders circle, but it is negatively impacted by average cloud integrations and lack of QLC flash support, which in turn impact the overall flexibility and $/GB of the solution. Improving at least cloud integrations would likely have a positive impact on PowerStore’s placement.

Hitachi Vantara, VSP E Series

For over 60 years, Hitachi Vantara has specialized in data management for mission-critical digital and industrial environments. It develops intelligent data platforms and hybrid cloud infrastructures, and it offers digital consulting expertise as well.

It provides a range of storage solutions for midsize and large organizations. Its products use the same OS and feature set, allowing users to design their infrastructures with a consistent set of characteristics at both the core and edge levels.

The VSP E series is focused on performance, and its scalability is remarkable in performance and capacity. The VSP E590H and E790H can provide up to 10.9PB of capacity; the new E1090H even provides 26PB (and NVMe-oF capability). The latter offers more performance due to additional CPU cores and memory.

Hitachi Ops Center is an ML-powered management platform that simplifies and improves operations for the entire storage stack. This suite consists of several highly integrated components. Hitachi Ops Center Clear Sight provides cloud-based monitoring capabilities. Hitachi Ops Center Analyzer provides real-time observability and anomaly detection, while Hitachi Remote Ops handles infrastructure issue resolution, with up to 90% of problems being automatically resolved. In addition, a new capability called Secure System Updates allows non-disruptive in-place updates without the need to evacuate an array.

Hitachi has closed the gap in Kubernetes integration by adding support for Kubernetes, OpenShift, and Anthos. Hitachi Cloud Connect was added for cloud integration, allowing cloud-adjacent deployments of VSP in Equinix-based co-locations.

The company offers an interesting STaaS solution called Everflex that allows pay-as-you-go and flexible consumption with guaranteed SLA/SLOs, a fixed-rate card, and integrated analytics. The solution supports scaling up and down, has transparent pricing on a $/GB/month basis, and includes 5 storage service classes with 99.999% or 100% data availability. Recently, multitenancy was added to the STaaS offering and 3 levels of managed services are available, allowing granular control between the customer and Hitachi.

The manufacturer offers an SDS solution called Virtual Storage Software Block (VSS Block). It provides VSS block-ready nodes that allow organizations to scale their SDS system quickly and enable the data plane to be extended from the VSP solutions described above. VSS Blocks run virtualized and integrate with an organization’s core storage platform and existing hypervisor.

Strengths

The company offers reliable storage solutions with a resilient architecture that guarantees performance. Its STaaS offering, Everflex, provides greater flexibility to customers, allowing them to scale their storage resources without having to manage infrastructure. With the broad range of storage solutions and the soon-to-come VSP One offering, the firm’s solutions are a good fit for almost all business environments.

Challenges

Organizations looking to leverage NVMe flash for critical workloads may face hurdles as NVMe/TCP is unsupported, and NVMe-oF is supported only in the VSP 5000 series and the VSP E1090 series. Cloud integration is also average compared to other market competitors. It’s important to consider performance and integration needs carefully when selecting a solution.

Purchase Considerations

The firm offers various purchase options for its data services and storage. Organizations can follow the normal route of hardware purchase through numerous partners around the globe or consider Hitachi’s STaaS offering.

It offers a very extensive range of hardware solutions, and the use cases for these are very diverse and extend to all verticals. Databases, virtualization, containers, and many others are possible with the VSP platform. With the introduction of VSP One (Hitachi’s offering for One Data Platform announced for early 2024), the possibilities will get even better.

Radar Chart Overview

Hitachi Vantara is a Challenger in the Innovation/Platform Play quadrant. Its rating is lowered by average cloud integration capabilities compared to the Leaders and a lack of support for new media types. In terms of business criteria, flexibility and ease of use are also average, impacting Hitachi Vantara’s overall positioning.

HPE, HPE Alletra 5000 and HPE Alletra 6000

A major storage and data center provider, HPE provides storage capabilities in the midsize storage segment with its Alletra product line, launched in 2021.

The Alletra 6000 series AFAs (available in all-NVMe systems) include data reduction mechanisms, such as variable block in-line de-dupe and compression, and support for native application-consistent snapshots as well as replication. In the currently available configurations, however, none of the Alletra 6000 systems incorporate support for NVMe/TCP and NVMe-oF. The vendor also offers the Alletra 5000, a hybrid array that combines flash and HDD, providing the same data services as the Alletra 6000, but at a more attractive price point for non-critical workloads.

HPE is moving away from traditional storage management approaches. Besides its InfoSight for AIOps, organizations can take advantage of its Data Services Cloud Console. This SaaS intent-based provisioning solution enables a cloud-like experience that combines policy-based storage management and a self-service approach to workload provisioning with AI-driven workload placement. Data Services Cloud Console provides a rich and unified set of REST APIs across HPE products, allows workload movement to and from the cloud, and supports advanced security capabilities. HPE also supports cloud storage through HPE Cloud Volumes, a cloud-based platform that allows organizations to provision block volumes on AWS or Azure. Cloud Volumes also offers a backup capability, although it doesn’t support immutable snapshots yet. Finally, HPE also has a good roadmap regarding Kubernetes integration with dedicated CSI drivers for HPE Alletra systems.

The HPE GreenLake STaaS solution appeals to customers with subscription options ranging from traditional purchasing models to models that simplify the transition from Capex to Opex.

Note that HPE recently introduced GreenLake for Block Storage built on Alletra Storage MP, which is a new disaggregated/composable storage platform. The solution is available for both midsize and large enterprises; GreenLake understands how hardware components map to specific personas, and it helps compose storage according to customer requirements. This is achieved by dynamically configuring compute resources or JBOF blocks, as well as configuring software. GigaOm expects that Alletra Storage MP will replace existing Alletra solutions in the mid-term future.

Strengths

The Alletra 5000 and Alletra 6000 systems are capable platforms. The greatest strengths of the firm’s offerings reside in InfoSight, an excellent, AI-driven analytics and management platform that greatly improves and eases storage management activities, and in new services such as Data Services Cloud Console and GreenLake. GreenLake for Block Storage built on Alletra Storage MP is promising.

Challenges

The manufacturer’s focus on delivering its solution portfolio through its GreenLake cloud consumption model aims to ease storage management and scalability challenges through obfuscation of individual storage system capabilities, which may put off organizations seeking to manage discrete traditional on-premises solutions. A limiting factor of Alletra 5000 and Alletra 6000 systems is the inability to deliver file services; however, the next-gen Alletra Storage MP platform addresses this challenge. Although the Alletra 6000 series supports NVMe flash, currently there are no NVMe-oF or NVMe/TCP capabilities. Here again, the new Alletra Storage MP platform supports NVMe-oF and NVMe/TCP.

Purchase Considerations

Alletra 5000 and Alletra 6000 systems provide outstanding analytics capabilities, but do not provide file protocol support. Organizations considering a unified storage solution may need to consider this when evaluating Alletra systems, or they may instead consider switching over to the new Alletra Storage MP platform.

The Alletra 5000 and Alletra 6000 systems are capable of covering a broad spectrum of use cases across multiple verticals. Alletra 5000 appliances will be for general-purpose workloads, VM farms, test/dev environments, and as a backup or DR target. Alletra 6000 appliances are suited for databases, VM and container farms, and test/dev environments.

Radar Chart Overview

Compared to last year, the company flipped over to an almost mirror position, from Maturity/Platform Play to Innovation/Platform Play, due mainly to the introduction of Alletra Storage MP, a modern and disruptive approach to storage. It has a respectable roadmap, but the pace of innovation remains average compared to other competitors, and the Alletra 5000 and Alletra 6000 systems are lacking in a few areas, such as the absence of file storage. Positioning may improve once Alletra Storage MP starts gaining momentum.

IBM, IBM FlashSystem 7300

One of the oldest storage companies still active, IBM continues to offer relevant storage solutions across multiple market segments, including primary storage. In this space, the company offers FlashSystem, a block storage portfolio that consists of various appliances targeted at midsize and large enterprises.

FlashSystem 7300 is an NVMe AFA that supports 2.5-inch NVMe FlashCore Modules from IBM (with higher densities and self-compression), industry-standard 2.5-inch NVMe flash drives, and SAS SSD drives. The system also supports storage-class memory; several configurations exist, achieving an effective capacity of up to 375TB (assuming 3:1 data reduction) in a 2U footprint.
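Effective-capacity figures such as the one above depend entirely on the assumed data reduction ratio, so it can help to back out the pre-reduction capacity before comparing vendors. The following is a minimal sketch of that arithmetic; the function names are illustrative and not part of any IBM tool, and the 2:1 ratio in the second call is a hypothetical workload assumption, not a figure from the report.

```python
def usable_capacity_tb(effective_tb: float, reduction_ratio: float) -> float:
    """Capacity before data reduction implied by an effective-capacity claim."""
    if reduction_ratio <= 0:
        raise ValueError("reduction_ratio must be positive")
    return effective_tb / reduction_ratio


def effective_capacity_tb(usable_tb: float, reduction_ratio: float) -> float:
    """Effective capacity for a given pre-reduction capacity and reduction ratio."""
    if reduction_ratio <= 0:
        raise ValueError("reduction_ratio must be positive")
    return usable_tb * reduction_ratio


# 375TB effective at the report's assumed 3:1 ratio
print(usable_capacity_tb(375, 3.0))     # 125.0

# Same pre-reduction capacity if a workload only achieves 2:1 (hypothetical)
print(effective_capacity_tb(125, 2.0))  # 250.0
```

Under these assumptions, a workload that reduces at 2:1 rather than 3:1 would see roughly two-thirds of the quoted effective capacity, which is why the achievable ratio should be validated against real data.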

The solution is versatile and capable of addressing multiple use cases by combining capacity, performance, and low latency. From a connectivity perspective, the storage array supports iSCSI (iSER-iWARP and RDMA over converged Ethernet or RoCE) as well as FC and NVMe-oF.

The FlashSystem 7300 is based on Spectrum Virtualize, a storage OS now common to the company’s entry-level, mid-range, and high-end storage systems. Supported features include automated tiering and other resource optimization techniques to improve capacity consumption and $/GB ratio, such as compression, de-dupe, unmap, and automated thin provisioning. Several replication capabilities are available, including FlashCopy, Metro Mirror (synchronous replication), policy-based asynchronous replication, 3-site replication, and Global Mirror with change volumes. Additionally, a HA solution called HyperSwap can be implemented.

The Storage Insights predictive analytics suite can monitor both IBM and several 3rd-party systems, helping to establish a complete view of the storage infrastructure from a single interface. Automation can be achieved by taking advantage of the Spectrum Virtualize REST APIs. These APIs are common to all of the firm’s systems based on Spectrum Virtualize, allowing organizations that use multiple IBM storage products based on Spectrum Virtualize to baseline their automation functions.

Integration with the cloud is achieved through cloud-tiering features embedded in the systems and virtual instances of Spectrum Virtualize deployed in the public cloud to provide a consistent user experience and set of features across different environments. Kubernetes clusters can provision block storage dynamically through the company’s block storage CSI driver, but functions remain limited.

IBM Storage-as-a-Service allows organizations to consume capacity on demand. For primary storage, the solution is branded IBM Block Storage as a Service and is based on the FlashSystem storage.

Organizations can implement a ransomware protection strategy with FlashSystem through the firm’s Safeguarded Copy, a technology that provides immutable copies of data on a FlashSystem or SAN Volume Controller, and through Copy Services Manager, an external automation and scheduling tool.

Strengths

Besides being based on a robust and proven architecture, one of the highlights of the FlashSystem series is the AI-based Storage Insights platform, which provides predictive analytics and proactive support capabilities. The solution also includes laudable data efficiency capabilities and a rich set of replication topologies. Finally, the 7300 version now offers a single platform compared to previous gens for which customers had to choose between an all-flash and a hybrid storage solution.

Challenges

Although capable in block storage, FlashSystem does not provide unified block and file storage capabilities, limiting its applicability in environments where multiprotocol support is desirable. Kubernetes support is limited. Lack of file support remains a concern.

Purchase Considerations

The primary purchase consideration when evaluating FlashSystem should be around file workload supportability, since the solution does not provide any native file support. Cloud integration is acceptable, but it may require manual configuration to deploy virtual instances of Spectrum Virtualize.

The solution will be relevant for all block-based primary storage workloads including virtualization, databases, and more. Putting the absence of file support aside, there is no particular limitation to be mentioned, and the solution is used across multiple industries.

Radar Chart Overview

The positioning as a Maturity/Platform Play is due to the fact that IBM FlashSystem architecture is mature and proven, with no changes foreseen. There are no plans to support file workloads; the roadmap focuses largely on performance and stability improvements.

Infinidat, InfiniBox

Infinidat was founded in 2011; its corporate HQs are in Herzliya, Israel, with US HQs in Waltham, MA. It focuses on primary storage systems (InfiniBox, InfiniBox SSA II) and data protection systems (InfiniGuard) for large and midsize businesses.

It offers modern, AI-based hybrid storage solutions that optimize data placement and reduce overall TCO. InfiniBox and InfiniBox SSA II leverage Neural Caching technology, which uses DRAM and flash storage to optimize I/O, and InfiniRAID, which optimizes data placement on HDDs and flash storage, creating a highly performance-optimized data path. The company has an easy-to-use, AI-driven management system and supports NVMe/TCP and NVMe-oF. Its SDS technology (InfuzeOS) is media-independent and can support other commodity-based media types in the future. In mid-2023, it ported InfuzeOS to AWS, providing a fully functioning version of its on-premises system in the public cloud. InfiniVerse provides infrastructure-wide predictive analytics, monitoring, and reporting on capacity and performance.

The firm delivers essential cyber resilience for storage with InfiniSafe built into its systems. In 2023, InfiniSafe was enhanced with InfiniSafe Cyber Detection, an optional deep scanning and analysis tool for identification of data that may be compromised by malware or ransomware. InfiniBox offers a clever implementation of a Kubernetes CSI plug-in and has a complete set of integrations for major OS and virtualization platforms.

In October 2023, the manufacturer launched SSA Express, an addition to the InfiniBox system. It offers an all-flash functionality for midsize systems of up to 320TB within a traditional hybrid system. This feature caters to smaller workloads requiring all-flash performance and provides the same performance, availability, and ease of use as the traditional InfiniBox SSA, but at a smaller scale. With this feature, the company can consolidate workloads that require other point solutions and prevent small AFA sprawl since many of those arrays can provide consistent performance for only one or two workloads. This further extends the ROI and TCO because the software powering SSA Express is built into InfuzeOS and provided at no cost via a non-disruptive upgrade.

Strengths

The firm has high-end enterprise characteristics and a balanced AI-based architecture that enables users to consolidate a wide range of workloads in a single system and deliver a consistent performance experience. With the STaaS offerings, most midsize businesses are becoming potential customers for Infinidat solutions.

Challenges

The lack of support for QLC 3D NAND might become challenging for some customers in the future. The solution is designed for rack scale; although it offers laudable density, large organizations may prefer solutions with a more compact footprint for specific deployment scenarios. To address this demand, the SSA platform now provides partially populated versions that are 60% and 80% of full capacity and can be scaled up to 100% capacity non-disruptively. This helps in the midsize market where fully populated systems of the past may have been too much for customers considering capacity and entry cost in this space.

Although the focus is shifting to more all-flash capabilities on the Infinidat system, there is still a gap compared to some of the competition that boasts true all-flash and the use of QLC NAND.

Purchase Considerations

The company has an all-inclusive licensing model that incorporates all core features at no additional cost, including support and a technical advisor who acts as a liaison to the customer’s storage admin and architect teams. The only add-on product sold separately is the InfiniSafe Cyber Detection scanning system. It is sold as a multi-year subscription based on how much data a customer scans vs. their total storage footprint. For example, if a customer had a multi-PB InfiniBox but planned to scan only 500TB of data, they would subscribe to a 500TB license.

The firm offers 2 STaaS models: Capacity on Demand (COD) and FLX. The COD pricing model is a mix based on the amount of Capex storage upfront and cloud-like operating expansions to add capacity as needed; it is simply licensed and made available. The FLX model is Opex-based with cloud-like, pay-as-you-go consumption (up or down) of storage capacity on a month-to-month basis.

The firm provides enterprise storage solutions that are reliable, high-performing, and flexible enough to meet the demands of today’s data-intensive digital enterprises. Its portfolio includes robust storage and data protection capabilities that can be easily leveraged to address the biggest data management and protection challenges for private, public, and hybrid cloud architectures. Its solutions support primary storage, cloud storage, BC, and DR. It backs up its claims regarding its systems with a variety of guarantees for performance, availability, and cyber resilience and recovery.

Radar Chart Overview

The firm is a Leader in this Radar due to its high scoring on most of the key features and its solid coverage across business criteria. It is innovating at a fast pace and has a core focus on cyber resiliency and AI-based analytics. Compared to the other Leaders, lack of support for QLC NAND and average cloud integrations impact Infinidat’s placement on the Radar.

iXsystems, TrueNAS

iXsystems has an interesting background in the open-source world and offers various primary storage options built around TrueNAS, an SDS solution based on OpenZFS that organizations can deploy either in a self-hosted fashion or on pre-configured iXsystems hardware appliances. It was founded in Silicon Valley, CA, in 2002.

The company offers 2 community editions of TrueNAS software: CORE and SCALE. Both community editions are free, open-source versions of TrueNAS with community support. CORE is based on FreeBSD for scale-up, and SCALE is based on Linux for scale-out and containerized apps. Users can migrate from CORE to SCALE if and when they choose to migrate and access scale-out features.

The firm also offers a range of TrueNAS enterprise appliances with production-grade deployments and professional support from iXsystems. The enterprise edition offers a choice between scale-up (13.x) and scale-out (23.x). Over the past year, the company made significant improvements to its scale-out software, bringing it up to par with the enterprise edition in terms of capabilities, as well as introducing support for large-capacity QLC drives, thus enabling QLC-dense systems.

TrueNAS is built with flexibility in mind, with the TrueCommand management platform for managing any combination of enterprise appliances and community edition systems.

The firm offers single-controller and dual-controller systems with HA and failover. R-Series is the single-controller series largely oriented toward capacity that will meet the requirements of organizations seeking an optimized $/GB cost ratio. Available in all-flash or hybrid configurations, R-Series appliances are geared for scalability and will best serve capacity-oriented, read-intensive workloads, acting either as NAS, SAN, or an object storage target. R-Series also has one model (R30) that is all-NVMe.

Introduced in late 2023, F-Series systems are all-NVMe with dual controllers and HA. These systems have enterprise, dual-ported Gen4 NVMe SSDs from 7.6TB to 30TB for density as high as 720TB and 30GB/s in a 2U form factor.
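The density figure quoted for the F-Series can be sanity-checked with simple arithmetic. The sketch below is illustrative only: the helper names are hypothetical, and the resulting drive count is an inference from the quoted numbers (720TB with 30TB drives), not a specification stated in the report.

```python
import math


def drives_needed(target_raw_tb: float, drive_tb: float) -> int:
    """Smallest number of identical drives whose raw capacity reaches the target."""
    if drive_tb <= 0:
        raise ValueError("drive_tb must be positive")
    return math.ceil(target_raw_tb / drive_tb)


def raw_capacity_tb(drive_count: int, drive_tb: float) -> float:
    """Raw (pre-RAID, pre-reduction) capacity of a set of identical drives."""
    return drive_count * drive_tb


# Drive count implied by the quoted maximum density with the largest drive size
count = drives_needed(720, 30)
print(count)                       # 24
print(raw_capacity_tb(count, 30))  # 720
```

Note that this is raw capacity; usable capacity after OpenZFS redundancy and spare allocation would be lower and depends on the pool layout chosen.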

The M-Series systems can scale beyond R-Series, and are designed for performance-oriented workloads. They support NVDIMM (write caching) and NVMe flash (read caching), as well as choice among flash pools, HDD pools, or both (hybrid).

Midsize businesses may find a combination of both F-Series/M-Series and R-Series will offer a good balance between performance and price by taking advantage of TrueNAS replication capabilities.

X-Series, on the other hand, consists of compact appliances designed to provide entry-level storage capabilities without compromising on capacity, with up to 1PB raw capacity in 6U for the X20 model.

Strengths

The firm provides flexible deployment models, delivering block, file, and object storage through a broad range of appliances that can scale up or scale out as needed. The solution supports cloud backups, can be deployed on public clouds, and supports Kubernetes.

Challenges

The solution has no AI-based analytics. It also lacks intelligent data placement capabilities, a feature that would optimize storage consumption and cost of ownership. As it’s primarily an SDS solution, it also does not offer any STaaS consumption model. Cloud integration could be improved.

Purchase Considerations

TrueNAS comes in 2 different flavors, so understanding the initial project scope and growth figures is key to selecting the proper deployment model, even though users can migrate from the CORE to the SCALE edition at any time. TrueNAS can be deployed for free on a VM, allowing organizations to evaluate the feature set of both editions before making a decision. The lack of AI-based analytics capabilities and intelligent data placement are additional considerations to be taken into account before making a purchase.

The solution covers a broad set of use cases and verticals. It is a flexible SDS solution that allows organizations to deploy primary storage at their own pace.

Radar Chart Overview

TrueNAS’s positioning reflects the lack of some evaluated capabilities for either of the software options for appliances. Compared to the competition, several of these capabilities are either not yet available or only partially supported. That said, the company is innovating and has a very decent roadmap, so improvements in positioning can be expected in the next 12 to 24 months, including a potential crossing over to the Innovation/Platform Play quadrant.

Lightbits Labs

Founded in 2016, Lightbits Labs implements high-performance block storage that’s simple, scalable, and cost-efficient for any cloud. It offers a cloud data platform that delivers efficiency, simplicity, and agility for modern data centers. It is the inventor of the NVMe/TCP protocol, which quickly became a standard, and provides SDS that is easy to deploy at scale and delivers performance equivalent to local flash to accelerate cloud-native applications in bare metal, virtual, or containerized environments.

Lightbits is a high-performance, highly available software-defined block storage solution. It delivers scale-out, composable NVMe/TCP storage that performs like local flash. It simplifies infrastructure management and operations while simultaneously lowering cost. Edge clouds and private clouds use Lightbits to provide “NVMe as-a-service” – logical volumes with consistently low latency to compute nodes. Lightbits removes the need for special fabrics and network protocols to achieve low latency, high IO/s, and high bandwidth. Customers can use ubiquitous TCP/IP on Ethernet and achieve all-flash-array performance as well as enriched data services.

The solution relies on standard x86 servers, standard NICs, and NVMe drives to deliver storage services through a standard TCP/IP Ethernet network via NVMe/TCP.

Lightbits software is available on both the Azure and AWS marketplaces. The software license is portable between on-premises and the public cloud. The solution delivers the same high-performance storage and feature-rich experience on-premises as it does on the public cloud, making it well suited for hybrid implementations. With Lightbits, essential enterprise data services are included in the software license, so customers never pay extra for snapshots, clones, or restores. Lightbits’ self-managed capabilities include self-healing, auto-scaling, incremental backups, rolling updates, events monitoring, and more.

Strengths

As a founding partner of NVMe/TCP, Lightbits Labs provides a showcase implementation of the NVMe/TCP protocol. It offers high-performance storage capabilities for low-latency, I/O-sensitive workloads with support for the newest media types. The solution offers broad compatibility with OSs and virtualization or cloud-native container platforms and demonstrates outstanding HA features.

Challenges

The solution does not provide any AI-based analytics yet. The focus on high-performance use cases, along with the absence of unified storage support (Lightbits Labs supports only block storage) may lead organizations looking for a polyvalent solution that includes block and file capabilities to find other solutions.

Although the firm is the leader in NVMe/TCP, it does not offer or see the need for NVMe-oF. Environments where performance is less of a need might look at competitive solutions that utilize hybrid technologies.

Purchase Considerations

The solution is easily deployed on-premises as SDS on the hardware chosen by the customer. In the cloud, the software can be purchased through the marketplaces of both hyperscalers (AWS and Azure).

The use cases for Lightbits are plentiful, although the focus on high-performing storage environments narrows them to a more specific set. Databases, VMs, and other high-performance environments are most suitable for Lightbits solutions. With the ability to deploy the solution in two hyperscaler cloud environments, even more customers can take advantage of Lightbits’ blisteringly fast technology.

Radar Chart Overview

Due to its focus on specific use cases and the lack of some capabilities noted above, the firm is currently placed among the Feature Play solutions. The roadmap is good, execution in NVMe/TCP is exceptional, but implementation of other key features remains average, resulting in its positioning as a Challenger.

NetApp

Founded in 1992, NetApp is headquartered in San Jose, CA. It’s well known for its file storage solutions; it also made a strategic bet a few years ago to extend its footprint in the public cloud, a key differentiator in this space.

The company offers primary storage capabilities across a range of storage solutions for organizations of all sizes. Its most popular primary storage solutions have been the Fabric-Attached Storage (FAS) platform, providing hybrid storage capabilities, and the AFF platform, providing all-flash storage. The vendor continues to deliver a seamless experience across on-premises and public cloud environments with storage systems and services based on the Ontap storage OS, which provides multiprotocol data access, and on BlueXP, a unified control plane that comprises multiple storage and data services.

All entry, mid-range, and high-end systems, including NVMe-based and hybrid models, can count on a series of high-level integrations common to all storage systems (such as with SnapMirror for data replication), as well as a unified platform for monitoring and analytics (Active IQ). AFF A-series products support end-to-end NVMe, encompassing both the back-end NVMe SSDs and front-end NVMe-oF connectivity to hosts. The firm provides both NVMe/FC and NVMe/TCP support, and the solutions help customers modernize their infrastructure with higher performance, lower latency, and simplicity of deployment.

It also offers ASA systems, which are all-flash, block-only storage systems. Those systems support either TLC or QLC flash, along with NVMe-oF and NVMe/TCP connectivity, and also run Ontap. They are suitable for SAN-based environments where internal policies mandate storage isolation and dedicated systems to support, for example, ERP systems or mission-critical databases.

The firm uses AIOps to drive down administration costs for its customers through Active IQ, a digital advisor that simplifies the proactive care and optimization of NetApp storage. It also uses AIOps to uncover opportunities to improve the overall health of the storage environment and provide the prescriptive guidance and automated actions to make it happen. The BlueXP platform provides advanced security measures against ransomware and suspicious user or file activities when combined with the native security features of Ontap storage.

Cloud integration is best-in-class thanks to BlueXP, the latest evolution of the firm’s cloud management capabilities. Ontap technology ensures seamless operations across locations and clouds, simplifies management, and enables data-centric operations. NetApp storage can be consumed as a first-party cloud offering on AWS, Azure, and GCP. BlueXP also supports a host of other data services, such as observability, governance, data mobility, tiering, backup and recovery, edge caching, and operational health monitoring.

The company offers Kubernetes support on primary storage through its open-source Astra Trident CSI-compliant dynamic storage orchestrator. Moreover, its Astra Control service enables advanced data protection, DR, portability, and migration for Kubernetes workloads using the Cloud Volumes platform as a storage provider both within and across public clouds and for Ontap on-premises.

NetApp also offers a broad set of STaaS consumption options through its Keystone offering, providing multiple service levels for unified file and block, block only, and object storage.

Strengths

The company offers a comprehensive set of enterprise-grade capabilities that maintain consistency regardless of the deployment model. Its BlueXP offering provides next-level management and orchestration capabilities complemented by a host of SaaS-based data services, simplifying storage and data management at scale, whichever deployment model is chosen. It’s worth mentioning that Ontap now supports block, file, and object workloads.

Challenges

The company offers a large ecosystem of solutions and services, so perhaps the main challenge some organizations may face is understanding where to start. Using storage building blocks (appliances), customers can become familiar with the ecosystem and its capabilities, gradually gaining a better understanding and building confidence. Cloud-native and cloud-first customers are increasingly adopting the firm’s primary storage services through first-party cloud offerings, which provide an infrastructure-free path to become familiar with NetApp.

Purchase Considerations

In the primary storage space, the only purchase considerations that organizations may have to take into account when evaluating the company’s solutions are related to selecting the solutions that match their workload profiles: ASA systems aside, both AFF and FAS will provide multiprotocol support. As a rule of thumb, all-flash AFF systems will be the most versatile, providing a good balance of performance and capacity (with the QLC-based C-Series). For capacity-oriented use cases or small businesses, FAS systems may be more affordable.

The firm’s portfolio is among the most coherent and comprehensive in the storage industry. All of the systems, even the cloud-based offerings, leverage its Ontap OS, making the solution suitable for virtually any kind of workload or business use case, regardless of the location. The most common AFF and FAS systems deliver unified block, file, and object storage, while ASA systems are suitable for isolated, block-only environments.

Radar Chart Overview

NetApp is positioned as a Leader due to its consistent delivery of the majority of key features, emerging features, and business criteria evaluated in this Radar. The combination of fast-paced innovation (notably in cloud integrations) and the ability to cater to a broad range of workloads and use cases is why it’s positioned in the Innovation/Platform Play quadrant.

Pure Storage, FlashArray

Founded in 2009 and headquartered in Silicon Valley, Pure Storage has focused on flash storage since its inception. It develops proprietary storage systems (including proprietary flash hardware) and storage architectures that support multigenerational, non-disruptive upgrades.

The FlashArray product line supports unified block and file storage and serves primary storage use cases with four products: the FlashArray//X and //XL, which are for business-critical applications and performance-oriented workloads; the FlashArray//C, which targets capacity-oriented workloads with an optimized $/GB price; and FlashArray//E, a capacity-optimized system with a competitive $/GB price point. In addition, organizations can also deploy Cloud Block Store, which brings primary storage and enterprise data services to the cloud.

All major activities, such as capacity expansions, controller upgrades, hardware replacement, and software upgrades, can be performed without incurring downtime or service interruption. This seamlessness is made possible by a highly available architecture in which all modules are hot-swappable, controllers are stateless, and all components are configured either in mirrored mode or in active-active HA configuration. In addition, the firm’s systems embed proprietary Directflash NVMe modules that optimize data placement and erasure operations, increasing available capacity and improving media endurance, even with QLC flash.

All FlashArray systems use the same Purity OS. They are unified file and block storage systems that benefit from a common set of data services. Among these, data efficiency mechanisms, such as always-on in-line deduplication, an improved compression method, and pattern removal, allow FlashArray systems to achieve the effective capacity highlighted above. The entire FlashArray product family supports FC, NVMe-oF (RoCE and FC), SMB, and NFS; NVMe/TCP was added in February 2023.

Pure1 combines AI-based analytics with AIOps and self-driving storage capabilities to manage all of the company’s products, platforms, and services, as well as provide proactive monitoring and AI-driven recommendations. The solution has been expanded recently to include ransomware protection features such as anomaly detection. The company also provides compelling container storage support with Portworx, its Kubernetes-native storage solution, which is available in an edition tailored for use on FlashArray systems.

Strengths

The FlashArray platform provides a comprehensive set of data services and advanced features, including AI-based analytics, AIOps, and Kubernetes support. Organizations can combine FlashArray//X and //C models and define storage policies to optimize data placement and costs across storage tiers. Evergreen//One also provides an excellent STaaS implementation.

Challenges

The firm still lags behind in terms of cloud integrations, with only Cloud Block Store currently offered. The solution unsurprisingly provides block storage in the cloud, but it is not yet providing file services. With a portfolio that now covers all performance and capacity tiers, a policy engine capable of orchestrating data placement across tiers/appliance types would be welcome.

Purchase Considerations

Organizations that require cloud block storage along with on-premises storage (for replication purposes, for example) should assess whether Cloud Block Store can cover the cloud of their choice; the solution is currently available on AWS and Azure. In addition, unified block and file storage is available on-premises but not yet in the cloud.

Pure offers a comprehensive portfolio thanks to the addition of FlashArray//E in June 2023, bringing the $/GB ratio to parity with HDDs in this specific area. The platform is therefore capable of covering a very broad spectrum of use cases. Furthermore, all the FlashArray systems use the same OS and share the same set of services, making them suitable for organizations that require primary storage and for those that want to automate storage provisioning and move to a cloud-like consumption model.

Radar Chart Overview

The company is positioned as a Leader due to its consistent delivery of the majority of key features, emerging features, and business criteria evaluated in this Radar. It’s an innovative company that offers non-disruptive, multigenerational controller upgrades, and it has a comprehensive product portfolio that suits nearly all use cases and workload footprints. This is why it’s positioned in the Innovation/Platform Play quadrant.

StorONE

StorONE is a private company founded in 2012 and headquartered in New York, NY. It has focused since its inception on building an SDS platform optimized for use with a mixture of flash and HDD media.

It offers a versatile platform that aims to deliver optimal workload placement and maximal data protection with minimal TCO. The solution can be deployed either as an SDS or through the firm’s S1:AFAn hardware appliance.

The SDS platform supports NVMe and SATA/SAS SSDs, as well as HDDs. In terms of protocols, the StorONE Enterprise Storage Platform supports block (FC, iSCSI, NVMe-oF, NVMe/TCP), file (NFS and SMB), and object (S3) storage.

Storage is provided through volumes, which are abstracted from the underlying storage layer. A data-centric approach enables per-volume configuration of data services and protection capabilities, as well as replication policies, snapshot policies (including immutable snapshots), different vRAID levels, and individual selection of protocols. Data can be replicated synchronously (sync mirror) or asynchronously to DR sites. The solution is optimized for fast RAID rebuilds, allowing rapid return to healthy status even for HDDs. A point of interest revolves around snapshot capabilities: up to millions of active, application-consistent snapshots can be supported, with automated tiering of old snapshots to reduce costs, with no performance impact.

Cloud integration exists with Microsoft Azure through the S1:Azure offering, which allows the company’s workloads to run on top of Azure Managed Disk tiers and enables automated data tiering to provide TCO optimizations. S1:Azure can be used in several ways: cloud-native or as a hybrid cloud extension, and for backup, archive, and DR use cases. In hybrid cloud and DR use cases, continuous replication plays a key role, and for DR, workloads are stored in a native cloud format, making instant failover to the cloud possible.

A STaaS consumption model is offered for organizations with high resource utilization, starting at 18TB and without a long-term commitment requirement (it can be canceled with 90 days’ notice).

2023 improvements include a new management interface and AI/ML anomaly detection for production volumes (recoverable via snapshots), as reported in GigaOm’s Sonar reports for Block-Based Primary Storage Ransomware Protection and File-Based Primary Storage Ransomware Protection.

Strengths