Automatically Resize Amazon EBS Capacity with Zesty Disk for Cost Efficiency and Consistent Performance

Solution to maximize value derived from Amazon EBS

This is a Press Release edited by StorageNewsletter.com on December 7, 2023 at 2:01 pm

By Aviram Levy, director of product enablement and cloud alliance, Zesty, Adinah Brown, product marketing manager team lead, Zesty, and Siva Sadhu, Aaron Curtis, and Anudeep Burugula, AWS

Cloud computing has helped organizations adapt their infrastructure to changing business needs. One of the key drivers for companies moving to the cloud is the ability to scale up and down infrastructure capacity as needed.

By dynamically adjusting infrastructure capacity based on usage fluctuations, organizations gain both operational and cost benefits. Aligning resources with actual needs reduces the operational load required to ensure there’s sufficient capacity and mitigates the risk of application failure.

Cost efficiency improves as well. Businesses no longer need to provision resources in excess of what they need. Instead, they pay only for the resources they require, minimizing waste.

In this post, we will share how Zesty Disk helps achieve greater cost efficiency and consistent performance when managing Amazon Elastic Block Store (Amazon EBS) volumes. The cloud-native solution optimizes storage at run time without adding complexity and without being exposed to any data on the disk.

Zesty was built with the vision of helping AWS customers make their cloud infrastructure more dynamic, and therefore more adaptable to changing business needs. Zesty is an AWS Specialization Partner and AWS Marketplace Seller with the Cloud Operations Competency.

Modifying EBS volumes using elastic volumes

Amazon EBS Elastic Volumes allow you to increase volume size, change volume type, or adjust the performance of your EBS volumes. If your instance supports Elastic Volumes, the changes can be done without detaching the volume or restarting the instance. You can continue to use your application while the change takes effect.

Before modifying a volume that contains valuable data, it’s a best practice to create a snapshot of the volume in case you need to roll back your changes. Once you have the snapshot of the volume, you can request the volume modification.

If the size of the volume was modified, you must extend the volume’s file system to take advantage of the increased storage capacity.
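The snapshot-then-modify sequence described above can be sketched in Python. This is a minimal sketch under stated assumptions rather than an official procedure: it expects an EC2 client object, such as the one returned by `boto3.client("ec2")`, to be passed in, and the function name `grow_volume`, the polling interval, and the timeout are illustrative choices that do not come from the original post.

```python
import time


def grow_volume(ec2, volume_id, new_size_gib, poll_seconds=15, timeout_seconds=3600):
    """Snapshot an EBS volume, then request a larger size via Elastic Volumes.

    `ec2` is assumed to be a boto3 EC2 client, e.g. boto3.client("ec2").
    Returns the ID of the safety snapshot.
    """
    # 1. Take a snapshot first so the change can be rolled back if needed.
    snapshot = ec2.create_snapshot(
        VolumeId=volume_id,
        Description=f"Pre-resize backup of {volume_id}",
    )
    snapshot_id = snapshot["SnapshotId"]
    # The default waiter settings may be too short for very large volumes.
    ec2.get_waiter("snapshot_completed").wait(SnapshotIds=[snapshot_id])

    # 2. Request the modification. Size is in GiB and can only be increased.
    ec2.modify_volume(VolumeId=volume_id, Size=new_size_gib)

    # 3. Poll until the volume reports a state in which the new size is usable.
    deadline = time.monotonic() + timeout_seconds
    while True:
        result = ec2.describe_volumes_modifications(VolumeIds=[volume_id])
        state = result["VolumesModifications"][0]["ModificationState"]
        if state in ("optimizing", "completed"):
            return snapshot_id
        if state == "failed":
            raise RuntimeError(f"Modification of {volume_id} failed")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Modification of {volume_id} did not finish in time")
        time.sleep(poll_seconds)
```

Once the function returns, the file system still has to be extended on the instance itself, for example with `growpart` followed by `resize2fs` (ext4) or `xfs_growfs` (XFS).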

There are a few approaches to automating volume resizing with AWS Step Functions and AWS Systems Manager, but they require engineering effort: setting up the cloud infrastructure, cataloging the existing EBS volumes, running monitoring agents on Amazon Elastic Compute Cloud (Amazon EC2) instances, and so on. This adds noticeable implementation and maintenance overhead.

Another key consideration is that you can only increase volume size. You can’t decrease the EBS volume size, but if a smaller volume is preferred you can create a smaller volume and then migrate your data to it using an application-level tool such as rsync.

How Zesty Disk delivers greater cost efficiency

With its proprietary algorithm, Zesty Disk makes it possible to automatically expand and shrink block storage without any risk to application stability. The block storage autoscaler shrinks and expands volumes at run time, right-sizing storage to the benefit of both application stability and cost.

The solution’s AI algorithm responds to changing application demand, ensuring users pay only for the storage they need.

Let’s dive deeper into some of the benefits Zesty Disk delivers for AWS customers using Amazon EBS.

Zesty Disk brings about cost optimization by dynamically adjusting storage capacity based on data requirements. By continuously monitoring usage metrics and instance metadata, it effectively determines when to shrink or expand storage sizes to match the data volume. This flexible approach ensures resources are allocated optimally, minimizing unnecessary costs.

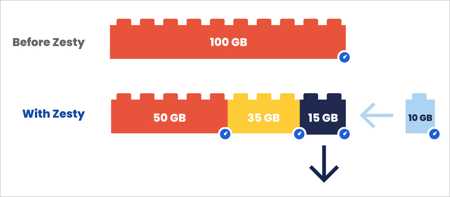

When there is less data, Zesty Disk automatically shrinks the storage capacity to avoid overprovisioning. By decoupling large filesystem volumes into smaller volumes, it can remove unused or underutilized storage, freeing up resources and reducing costs. This proactive approach to right-sizing storage ensures efficient resource allocation without compromising application performance.

Conversely, when there’s an increase in data, Zesty Disk seamlessly expands the storage capacity. It can add smaller disks to accommodate the growing data volume. This elasticity allows businesses to scale their storage infrastructure on demand, avoiding overprovisioning and unnecessary expenses.

By dynamically adjusting storage capacity based on data fluctuations, Zesty Disk delivers cost optimization by ensuring businesses only pay for the storage they require and use, saving valuable resources and enhancing overall financial performance.
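The right-sizing idea in this section can be illustrated with a little arithmetic. The sketch below is not Zesty's proprietary algorithm: it assumes a pool of equally sized small volumes and a fixed target utilization, and the names `volumes_needed` and `plan_resize` as well as the 75% default are hypothetical choices made for illustration only.

```python
import math


def volumes_needed(used_gib, volume_size_gib, target_utilization=0.75):
    """Number of equally sized volumes that keeps utilization at or below the target."""
    if used_gib < 0 or volume_size_gib <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("invalid sizing parameters")
    usable_per_volume = volume_size_gib * target_utilization
    # Always keep at least one volume so the file system has somewhere to live.
    return max(1, math.ceil(used_gib / usable_per_volume))


def plan_resize(used_gib, current_volumes, volume_size_gib, target_utilization=0.75):
    """Positive result: volumes to add. Negative: volumes that could be removed."""
    needed = volumes_needed(used_gib, volume_size_gib, target_utilization)
    return needed - current_volumes
```

For example, 300 GiB of data on ten 100 GiB volumes gives `plan_resize(300, 10, 100) == -6`: six volumes could be removed while staying at or below 75% utilization.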

Eliminating downtime

By decoupling large filesystem volumes into smaller volumes, Zesty Disk can make real-time adjustments to support the application’s availability and ensure it doesn’t run into downtime due to insufficient disk space.

When a change in storage capacity is required, Zesty Disk uses autoscaling technology to dynamically resize the storage volume. The ML algorithm analyzes usage metrics and instance metadata, predicts the storage needs, and adjusts the volume accordingly. This ensures the application has the optimal storage capacity to perform efficiently.

The process of adjusting the storage volume is executed smoothly. Zesty Disk serializes the filesystems on the disk, replacing a large volume with multiple smaller volumes. If additional storage is needed, it can add smaller disks seamlessly. When capacity needs to be reduced, it can evict a disk by redistributing its data to other volumes before removing it.
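The eviction step can be modeled in a few lines. This is a toy model under loud assumptions, not Zesty Disk's implementation: data is treated as a single number of GiB per volume, the `Volume` class and `evict` function are hypothetical, and the "fill the emptiest volume first" policy is simply one reasonable choice.

```python
from dataclasses import dataclass


@dataclass
class Volume:
    name: str
    size_gib: float
    used_gib: float = 0.0

    @property
    def free_gib(self):
        return self.size_gib - self.used_gib


def evict(volumes, name):
    """Return a new pool without `name`, with its data moved to the other volumes."""
    victim = next((v for v in volumes if v.name == name), None)
    if victim is None:
        raise KeyError(name)
    # Work on copies so the caller's pool is left untouched.
    survivors = [Volume(v.name, v.size_gib, v.used_gib) for v in volumes if v.name != name]
    if sum(v.free_gib for v in survivors) < victim.used_gib:
        raise ValueError("not enough free space to evict " + name)
    remaining = victim.used_gib
    # Move data to the emptiest volumes first until nothing is left to move.
    for v in sorted(survivors, key=lambda vol: vol.free_gib, reverse=True):
        moved = min(v.free_gib, remaining)
        v.used_gib += moved
        remaining -= moved
        if remaining <= 0:
            break
    return survivors
```

The free-space check runs before any data is moved, so in this model a volume is only removed when the rest of the pool can absorb everything it holds.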

By dynamically adjusting the storage volume without causing downtime, Zesty Disk provides uninterrupted application availability. Businesses can avoid costly disruptions and maintain continuous operations while efficiently managing their storage resources.

Improving IO/s performance

Decoupling the filesystem into multiple smaller volumes, each with its own dedicated allocation of IO/s, boosts performance and speed and enhances the user experience.

Traditionally, a single large volume would have a limited number of IO/s allocated to it, but Zesty Disk breaks this limitation by splitting the volume into multiple smaller volumes. With each smaller volume having its own allocation of IO/s, the total IO/s capacity increases proportionately. This means the application can benefit from a higher level of concurrent I/O operations, resulting in improved performance and responsiveness.

Moreover, this approach allows Zesty Disk to optimize throughput performance. By distributing the workload across multiple smaller volumes, the overall throughput capacity is increased, enabling faster data transfer rates and more efficient data processing.

By decoupling large filesystem volumes into multiple smaller volumes with dedicated IO/s allocations, Zesty Disk makes fuller use of the underlying storage infrastructure, delivering improvements in both IO/s and throughput performance. This translates to a better user experience, with applications able to operate at peak performance while efficiently utilizing available resources.
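A worked example shows how per-volume allocations add up. The sketch assumes gp3 volumes at their baseline performance (3,000 IO/s and 125 MiB/s per volume, independent of size); the volume type is an assumption, since the post does not name one, and the function name and the optional instance-level caps are hypothetical parameters added for illustration.

```python
GP3_BASELINE_IOPS = 3000             # baseline IO/s of a single gp3 volume
GP3_BASELINE_THROUGHPUT_MIBPS = 125  # baseline throughput of a single gp3 volume


def aggregate_baseline(volume_count, instance_iops_limit=None,
                       instance_throughput_limit=None):
    """Combined baseline (IO/s, MiB/s) of `volume_count` gp3 volumes.

    The optional limits model the per-instance EBS ceilings, which cap the
    total no matter how many volumes are attached.
    """
    if volume_count < 1:
        raise ValueError("volume_count must be at least 1")
    iops = volume_count * GP3_BASELINE_IOPS
    throughput = volume_count * GP3_BASELINE_THROUGHPUT_MIBPS
    if instance_iops_limit is not None:
        iops = min(iops, instance_iops_limit)
    if instance_throughput_limit is not None:
        throughput = min(throughput, instance_throughput_limit)
    return iops, throughput
```

Under these assumptions a single volume provides 3,000 IO/s and 125 MiB/s, while four volumes provide 12,000 IO/s and 500 MiB/s, provided the instance's own EBS limits are not reached first.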

Zesty Disk under the hood

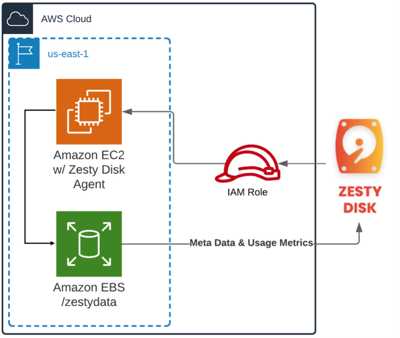

The solution works by creating a virtual disk composed of several small storage volumes. It utilizes native AWS block storage devices, allowing users to maintain their existing tools, procedures, and SLAs. Users retain ownership and exclusive access to their data.

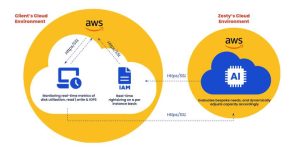

Figure 1 – Deployment architecture of Zesty Disk.

Step-by-step instructions to install Zesty Disk on an Amazon EC2 instance are available on the Zesty website.

Zesty Disk continuously monitors usage metrics, including capacity, IO/s, and read/write throughput, as well as instance and disk metadata.

Figure 2 – Dashboard showing changing disk capacity and average RW IO/s

This data is securely sent to Zesty’s backend for processing. An AI model analyzes the metrics to generate a behavioral profile of the instance volume, predicting usage patterns and fluctuations.
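To make the monitoring and prediction steps concrete, here is a small sketch using only the Python standard library. It is not how Zesty's agent or AI model works: `sample_capacity` reads capacity with `shutil.disk_usage`, and `forecast_used` is a deliberately naive linear extrapolation standing in for the behavioral model. Both function names and the sample format are assumptions.

```python
import shutil
import time


def sample_capacity(path="/"):
    """Collect one capacity sample for the file system containing `path`."""
    usage = shutil.disk_usage(path)
    return {
        "timestamp": time.time(),
        "total_bytes": usage.total,
        "used_bytes": usage.used,
        "used_fraction": usage.used / usage.total if usage.total else 0.0,
    }


def forecast_used(samples, horizon_seconds):
    """Extrapolate usage linearly from the first and last (timestamp, used) pair.

    A naive stand-in for a real predictive model: it ignores seasonality,
    bursts, and everything between the two end points.
    """
    (t0, u0), (t1, u1) = samples[0], samples[-1]
    if t1 <= t0:
        raise ValueError("samples must span a positive time interval")
    rate = (u1 - u0) / (t1 - t0)  # growth per second
    return u1 + rate * horizon_seconds
```

If usage grew from 100 to 120 units over 10 seconds, `forecast_used([(0, 100), (10, 120)], 5)` projects 130 units five seconds later.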

Figure 3 – Zesty Disk’s operation in the client’s cloud environment

When a capacity change is required, Zesty’s backend issues API commands to the cloud provider, triggering the appropriate action. An update request is then sent to the Zesty Disk handler on the instance to adjust the capacity accordingly.

The filesystems on the disk are serialized, replacing a large volume with multiple smaller volumes. Disk eviction can occur by transferring data from a smaller volume to other disks before removing it.

Figure 4 – Defragmentation of the disk enables increase/decrease in filesystem size.

Customer success story: Securonix

As a security information and event management (SIEM) solution that detects advanced threats using innovative machine learning algorithms, Securonix collects massive amounts of data in real time.

Because of the high volume of data, Securonix frequently had to allocate terabytes of disk storage per Amazon EC2 instance. However, until the data ingested and produced by its analytics engine filled the available capacity, Securonix was paying for block storage it wasn’t using.

Securonix uses Amazon Elastic MapReduce (Amazon EMR) to run HBase (Hadoop database) and Spark, and adopted Zesty Disk (ZD) as a seamless service to ensure storage persistence when high data usage increases demand on the disks backing the database.

A custom Amazon Machine Image (AMI) was developed that uses the ZD filesystem (Amazon Linux 2 with ZD). Splitting the storage into small volumes is what gives the volume its elasticity: it grows as data is ingested and shrinks as data is removed when a snapshot is taken. In this process, all native tools, procedures, and SLAs remain unchanged, and Securonix remains the owner of its data and the only party with control over it.

After running its EMR clusters with EBS volumes fully managed by ZD, Securonix was pleased with the seamless service availability even in the case of large data ingestion peaks.

Operationally, ZD avoids the hassle of reallocating storage across instances and eliminates on-call developer tasks related to maintaining EBS. With a net capacity utilization of 40% on its previously provisioned storage, and with provisioned capacity now shrinking to fit, Securonix saves 66% on its earlier EBS cost.

“Prior to implementing Zesty Disk, we were seeing an average storage utilization of less than 35%. This was difficult to optimize due to the potential to run out of storage and cause a production outage,” says Derrick Harcey, chief architect, Securonix. “Once we implemented Zesty Disk, we are able to maintain more than 75% EBS storage utilization to significantly reduce storage costs. In addition to reducing storage costs, the performance of our EBS volumes has increased significantly due to the inherent parallelism introduced with virtual disks with multiple underlying EBS volumes.”
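The utilization figures in the quote can be turned into a rough estimate of how much provisioned capacity shrinks. This is a simplified model, not Securonix's actual accounting: it assumes the amount of stored data stays constant and that cost is proportional to provisioned GiB, and the function name is hypothetical.

```python
def provisioned_reduction(utilization_before, utilization_after):
    """Fractional drop in provisioned capacity when the same data is stored
    at a higher utilization (provisioned capacity = data / utilization)."""
    if not 0 < utilization_before <= 1 or not 0 < utilization_after <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return 1 - utilization_before / utilization_after


# Moving from 35% to 75% utilization for the same data:
reduction = provisioned_reduction(0.35, 0.75)
print(f"Provisioned capacity falls by about {reduction:.0%}")
```

Because the quote gives "less than 35%" before and "more than 75%" after, this figure of roughly 53% is a lower bound under the model's assumptions, which is consistent in direction with the reported 66% saving.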

Conclusion

ZD offers a solution to maximize the value derived from Amazon EBS. With the ability to decouple large filesystem volumes into smaller volumes, organizations are able to experience improved performance and cost efficiency, achieving more with their existing storage resources.

The solution’s auto-scaling technology eliminates the need for manual adjustments, streamlining operations and reducing the cognitive load for developers. Overall, ZD provides a tool for organizations to enhance their storage infrastructure, ensure cost-effectiveness and operational efficiency, and increase the value of their EBS investments.

“Spearheading this initiative was made easy by the amazing team that we worked with at Securonix,” says Uri Naiman, sales engineering team lead, Zesty. “We were able to show significant cost savings, whilst ensuring there was no negative impact to either performance or to their application.”

Resources:

More about Zesty Disk and request a demo.

Explore Zesty products in AWS Marketplace.
