Hammerspace Reference Architecture for Large Language Model Training

Within hyperscale environments

This is a Press Release edited by StorageNewsletter.com on November 22, 2023 at 2:01 pm.

Hammerspace, Inc. released the data architecture being used for training and inference of Large Language Models (LLMs) within hyperscale environments.

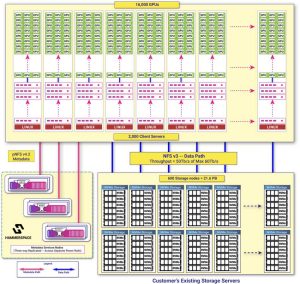

Hyperscale LLM Training Architecture with Hammerspace

According to the company, this architecture is the only solution that enables AI technologists to design a unified data architecture delivering the performance of a supercomputing-class parallel file system together with the ease of standard NFS access for applications and researchers.

For AI strategies to succeed, organizations need the ability to scale to a massive number of GPUs, as well as the flexibility to access local and distributed data silos. They also need to leverage data regardless of the hardware or cloud infrastructure on which it currently resides, along with the security controls to uphold data governance policies. These requirements are especially demanding in LLM development, which often involves hundreds of billions of parameters, tens of thousands of GPUs, and hundreds of petabytes of diverse unstructured data.

The company's announcement unveils the architecture it describes as proven, delivering the performance, ease of deployment, and standards-based software and hardware support needed for LLM data pipelines and data storage.

Hammerspace high-performance file system

AI architects and technologists may need to take advantage of existing networks, storage hardware, and compute clusters while strategically adding new infrastructure as their AI operations grow.

The company unifies the entire data pipeline into a single, parallel global file system that integrates existing infrastructure and data with new datasets and resources as they are added.

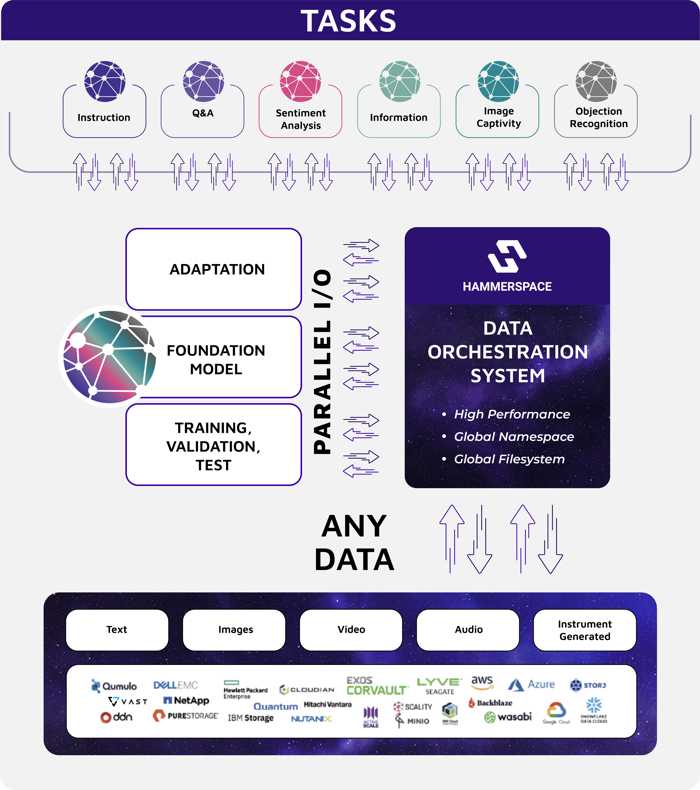

Orchestrated Data Pipelines with Hammerspace

The parallel file system architecture is critical for AI training because large numbers of processes or nodes need to access the same data simultaneously. The firm says its system delivers efficient, concurrent data access, reduces workflow bottlenecks, and improves overall utilization of client servers, GPUs, the network, and data storage nodes.
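The sketch below is not Hammerspace code. It only illustrates, on a POSIX system, the access pattern a parallel file system is built to serve: many workers reading different byte ranges of the same file at once through the ordinary file API. The names `read_range` and `parallel_read` are hypothetical; on an NFS mount the same calls would simply go through the kernel's NFS client.

```python
import os
import tempfile
from concurrent.futures import ThreadPoolExecutor


def read_range(path: str, offset: int, length: int) -> bytes:
    """Read one byte range with positional I/O, so workers never share a file offset."""
    fd = os.open(path, os.O_RDONLY)
    try:
        buf = bytearray()
        # pread may return fewer bytes than requested, so loop until done or EOF
        while len(buf) < length:
            piece = os.pread(fd, length - len(buf), offset + len(buf))
            if not piece:
                break
            buf += piece
        return bytes(buf)
    finally:
        os.close(fd)


def parallel_read(path: str, workers: int = 4) -> bytes:
    """Split a file into contiguous ranges, read them concurrently, reassemble in order."""
    size = os.path.getsize(path)
    if size == 0:
        return b""
    chunk = -(-size // workers)  # ceiling division, so the ranges cover every byte
    ranges = [(off, min(chunk, size - off)) for off in range(0, size, chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map yields results in input order, so joining restores the file
        return b"".join(pool.map(lambda r: read_range(path, *r), ranges))


if __name__ == "__main__":
    payload = bytes(range(256)) * 40  # 10,240 bytes of demo data
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(payload)
    try:
        print(parallel_read(tmp.name, workers=3) == payload)
    finally:
        os.remove(tmp.name)
```

Positional reads (`os.pread`) are used so that concurrent workers do not contend over a shared file offset; `os.pread` is available on Unix-like systems only.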

- Hammerspace Standards-Based Software Approach

The firm's parallel file system client is the NFS 4.2 client built into Linux, leveraging Hammerspace's contribution of FlexFiles (the pNFS Flexible File layout) to the Linux kernel. This approach uniquely enables existing standard Linux client servers to achieve direct, high-performance access to data via Hammerspace's software. Using a standard NAS interface lets researchers and applications access data over the widely adopted NFS protocol, and lets organizations tap into the larger user and vendor communities that troubleshoot, update, and improve this standards-based environment.
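Because the client is the stock Linux NFS client, one quick sanity check on a compute node is to confirm that its mounts negotiated NFS 4.2. The helper below is a hypothetical sketch that parses the kernel's `/proc/mounts` table, where NFSv4.x mounts appear with file system type `nfs4` and a `vers=` option. It confirms only the protocol version; it does not show whether the server is actually handing out Flex Files layouts.

```python
def nfs4_mounts(mounts_text: str) -> list[dict]:
    """Return NFSv4.x entries from text in /proc/mounts format."""
    entries = []
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) < 4 or fields[2] != "nfs4":
            continue  # skip malformed lines and non-NFSv4 file systems
        source, target, _fstype, options = fields[:4]
        # "vers=4.2" -> ("vers", "4.2"); bare flags such as "rw" -> ("rw", "")
        opts = dict(opt.partition("=")[::2] for opt in options.split(","))
        entries.append({"source": source, "target": target, "vers": opts.get("vers")})
    return entries


def is_nfs42(entry: dict) -> bool:
    """True if the mount negotiated NFS version 4.2."""
    return entry.get("vers") == "4.2"


if __name__ == "__main__":
    # On a Linux client, inspect the live mount table.
    try:
        with open("/proc/mounts") as f:
            for e in nfs4_mounts(f.read()):
                print(e["target"], e["vers"], "ok" if is_nfs42(e) else "not 4.2")
    except FileNotFoundError:
        print("no /proc/mounts on this system")
```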

- Hammerspace on Commodity Hardware

The company provides a software-defined data platform compatible with any standards-based hardware, such as white box Linux servers, Open Compute Project (OCP) hardware, and Supermicro systems. This lets organizations make better use of their existing hardware investment and benefit from cost-effective infrastructure at scale.

- Hammerspace Streamlined Data Pipelines

The company's architecture creates a unified, high-performance global data environment that supports concurrent and continuous execution of all phases of LLM training and inference workloads. It is unique in its ability to break down data silos, accessing training data scattered across diverse data center and cloud storage systems from any vendor or location. By leveraging training data wherever it is stored, the vendor streamlines AI workloads and minimizes the need to copy and move files into a new consolidated repository. This reduces overhead, as well as the risk of introducing errors and inaccuracies into LLMs. At the application level, data is accessed through a standard NFS file interface, giving applications direct access to files in the standard format they are typically designed for.
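From the application's side, using training data where it already lives can be as simple as building a file list across mount points instead of staging copies. The sketch below assumes the different sources are already visible as directories under one file namespace; the mount paths and file suffixes are hypothetical, and this is generic Python rather than a Hammerspace API.

```python
import os
import tempfile


def build_manifest(roots: list[str], suffixes: tuple[str, ...] = (".jsonl", ".parquet")) -> list[str]:
    """List training files under several mount points in place; nothing is copied."""
    manifest = []
    for root in roots:
        for dirpath, _dirnames, filenames in os.walk(root):
            for name in filenames:
                if name.endswith(suffixes):
                    manifest.append(os.path.join(dirpath, name))
    return sorted(manifest)  # deterministic order for reproducible epochs


if __name__ == "__main__":
    # Hypothetical roots such as /mnt/hs/datacenter-a and /mnt/hs/cloud-b would go here;
    # temporary directories stand in for them in this demo.
    with tempfile.TemporaryDirectory() as a, tempfile.TemporaryDirectory() as b:
        for d, name in [(a, "part-0.jsonl"), (b, "part-1.parquet"), (b, "notes.txt")]:
            open(os.path.join(d, name), "w").close()
        for path in build_manifest([a, b]):
            print(path)
```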

- Hammerspace high-speed data path

The solution reduces network transmissions and data hops at every possible point in the data path. According to the company, this approach achieves near 100% utilization of the available infrastructure while delivering a streamlined, high-bandwidth, low-latency data path between applications, compute, and storage nodes. More detail about the innovation and its benefits can be found in the IEEE article "Overcoming Performance Bottlenecks With a Network File System in Solid State Drives" by David Flynn and Thomas Coughlin.

- Fault-tolerant design

LLM environments are massive, complex systems with extensive power and infrastructure requirements. These AI systems often rely on continuously updating models with new data. Hammerspace is designed to keep operating at peak performance through a system outage, allowing AI technologists to focus less on recovering from power, network, or system failures and more on persisting through them.

- Objective-based data placement

The firm's software decouples the file system layer from the storage layer, enabling I/O throughput and IOPS to scale independently at the data layer. High-performance NVMe storage can coexist with lower-cost, lower-performing, and geographically distributed storage tiers, including the cloud, in a global data environment. Data orchestration between tiers and/or locations runs transparently in the background, driven by objective-based policies. These software objectives automate placement so that data sits on nodes delivering the required performance while it is in use. When not in use, data can remain on high-performance storage nodes or be moved automatically to a more efficient location to reduce the cost of storing inactive data. This approach keeps data available to saturate GPUs and network capacity when needed.
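The release does not describe Hammerspace's actual objective syntax. As a hedged illustration of the general idea only, the sketch below models an objective as a rule over file metadata that names a target tier, then computes the moves a background process would need to make. The tier names, thresholds, and every class and function name here are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

HOUR = 3600.0
DAY = 24 * HOUR


@dataclass
class FileInfo:
    path: str
    idle_seconds: float                      # time since last access
    tags: set[str] = field(default_factory=set)


@dataclass
class Objective:
    name: str
    applies: Callable[[FileInfo], bool]      # predicate over file metadata
    tier: str                                # where matching files should live


def place(f: FileInfo, objectives: list[Objective], default_tier: str) -> str:
    """The first matching objective wins; otherwise fall back to the default tier."""
    for obj in objectives:
        if obj.applies(f):
            return obj.tier
    return default_tier


def plan_moves(files, current: dict[str, str], objectives, default_tier):
    """Compare desired vs. current tier; the result is work for a background mover."""
    moves = []
    for f in files:
        target = place(f, objectives, default_tier)
        if current.get(f.path) != target:
            moves.append((f.path, current.get(f.path), target))
    return moves


# Hypothetical objectives: keep active training data on local NVMe, push cold data to cloud.
OBJECTIVES = [
    Objective("hot-training-data", lambda f: "training" in f.tags and f.idle_seconds < DAY, "nvme-local"),
    Objective("cold-archive", lambda f: f.idle_seconds > 30 * DAY, "cloud-object"),
]

if __name__ == "__main__":
    files = [
        FileInfo("/data/shard-001", HOUR, {"training"}),
        FileInfo("/data/2022-logs", 60 * DAY),
        FileInfo("/data/eval-set", 2 * DAY, {"training"}),
    ]
    current = {"/data/shard-001": "capacity-nas", "/data/2022-logs": "cloud-object",
               "/data/eval-set": "capacity-nas"}
    for move in plan_moves(files, current, OBJECTIVES, "capacity-nas"):
        print(move)
```

Separating "where should this file be" (`place`) from "what has to move" (`plan_moves`) mirrors the release's point that orchestration runs as a background operation: the mover only acts on the difference between desired and current placement.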

Integrated ML capabilities within the firm's architecture will begin placing related datasets in high-performance, local NVMe storage when the first file from a dataset is accessed.
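The release attributes the grouping of related datasets to integrated ML but does not say how it works. The sketch below substitutes a plain, precomputed path-to-dataset mapping for that model, so it shows only the trigger logic: on the first access to any file in a dataset, queue the rest of that dataset for promotion to a fast tier. All names are hypothetical.

```python
from collections import defaultdict


class DatasetPrefetcher:
    """Hypothetical sketch: the first open of any file in a dataset queues its
    sibling files for promotion to a fast (e.g. local NVMe) tier."""

    def __init__(self, dataset_of: dict[str, str]):
        self.dataset_of = dataset_of                      # file path -> dataset id
        self.members: dict[str, list[str]] = defaultdict(list)
        for path, ds in dataset_of.items():
            self.members[ds].append(path)
        self.triggered: set[str] = set()                  # datasets already handled
        self.promotion_queue: list[str] = []              # drained by a background mover

    def on_open(self, path: str) -> None:
        ds = self.dataset_of.get(path)
        if ds is None or ds in self.triggered:
            return  # unknown file, or this dataset was already queued
        self.triggered.add(ds)
        self.promotion_queue.extend(p for p in sorted(self.members[ds]) if p != path)


if __name__ == "__main__":
    pf = DatasetPrefetcher({"/ds1/a": "ds1", "/ds1/b": "ds1", "/ds2/x": "ds2"})
    pf.on_open("/ds1/b")
    print(pf.promotion_queue)
```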

“The most powerful AI initiatives will incorporate data from everywhere,” said David Flynn, founder and CEO. “A high-performance data environment is critical to the success of initial AI model training. But even more important, it provides the ability to orchestrate the data from multiple sources for continuous learning. Hammerspace has set the gold standard for AI architectures at scale.”
