SC23: Availability of Kalray NG-Box NVMe Storage Solution for Data-Intensive and AI Applications

Based on firm's DPU cards and Dell PowerEdge servers, offers fast and scalable on-premises storage for demanding data-intensive and AI workflows.

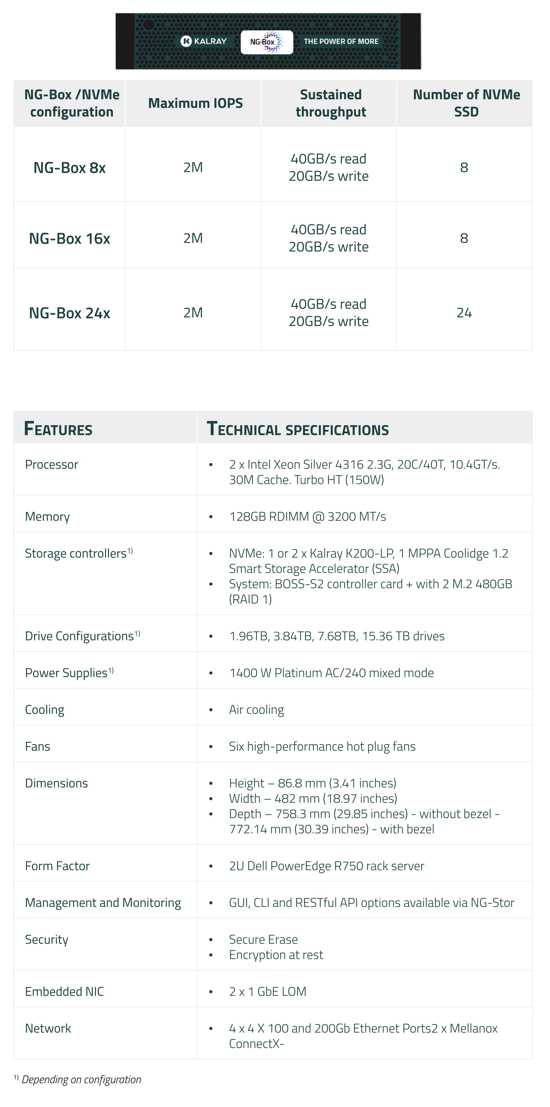

This is a Press Release edited by StorageNewsletter.com on November 20, 2023 at 2:02 pm

Kalray, Inc. announced the NG-Box, a disaggregated NVMe storage array based on Dell PowerEdge servers combined with Kalray DPU-based storage acceleration cards.

NG-Box is designed to excel at unstructured data workloads and to offer reliable, fast, automated, and scalable on-premises Tier-0 storage for the most demanding data-intensive workflows, which are increasingly AI-focused.

The company is showcasing the NG-Box at SuperComputing 2023 (SC23). The NG-Box delivers over 80GB/s per server. Industry benchmarks such as IOzone and fio show doubled performance compared to non-DPU-accelerated versions of the server, along with significantly reduced transaction latency. In addition, NG-Box eases adoption of the NVMe over Fabrics (NVMe-oF) standard, positioning it as a strong solution for AI and data-intensive usage.

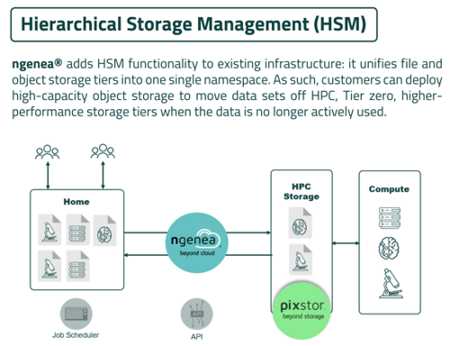

NG-Box is part of the firm’s NGenea data management platform, which also includes NG-Stor and NG-Hub. NG-Stor is a high-performance storage tier for the most data-intensive workloads, powered by a proven high-performance parallel file system that is trusted by thousands of organizations worldwide. NG-Stor can manage petabytes of data and billions of files. NG-Hub is an easy-to-use web interface that allows centralized control of all storage within a global namespace. Together, the NGenea product suite offers organizations a global data management and storage platform specialized for data-intensive and AI workloads.

At SC23, the company will demonstrate how its differentiated data management and storage capabilities help organizations efficiently manage data across all stages of data-intensive and AI pipelines, improving speed and easing deployment.

Additionally, the firm joined Dell Technologies at SC23 on November 14 to discuss data management for HPC and AI workloads. Together, the two companies provide industry solutions that address today’s most acute challenges in this space.

The NGenea solution, including NG-Box, is available through both Kalray and Dell Technologies. The company is a Dell Technologies partner and combines its solutions with Dell servers, storage, and networking to create industry-value propositions that address the challenges of data-intensive workloads.
