Micron 128GB DDR5 RDIMM Memory Using Monolithic 32Gb DRAM

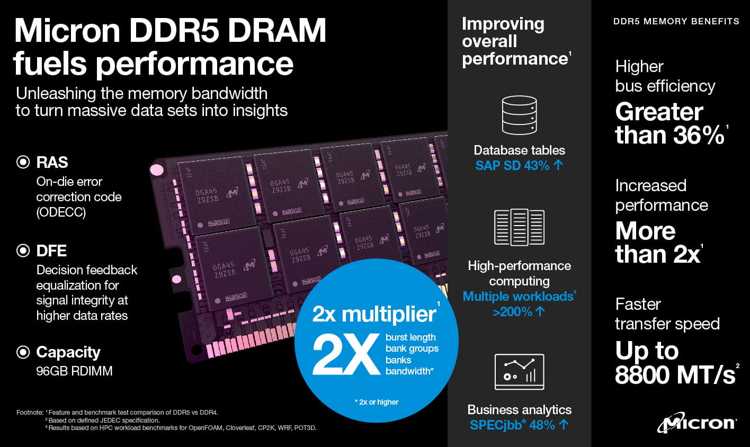

1β technology-based DRAM with speeds up to 8,000MT/s provides improved solutions for memory-intensive applications like generative AI.

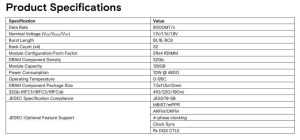

This is a Press Release edited by StorageNewsletter.com on November 20, 2023 at 2:01 pm

Micron Technology, Inc. announced its 32Gb monolithic die-based 128GB DDR5 RDIMM memory featuring performance of up to 8,000MT/s (1) to support data center workloads today and into the future.

These high-capacity, fast memory modules are engineered to meet the performance and data-handling needs of a range of mission-critical applications in data center and cloud environments, including AI, in-memory databases (IMDBs) and processing for multithreaded, multicore general compute workloads.

Powered by the company’s 1β (1-beta) technology, the 32Gb DDR5 DRAM die-based 128GB DDR5 RDIMM memory delivers the following enhancements over competitive 3DS through-silicon via (TSV) products:

- more than 45% improved bit density
- up to 24% improved energy efficiency
- up to 16% lower latency (2)
- up to a 28% improvement in AI training performance (3)
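The comparison with stacked 3DS TSV parts comes down to how many DRAM dies a 128GB module needs. A minimal sketch of that arithmetic follows. It counts data capacity only and ignores the extra dies an RDIMM carries for ECC. The function name and the 16Gb comparison point are illustrative assumptions, not figures from the announcement.

```python
def data_dies_needed(module_gbytes: int, die_gbits: int) -> int:
    """Dies required to hold the module's data capacity (ECC dies excluded)."""
    total_gbits = module_gbytes * 8          # 1 byte = 8 bits
    return -(-total_gbits // die_gbits)      # ceiling division


if __name__ == "__main__":
    # 16Gb is an assumed comparison density; 32Gb is the die in the announcement.
    for die_gbits in (16, 32):
        count = data_dies_needed(128, die_gbits)
        print(f"{die_gbits}Gb dies for 128GB of data: {count}")
```

Under these assumptions, doubling the die density halves the number of data dies per module, which is why a monolithic 32Gb die can reach 128GB without die stacking.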

“We are proud to set a new standard for high-capacity, high-speed memory in the data center with Micron’s 128GB DDR5 RDIMMs, which deliver the memory bandwidth and capacity required for increasingly compute-intensive workloads,” said Praveen Vaidyanathan, VP and GM, compute products group. “Micron continues to enable improvements to the data center ecosystem with early access to our advanced technologies and support in the design and integration of leading-edge high-capacity memory solutions.”

The firm’s 32Gb DDR5 memory solution uses innovative die architecture choices for leading array efficiency and the densest monolithic DRAM die. Voltage domain and refresh management features help optimize the power delivery network providing much-needed energy efficiency improvements. Additionally, the die-dimension aspect ratio was optimized to advance the manufacturing efficiency of the 32Gb high-capacity DRAM die.

By leveraging AI-powered smart manufacturing methods to enable these world-class innovations, Micron’s 1β process technology node has achieved yield maturity in the fastest time in the company’s history (4). The company’s 128GB RDIMMs will be shipping in platforms capable of 4,800MT/s, 5,600MT/s, and 6,400MT/s in 2024 and designed into future platforms capable of up to 8,000MT/s.
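To put the quoted transfer rates in terms of bandwidth, the sketch below converts MT/s into theoretical peak bandwidth per module. It assumes the standard 64-bit DDR5 DIMM data width (two 32-bit subchannels, ECC bits excluded). The results are upper bounds, not measured throughput, and the function name is illustrative.

```python
DATA_BUS_BITS = 64  # assumption: two 32-bit DDR5 subchannels, ECC bits excluded


def peak_bandwidth_gb_per_s(mega_transfers_per_s: int,
                            bus_bits: int = DATA_BUS_BITS) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes) for one module."""
    bytes_per_transfer = bus_bits / 8
    return mega_transfers_per_s * bytes_per_transfer / 1000


if __name__ == "__main__":
    # Transfer rates named in the announcement.
    for rate in (4800, 5600, 6400, 8000):
        print(f"{rate} MT/s: {peak_bandwidth_gb_per_s(rate):.1f} GB/s peak")
```

Under these assumptions, a module running at 8,000MT/s tops out at 64 GB/s, compared with 38.4 GB/s at 4,800MT/s.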

“Our latest 4th gen AMD EPYC processors will benefit from optimized memory capacity per core with Micron’s 128GB RDIMMs, which use 32Gb monolithic DRAM to provide an improved total cost of ownership solution for business-critical data enterprise workloads, such as AI, high-performance computing and virtualization,” said Dan McNamara, senior VP and GM, server business unit, AMD (Advanced Micro Devices, Inc.). “As AMD advances compute with our next-gen EPYC processors, Micron’s 128GB RDIMMs will likely become one of the main memory options to deliver high-capacity and bandwidth per core capabilities to address the demands of memory-intensive applications.”

“We look forward to Micron’s 32Gb-based 128GB RDIMM for the bandwidth and performance-per-watt solution benefits available in the server and AI systems market. Intel is evaluating this 32Gb memory offering for key DDR5 server platforms based on the resulting total cost of ownership benefits to cloud, AI and enterprise customers,” said Dr. Dimitrios Ziakas, VP, memory and IO technologies, Intel Corp.

The company’s 32Gb DRAM die enables future expansion of the memory portfolio with enhanced bandwidth and energy-efficient MCRDIMM and JEDEC-standard MRDIMM products in 128GB, 256GB and higher-capacity solutions. With process and design technology innovations, the firm offers an array of memory options across RDIMMs, MCRDIMMs, MRDIMMs, CXL and LP form factors to allow customers to integrate optimized solutions for AI and HPC applications that suit their needs for bandwidth, capacity and power optimization.

Resources:

Video: Micron DDR5: Offering More Than 2x the Effective Bandwidth

Video: Micron DDR5: Next-Gen Memory Transforming Data Into Insight

Blog: Redefining Performance With DDR5 and 4th gen Intel Xeon Scalable Processors

(1) Based on public announcements, competitor websites and internal Micron design simulations

(2) Based on competitive data sheets and JEDEC specs

(3) Based on internal Micron data center workload testing results

(4) Comparison and improvement between Micron 1β and 1α line maturity
