SC23: DDN Infinia Next-Gen SDS for Enterprise AI and Cloud Needs Everywhere and All at Once

Designed to maximize value in complex workflows with multi-tenancy

This is a Press Release edited by StorageNewsletter.com on November 10, 2023 at 2:02 pm

DDN (DataDirect Networks Inc.) announced Infinia, a next-gen SDS platform that leverages two decades of the firm’s engineering in file systems, data orchestration and AI-based optimization, all coming together to usher in the era of accelerated computing and generative AI.

Infinia combines multi-tenancy at scale, containerization and the highest levels of speed and efficiency customers have come to expect from the company’s systems, with ease of management and powerful security attributes. The novel architecture accelerates and simplifies workflows for the data management demands of today and tomorrow, from generative AI and large language models (LLM) to versatile and complex movements of workflows from the edge to data centers and the cloud.

Burgeoning AI Data Challenges

Enterprises face managing diverse data sources at multiple sites – on-premises, at the edge and in multiple cloud environments – as well as different systems for varying applications and shared access to data sources. Data is created at the edge and must be moved between the edge and the data center to be actionable. The rising demand for distributed unstructured data requires metadata tagging to ensure it moves swiftly, efficiently and securely.

Additional challenges, including increasing electricity costs and a lack of data center real estate, mean enterprises must optimize and fully utilize all resources with storage operating as efficiently as possible. Infinia removes hardware dependencies with built-in core features to answer the requirements for secure enterprise-wide AI data management.

“Data management has become terribly complex, and the market has been clamoring for a new paradigm which solves the challenges of explosive AI data growth in a fully secure, multi-tenant, power efficient and cost-effective way,” said Dr. James Coomer, SVP products. “Enterprises need to aggregate varied data types collected by many sensors and sources over distributed locations and ensure that the resulting data is governable, secure and available for business and data processing needs. Infinia was built from the ground up to deliver to these needs.”

Infinia Leapfrog Architecture Designed for New Demands

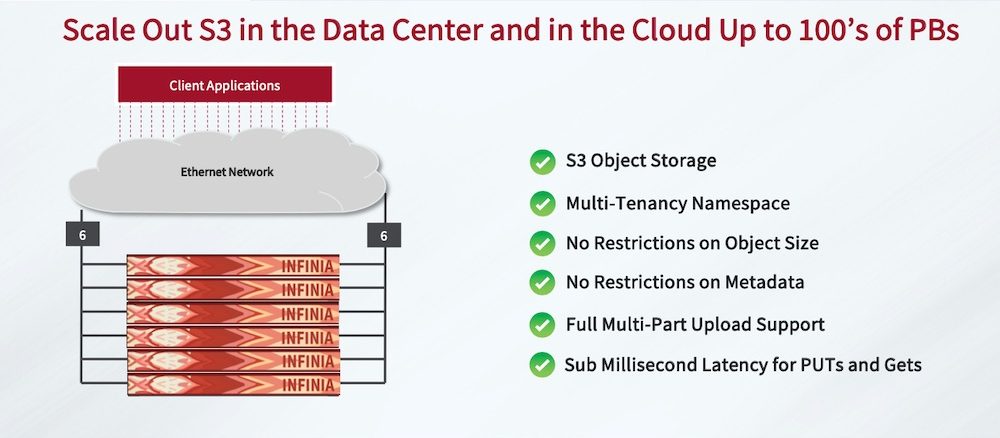

It fulfills data management requirements with a new software-defined platform that delivers high performance and is simple to deploy and scale. It is initially available for Amazon S3 object storage environments, and its core features include:

- Hyper Simplicity: Auto-configuring installation – 10 minutes to deploy; zero downtime via rolling upgrades and elastic expansion; hands-free storage allocation by business policy; multi-tenant self-service

- Simple Data Provisioning: Dynamic data engine creates new tenant in one command or one click; sets capacity boundaries and performance shares; fully provisioned within seconds

- Multi-Tenancy and QoS: 100% software-based native SLA manager; automates distributed keyspace securely for maximum resource sharing and highest efficiency

- Governance and Control: Combines fast and scalable metadata management with scalable storage; reduces complexity and duplication of data and metadata; tracks model training, tag and search, end-to-end model and governance

Energy Efficient, High-Density Appliance

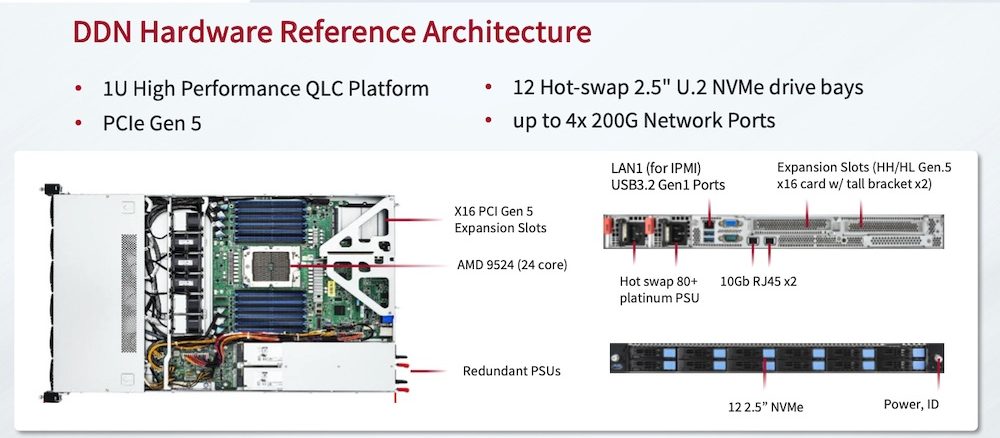

The company is also launching an appliance designed to maximize the benefits of the Infinia SDS platform. The appliance is a 1U, all-QLC flash system with 12 hot-swap 2.5″ U.2 NVMe drive bays and up to 4x200Gb/s network ports. Infinia scales out by adding servers to meet workload and performance demands.

The vendor will also support a range of processors and hardware architectures for completely software-defined deployment in future releases.

Infinia is also available for deployment on Google Cloud, with additional cloud support coming in the future.

Supporting Resources:

– DDN Infinia’s S3 object interface

– Advantages of DDN Infinia’s multi-tenancy

– DDN Infinia Data Sheet

Comments

This is major news from DDN, well known for its high-performance storage solutions, in block and file storage, dedicated to HPC for more than 2 decades and more recently to AI. With Infinia, the firm clearly comes back to object storage with a big and fast product dedicated to new workloads. It developed and promoted an object storage product in the past named WOS; later the team introduced S3 options with Nexenta and ExaScaler.

Started 8 years ago, this approach was designed from the outset with flash in mind, which is not the case for almost all other products in the object storage category, even WOS, which was conceived in a period dominated by HDDs. This explains why object storage has essentially been deployed for secondary storage needs or less intensive workloads.

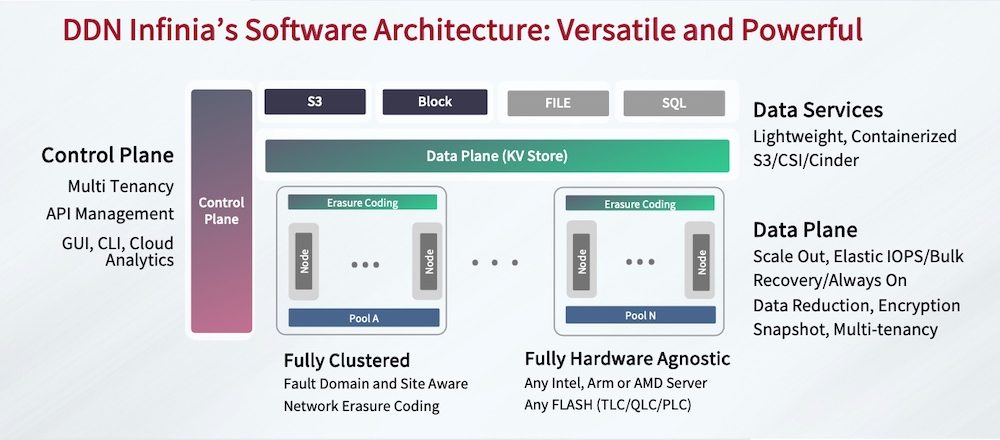

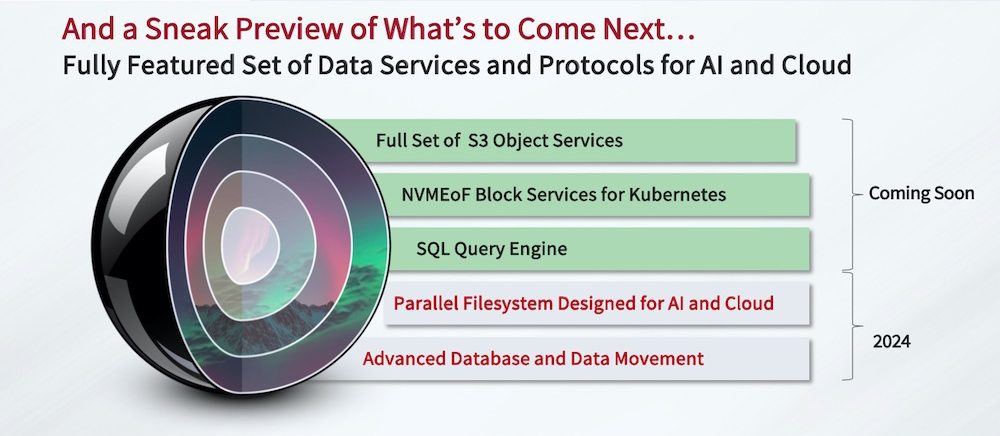

With Infinia, the vendor targets highly demanding enterprise workloads in the core, cloud and edge. The platform is multi-tenant and offers several interfaces: S3, NVMe-oF block for CSI and a SQL query engine.

Reading this news, we have to understand that Infinia is not a replacement for the Lustre-based ExaScaler built for very large HPC environments, even if the latter offers an S3 interface as well. Clearly data is created in various places in different formats.

James Coomer, SVP products, DDN, confirmed that the team didn’t consider any open source modules, having designed and developed a radically new data platform. He insisted that there are no specific hardware dependencies, such as SCM (Storage Class Memory) like Optane or similar elements.

The first product iteration will be delivered as a bundle of hardware and software, a model often seen elsewhere that helps align requirements and sustain performance levels. The current hardware platform is a 1U chassis equipped with AMD 9524 CPUs (24 cores), 12 hot-swap 2.5” U.2 NVMe QLC flash SSDs on PCIe Gen4 and 4x200Gb/s network ports. DDN picked Solidigm 60TB SSDs, 60TB being the current maximum, giving 720TB raw per chassis. With a clear wish to be hardware agnostic, future product iterations will be offered as software only, by subscription, with support for Intel, AMD and ARM processors and TLC and PLC flash devices. Around the end of 2024, Infinia will offer file-based interfaces with industry-standard file sharing protocols and also a parallel file system software client. At that time, this client software will run in user space and be responsible for erasure coding. This is not a surprise, as data distribution in a parallel file system relies on clients sending data chunks to all participating back-end data servers. Replication between clusters will also arrive in 2024 in two modes, asynchronous and synchronous. Other data services such as data reduction, encryption and snapshots will also be added.
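To illustrate the general idea of a client that splits data into chunks and computes redundancy before sending it to back-end servers, here is a minimal sketch using single XOR parity. This is not Infinia's erasure code, which DDN has not detailed; the function names `stripe_with_parity` and `rebuild` are hypothetical, and a production scheme would tolerate more than one lost chunk.

```python
from functools import reduce
from typing import List, Optional


def _xor_columns(chunks: List[bytes]) -> bytes:
    """XOR equally sized chunks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, col) for col in zip(*chunks))


def stripe_with_parity(data: bytes, n_data: int) -> List[bytes]:
    """Split data into n_data zero-padded chunks plus one XOR parity chunk.

    Illustrative assumption: each returned chunk would be sent by the
    client to a different back-end server.
    """
    chunk_len = -(-len(data) // n_data)  # ceiling division
    padded = data.ljust(chunk_len * n_data, b"\0")
    chunks = [padded[i * chunk_len:(i + 1) * chunk_len] for i in range(n_data)]
    return chunks + [_xor_columns(chunks)]


def rebuild(chunks: List[Optional[bytes]]) -> List[bytes]:
    """Recover at most one missing chunk (None) by XOR-ing the survivors."""
    missing = [i for i, c in enumerate(chunks) if c is None]
    if len(missing) > 1:
        raise ValueError("single parity can only repair one missing chunk")
    repaired = list(chunks)
    if missing:
        survivors = [c for c in chunks if c is not None]
        repaired[missing[0]] = _xor_columns(survivors)
    return repaired
```

For example, `stripe_with_parity(b"hello infinia!", 4)` yields four data chunks and one parity chunk; replacing any one of them with `None` and calling `rebuild` restores it.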

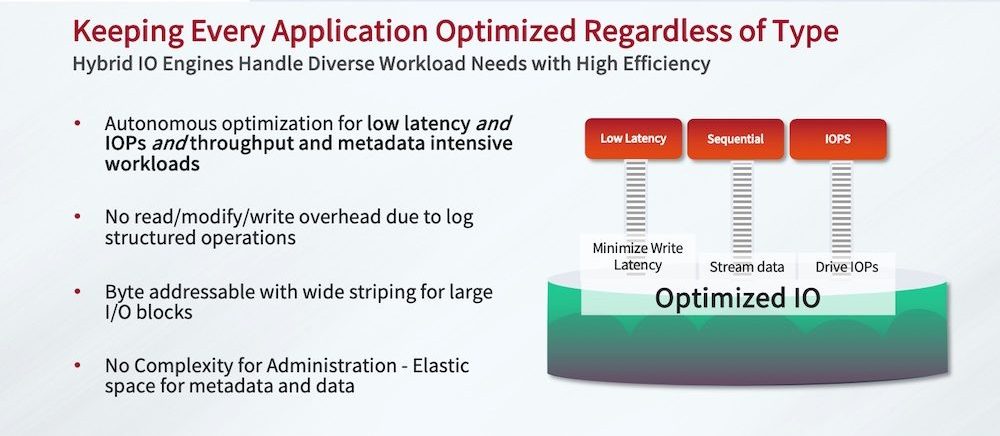

Simplicity is a key factor for Infinia, especially at scale, as everything is transparent. Simplicity also means deploying, growing and upgrading the platform quickly. This is a huge change for DDN and marks a pivot point for all products. Performance was a key design criterion in order to meet tenant and application requirements. The design is log-structured to avoid extra update operations, and byte-addressable with wide striping for large I/O needs.

The architecture relies on a control plane and a data plane implemented with containers. There is no file system on individual SSDs, which are used in raw mode with a transactional key-value abstraction layer. Multi-tenancy works at the keyspace level, sharded across the entire infrastructure, and guarantees data isolation. The first release requires a minimum of 6 chassis per cluster and supports a maximum of 32. The minimum configuration delivers 6x720TB, or more than 4PB raw. Usable storage space is of course influenced by erasure coding (EC), here called network erasure coding. The engineering team did not choose a fixed N+M scheme, as it appears to be dynamic; the same goes for the chunk size. We don’t know yet which EC family is used or whether it is systematic.
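The capacity figures quoted in this article can be checked with a short calculation. The sketch below uses only the numbers given in the text (12 drives of 60TB per chassis, 6 to 32 chassis per cluster, decimal terabytes). The `usable_tb` helper assumes a fixed N+M layout purely for illustration, since the actual scheme appears to be dynamic and has not been disclosed.

```python
# Figures taken from the article; TB are decimal terabytes.
DRIVES_PER_CHASSIS = 12
DRIVE_TB = 60
MIN_CHASSIS, MAX_CHASSIS = 6, 32


def raw_tb(chassis: int) -> int:
    """Raw capacity in TB for a cluster with the given number of chassis."""
    if not MIN_CHASSIS <= chassis <= MAX_CHASSIS:
        raise ValueError(f"cluster size must be {MIN_CHASSIS}..{MAX_CHASSIS} chassis")
    return chassis * DRIVES_PER_CHASSIS * DRIVE_TB


def usable_tb(chassis: int, data_chunks: int, parity_chunks: int) -> float:
    """Usable capacity under a HYPOTHETICAL fixed N+M erasure code.

    Storage efficiency of an N+M code is N / (N + M); the values passed
    in are illustrative, not Infinia's real parameters.
    """
    return raw_tb(chassis) * data_chunks / (data_chunks + parity_chunks)


print(raw_tb(MIN_CHASSIS))            # 4320 TB, i.e. 4.32PB raw
print(raw_tb(MAX_CHASSIS))            # 23040 TB, i.e. 23.04PB raw
print(usable_tb(MIN_CHASSIS, 8, 2))   # 3456.0 TB with an assumed 8+2 layout
```

The minimum cluster therefore gives 4.32PB raw, consistent with the "more than 4PB" figure, and the 32-chassis maximum reaches about 23PB raw.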

The firm confirms its SDS strategy and its goal of consolidating demanding usages and new application requirements across multiple points of presence and interfaces. Being a full software approach, it will be available on public clouds such as Google Cloud.

Infinia validates, once again, what we introduced more than 10 years ago with U3 - Universal, Unified and Ubiquitous - storage.
