Microchip Teams Up with Intelligent Hardware Korea to Develop Analog Compute Platform to Accelerate Edge AI/ML Inferencing

Using memBrain nonvolatile in-memory compute technology and working with universities, IHWK is creating an SoC processor for neurotechnology devices.

This is a Press Release edited by StorageNewsletter.com on September 22, 2023 at 2:02 pm.

To address the rapid rise of AI computing at the edge of the network and its associated inferencing algorithms, Intelligent Hardware Korea (IHWK) is developing a neuromorphic computing platform for neurotechnology devices and field programmable neuromorphic devices.

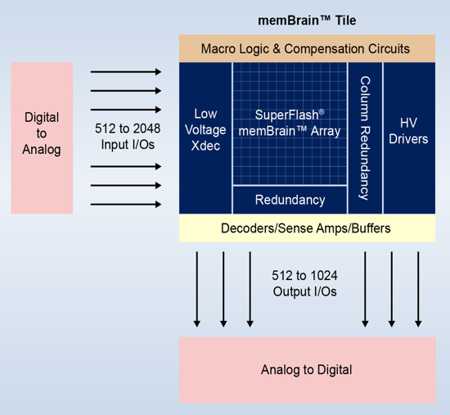

Microchip Technology, Inc., via its Silicon Storage Technology, Inc. (SST) subsidiary, is assisting with development of this platform by providing an evaluation system for its SuperFlash memBrain neuromorphic memory solution. The solution is based on the company’s NVM SuperFlash technology and is optimized to perform vector matrix multiplication (VMM) for neural networks through an analog in-memory compute approach.
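For readers unfamiliar with VMM, the operation itself can be sketched in a few lines of Python. This is only an idealized software model of a vector matrix multiplication, in which each output is the sum of inputs scaled by stored weights. The function name `vector_matrix_multiply` and the sample values are hypothetical, and the sketch does not describe how memBrain cells implement the operation in analog circuitry.

```python
def vector_matrix_multiply(inputs, weights):
    """Idealized VMM: outputs[j] = sum over i of inputs[i] * weights[i][j].

    In an analog in-memory approach the weights would stay in the memory
    array; here they are simply a list of rows (illustrative assumption).
    """
    if len(inputs) != len(weights):
        raise ValueError("one input value is required per weight row")
    outputs = [0.0] * len(weights[0])
    for x, row in zip(inputs, weights):
        for j, w in enumerate(row):
            # Each stored weight scales its input; columns accumulate the sum.
            outputs[j] += x * w
    return outputs


# Hypothetical example: 3 inputs feeding 2 output neurons.
sample_inputs = [1.0, 0.5, 0.0]
sample_weights = [
    [0.2, 0.4],
    [0.6, 0.8],
    [1.0, 1.0],
]
sample_outputs = vector_matrix_multiply(sample_inputs, sample_weights)
```

With these sample values the two outputs come to roughly 0.5 and 0.8. The point of an in-memory approach is that the multiply-accumulate happens where the weights are stored rather than after moving them to a separate processor.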

The memBrain technology evaluation kit is designed to enable IHWK to demonstrate the power efficiency of its neuromorphic computing platform for running inferencing algorithms at the edge. The end goal is to create a low-power analog processing unit (APU) for applications such as generative AI models, autonomous cars, medical diagnosis, voice processing, security/surveillance and commercial drones.

As current neural net models for edge inference may require 50 million or more synapses (weights) for processing, it becomes challenging to have enough bandwidth for the off-chip DRAM required by purely digital solutions, creating a bottleneck for neural net computing that throttles overall compute power. In contrast, the memBrain solution both stores synaptic weights in the on-chip floating gate in low-power sub-threshold mode and uses the same memory cells to perform the computations – offering improvements in both power efficiency and system latency. When compared to traditional digital DSP and SRAM/DRAM based approaches, it delivers 10 to 20x lower power usage per inference decision and can reduce the overall bill of materials.
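To give a rough sense of the bandwidth pressure described above, the following back-of-the-envelope sketch estimates off-chip weight traffic for a 50-million-weight model. Only the weight count comes from the text. The 8-bit weight size, the 30 inferences per second, and the assumption that every weight is fetched from DRAM on every inference are illustrative assumptions, not figures from Microchip or IHWK.

```python
def weight_traffic_bytes_per_second(num_weights, bytes_per_weight, inferences_per_second):
    """Bytes of weight data moved per second if all weights are re-read each inference."""
    return num_weights * bytes_per_weight * inferences_per_second


NUM_WEIGHTS = 50_000_000      # "50 million or more synapses" (from the text)
BYTES_PER_WEIGHT = 1          # assumption: 8-bit quantized weights
INFERENCES_PER_SECOND = 30    # assumption: e.g. one inference per video frame

traffic_bytes = weight_traffic_bytes_per_second(
    NUM_WEIGHTS, BYTES_PER_WEIGHT, INFERENCES_PER_SECOND
)
traffic_gigabytes = traffic_bytes / 1e9  # 1.5 GB/s under these assumptions
```

Under these assumptions the weights alone account for about 1.5 GB/s of DRAM reads. Keeping the weights inside the compute array removes that traffic, which is the bottleneck the paragraph above refers to.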

To develop the APU, IHWK is also working with Korea Advanced Institute of Science & Technology (KAIST), Daejeon, Korea, for device development and Yonsei University, Seoul, Korea, for device design assistance. The final APU is expected to optimize system-level algorithms for inferencing and operate at between 20 and 80 TeraOPS/W, which is the best performance available for a computing-in-memory solution designed for use in battery-powered devices.
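The TeraOPS/W range can be read as energy per operation, since one watt is one joule per second. The short conversion below is plain unit arithmetic on the 20 and 80 TeraOPS/W figures quoted above. The helper name `femtojoules_per_operation` is hypothetical, and the result says nothing about how an operation is defined or measured for the APU.

```python
def femtojoules_per_operation(tera_ops_per_watt):
    """Convert an efficiency in TeraOPS/W to energy per operation in femtojoules."""
    ops_per_joule = tera_ops_per_watt * 1e12   # 1 W = 1 J/s, so OPS/W = operations per joule
    joules_per_op = 1.0 / ops_per_joule
    return joules_per_op * 1e15                # 1 J = 1e15 fJ


energy_at_20 = femtojoules_per_operation(20)   # about 50 fJ per operation
energy_at_80 = femtojoules_per_operation(80)   # about 12.5 fJ per operation
```

In other words, the expected range corresponds to roughly 12.5 to 50 femtojoules per operation.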

“By using proven NVM rather than alternative off-chip memory solutions to perform neural network computation and store weights, Microchip’s memBrain computing-in-memory technology is poised to eliminate the massive data communications bottlenecks otherwise associated with performing AI processing at the network’s edge,” said Mark Reiten, VP, SST, licensing business unit, Microchip. “Working with IHWK, the universities and early adopter customers is a great opportunity to further prove our technology for neural processing and advance our involvement in the AI space by engaging with a leading R&D company in Korea.”

“Korea is an important hotspot for AI semiconductor development,” said Sanghoon Yoon, branch manager, IHWK. “Our experts on nonvolatile and emerging memory have validated that Microchip’s memBrain product based on proven NVM technology is the best option when it comes to creating in-memory computing systems.”

Permanently storing neural models inside the memBrain solution’s processing element also supports instant-on functionality for real-time neural network processing. IHWK is leveraging the nonvolatility of SuperFlash floating gate cells to set a new benchmark for low-power edge computing devices that run ML inference with advanced ML models.
