Recap of 50th IT Press Tour in Colorado and California

With 9 companies: Arcitecta, DDN, MinIO, Nyriad, Phison Electronics, Qumulo, Spectra Logic, Versity and Volumez

By Philippe Nicolas | June 20, 2023 at 2:01 pm

This article was written by Philippe Nicolas, organizer of the event.

The 50th edition of The IT Press Tour took place recently in Colorado and California, and it was the right time to meet leaders and innovators across various market segments who bring new approaches to cloud, IT infrastructure, data management and storage: Arcitecta, DDN, MinIO, Nyriad, Phison Electronics, Qumulo, Spectra Logic, Versity and Volumez.

Arcitecta

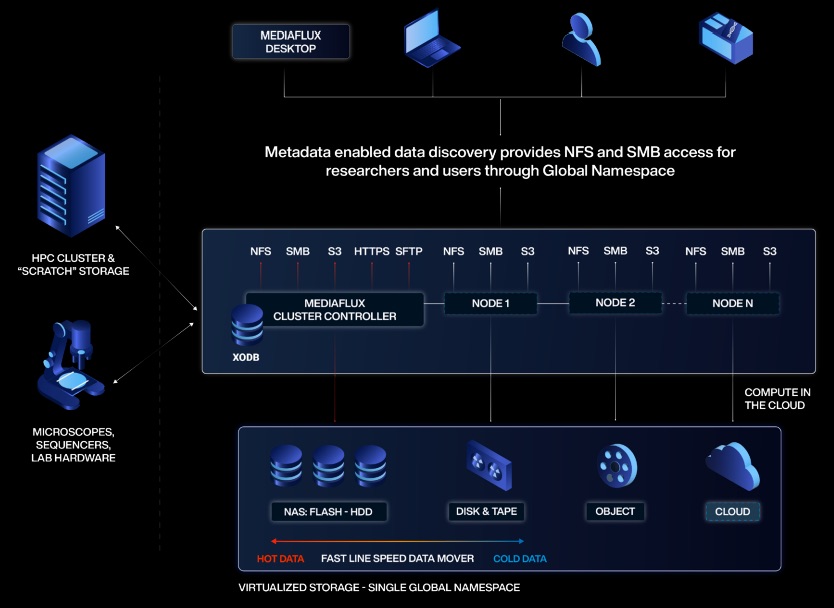

Founded in 1998, Arcitecta belongs to the small group of pioneers of unstructured data management. Since its inception, the team has identified the volume of data and the variety and number of file servers and NAS as clear contributors to IT complexity. In other words, how can an effective user experience be delivered in this moving data landscape while exposing consistent access methods and management capabilities? Arcitecta solves this first by designing and building a scalable database named XODB to store all collected and discovered metadata. This is key, as the entire data environment is managed and controlled through a deep understanding of this metadata. An XODB entry consumes 1kB, so information on 1 billion files represents just 1TB of metadata, which could easily fit on SSDs currently on the market. The other objective is to reduce cost with the right data tiering across multiple storage levels while maintaining transparent access to data. To make things seamless and scalable, the company develops its own NFS and SMB protocol implementations and S3 API. This is in fact required, as its product Mediaflux operates out-of-band with a central XODB holding extensive file metadata. On the back end, Mediaflux interacts with file servers, NAS, tape, cloud and object storage. With its global namespace capability, users access data wherever it resides, even with mobility policies in place. Storage units can be added or removed without any impact on data and access. In addition, Arcitecta relies on versioning and offers a point-in-time function to address ransomware protection. Its go-to-market model is based on selected partners, such as the recent partnership signed with Spectra Logic to extend BlackPearl NAS and S3 to address demanding secondary file and object storage needs.
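
The metadata sizing claim is simple linear arithmetic. A minimal sketch, assuming a flat ~1kB per XODB entry and decimal units (1TB = 10^12 bytes); real entry sizes will vary with the attributes stored, and `metadata_footprint_tb` is a hypothetical helper, not part of Mediaflux:

```python
# Back-of-the-envelope catalog sizing using the ~1kB-per-entry figure quoted above.
# ENTRY_BYTES is an approximation taken from the article, not a vendor specification.
ENTRY_BYTES = 1_000  # ~1kB per entry, decimal units


def metadata_footprint_tb(file_count: int, entry_bytes: int = ENTRY_BYTES) -> float:
    """Return the approximate metadata size in terabytes (10**12 bytes)."""
    return file_count * entry_bytes / 10**12


for files in (10**6, 10**9, 10**10):
    print(f"{files:>14,} files -> {metadata_footprint_tb(files):,.3f} TB of metadata")
```

At 1 billion files this gives 1TB, and even 10 billion files stays around 10TB, within reach of a few current SSDs.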

DDN

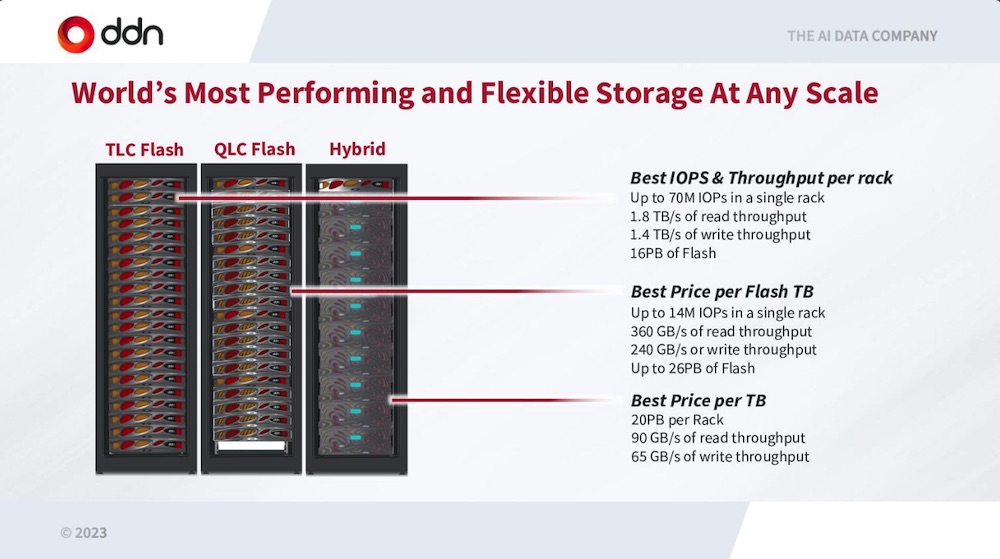

DDN now positions itself as the AI data company, a claim confirmed by many years of accomplishments in the domain as an extension of its strong HPC presence. The team is accelerating on this hot topic, claiming that the world is living through a second wave of AI that generates even more data with Large Language Models, Multi-Modal AI and Generative AI. But several IT challenges limit development and adoption, with an obvious need for parallel processing. This is where DDN has a serious answer, proven for decades: its true parallel storage approach, embodied by the AI400X2, which combines optimized hardware with advanced networking. NFS, by contrast, has clear limits here: there is no parallelism in NFS without pNFS, and techniques like nConnect and multipathing are only access techniques that reduce access time. The company follows the highly demanding needs of Generative AI, where model size has increased 1,000x in the last 3 years while GPU memory grew only 5x. Only a few players can deliver these levels of performance, and DDN is clearly one of them, coupled with Nvidia BasePOD and SuperPOD. The firm has also delivered a very dense 2U system that offers 8PB of TLC and QLC flash, producing 90GB/s and 3M IOPS, leveraging its DDN Storage Fusion NVMe Fabric.
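
The 1,000x versus 5x comparison is easier to grasp as a ratio and as annual growth rates. A short worked example using only the two figures quoted above over the stated 3-year window; the variable names are illustrative:

```python
# Growth figures quoted for the last 3 years (values from the article).
YEARS = 3
MODEL_SIZE_GROWTH = 1_000  # model size grew ~1,000x
GPU_MEMORY_GROWTH = 5      # GPU memory grew ~5x

# How many times faster model size outgrew the memory of a single GPU.
gap = MODEL_SIZE_GROWTH / GPU_MEMORY_GROWTH

# Compound annual growth rate: r such that (1 + r) ** YEARS == total growth.
model_cagr = MODEL_SIZE_GROWTH ** (1 / YEARS) - 1
gpu_cagr = GPU_MEMORY_GROWTH ** (1 / YEARS) - 1

print(f"gap: {gap:.0f}x")
print(f"model size CAGR: {model_cagr:.0%}, GPU memory CAGR: {gpu_cagr:.0%}")
```

Models growing roughly 10x per year against about 1.7x per year for GPU memory leaves a 200x gap, which has to be absorbed by spreading work across many GPUs fed in parallel, the pressure behind DDN's parallel storage argument.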

On the Tintri side, we understand that the product line generates 20% of DDN's global revenue of $500 million. VMstore 7000 has been well adopted, with new services around ransomware and data protection more generally, VMware Tanzu, NFS v4.1 and a new control plane UI. The team is targeting hybrid cloud deployment, will re-architect its product with microservices and containers, and will provide a native CSI driver. Tintri should announce something new in a few days.

MinIO

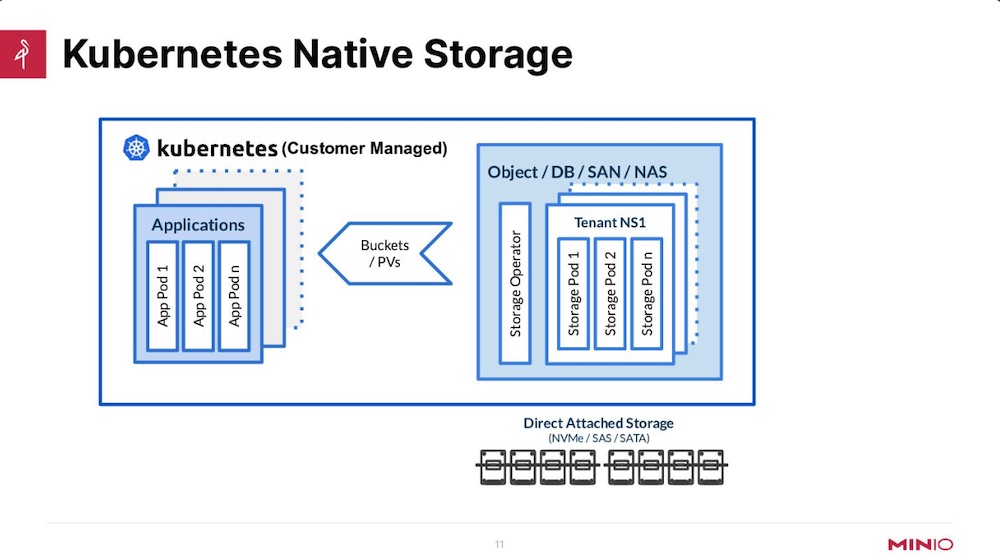

This company hardly needs an introduction: in recent years, the object storage software player has become the reference in the domain, with adoption in many different domains well beyond its classic boundaries. Coldago Research has recognized MinIO as a leader in the domain for the last 4 years. Originally positioned for distinct use cases, the team chose a few years ago to align its destiny with Kubernetes. There are several reasons for that: Kubernetes clearly needs such a service, object storage fits the domain perfectly, and at the same time other pure players have almost disappeared. MinIO is now ubiquitous and truly synonymous with object storage, a position several vendors have dreamed about for many years. The company insists on the Kubernetes-native definition, claiming it means complete support of Kubernetes functionality rather than a minimal subset, as with a hardware appliance or a bare-metal deployment with a CSI driver. MinIO deployments are phenomenal, with more than 72% of instances containerized and more than 33% of those managed by Kubernetes. To confirm its industrialization and adoption at scale, both in cluster size and in number of clusters, the firm has also invested time in developing an Operator to facilitate deployments of MinIO clusters whatever the Kubernetes engine. Beyond persistent volumes with DirectPV, security has also been improved, with special attention to key management in v5. The modern data lake represents a serious direction for MinIO, as the volume of data is a limiting factor for other storage technologies. In fact, the firm has seen strong adoption there alongside other famous open source data services and frameworks, and the same is true for AI/ML.
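
Because the Kubernetes share is quoted as a share of containerized instances ("33% of those"), the share of all instances needs one extra multiplication. A small sketch using the article's percentages; since both are quoted as "more than", the result is a lower bound:

```python
# Shares quoted in the article, both as lower bounds ("more than").
containerized_share = 0.72          # share of all MinIO instances that are containerized
k8s_share_of_containerized = 0.33   # share of containerized instances managed by Kubernetes

# Share of *all* instances managed by Kubernetes: multiply the two fractions.
k8s_share_of_all = containerized_share * k8s_share_of_containerized

print(f"Kubernetes-managed: at least {k8s_share_of_all:.1%} of all instances")
```

So a bit under a quarter of all instances (at least 23.8%) run under Kubernetes, while close to three quarters run in containers of some kind.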

Nyriad

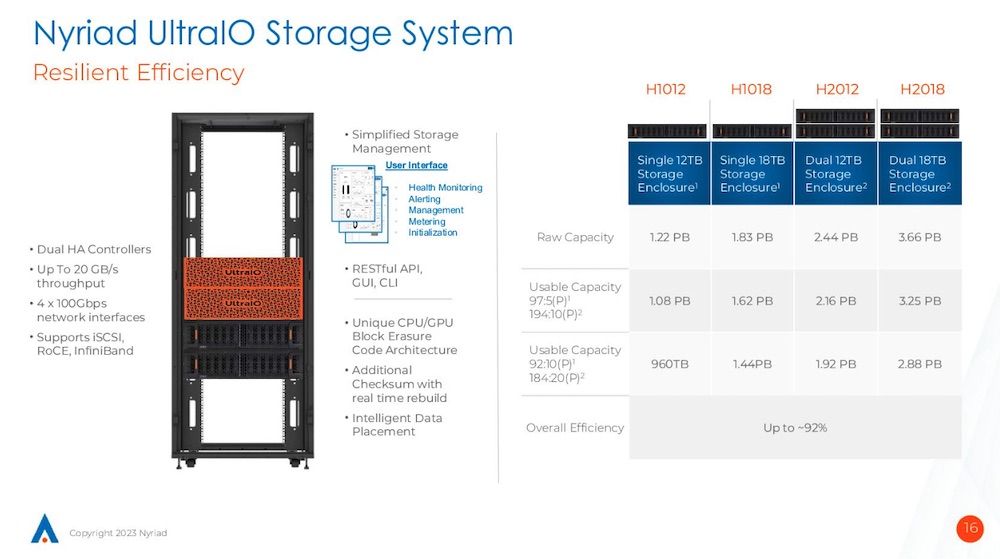

Launched in 2015 in New Zealand to build a new, efficient and resilient block storage system leveraging GPUs for erasure coding, Nyriad started a second life in 2020 as a US legal entity. The firm, now led by Derek Dicker, is on a mission to shake up the industry with a new storage approach, with 60 employees and $63 million raised so far. It sells a block storage array named UltraIO, which couples its advanced controller with the HDD-based Western Digital Ultrastar Data102. The message is clear: fast performance at a very cost-effective level with strong resiliency. UltraIO uses an active/passive dual-controller HA design and delivers 20GB/s of simultaneous read and write with a single controller. The x86 CPU handles reads and the Nvidia GPU handles writes, as the GPU computes the intensive erasure coding after data lands on the NVDIMM. A few elements of the design are interesting. For erasure coding, UltraIO supports from 3 to 20 parity drives, with a very efficient usable ratio of around 92%. The stripe unit is 2MB, and the GPU processes one full stripe at a time (2 x 102 drives, for instance) so that every drive is touched and maximum bandwidth is obtained. The controllers offer 4 x 100Gbps ports exposing iSCSI, RoCE or InfiniBand. The maximum raw capacity is 3.6PB, available with 12TB and 18TB drives. Nyriad has several deployments with BeeGFS, or in more classic ZFS-based environments with NAS protocols on top, in HPC of course but also in M&E, backup and recovery, and active archive: a good mix of primary and secondary storage use cases. Nothing is decided yet, but we can imagine the Nyriad controller layer being attached to JBOFs; we'll see.
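
The erasure coding and capacity figures can be cross-checked with simple arithmetic. A minimal sketch, assuming a single stripe spans two Data102 enclosures (the "2 x 102" example, i.e. 204 drives) populated with 18TB drives; the usable ratio is the generic data/(data + parity) formula and ignores spares and metadata overhead, which the article does not detail:

```python
STRIPE_UNIT_MB = 2          # stripe unit quoted in the article
DRIVES_PER_ENCLOSURE = 102  # Western Digital Ultrastar Data102
DRIVE_TB = 18               # largest drive size quoted


def usable_ratio(total_drives: int, parity_drives: int) -> float:
    """Fraction of raw capacity left for data when parity_drives of total_drives hold parity."""
    if not 0 < parity_drives < total_drives:
        raise ValueError("parity_drives must be between 1 and total_drives - 1")
    return (total_drives - parity_drives) / total_drives


drives = 2 * DRIVES_PER_ENCLOSURE         # assumption: one stripe spans "2 x 102" drives
full_stripe_mb = drives * STRIPE_UNIT_MB  # a full stripe writes one stripe unit per drive
raw_pb = drives * DRIVE_TB / 1000         # decimal units: 1PB = 1,000TB

for parity in (3, 20):                    # supported parity range
    print(f"{parity:>2} parity drives: {usable_ratio(drives, parity):.1%} usable")
print(f"full stripe: {full_stripe_mb} MB, raw capacity: {raw_pb:.2f} PB")
```

Under these assumptions the usable ratio ranges from about 90.2% to 98.5%, bracketing the ~92% quoted, a full stripe is 408MB, and 204 x 18TB gives about 3.67PB raw, in line with the 3.6PB maximum.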

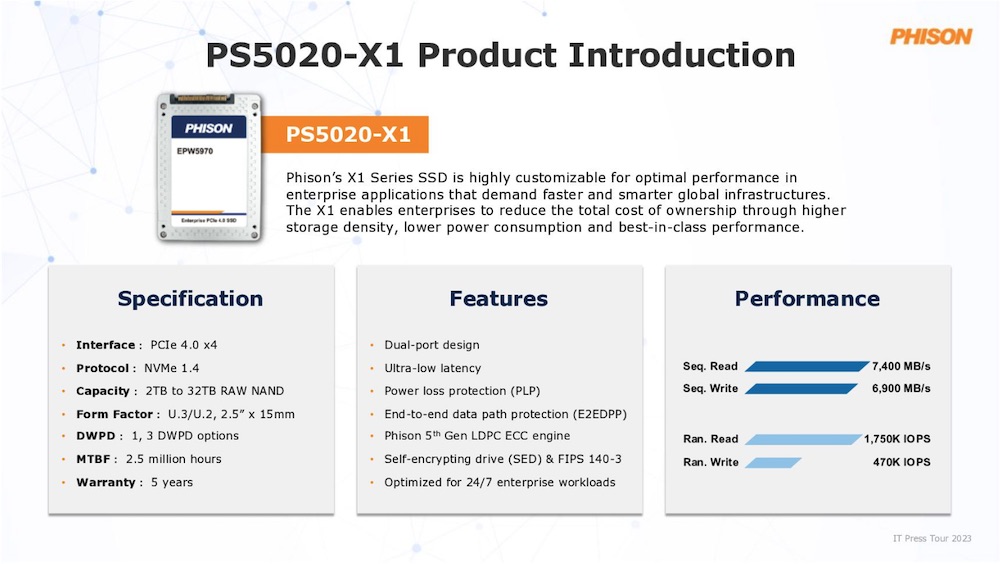

Phison

Founded more than 23 years ago, Phison is a hidden giant, generating $2 billion in revenue in 2022 with more than 3,800 employees. By giant, we mean that the company ships 600 million controllers annually, representing 16EB of NAND, yet almost nobody outside market specialists knows it. The company has also reached significant milestones, such as the first Gen4 QLC in 2020 or, more recently, the fastest Gen5, to name a few. As a technology leader, it drew 31% of its revenue from controllers in 3FQ21, and 70% of its revenue comes from ODM/OEM/tier 1 partners. Its expertise is recognized by storage vendors such as Kioxia, Micron, Western Digital, Seagate, Samsung, YMTC, Intel and SK Hynix, as illustrated by the fact that 1 out of every 4 SSDs ships with Phison inside. With 20+ years of experience making NAND and SSD modules, the firm owns NAND wafers, designs controllers, develops firmware and operates manufacturing to deliver finished products, like the very first PCIe Gen4 SSD in 2019. A more recent example is the Seagate Nytro PCIe Gen4 dual-port X1 SSD, developed by Phison. Having moved from controllers (key active components, we should say) to client SSDs, the company now wishes to play a significant role in enterprise SSDs and potentially data center ones. We expect to see some models at the end of 2023 for Gen4 and at the beginning of 2024 for Gen5. To accelerate this move into the enterprise segment, the manufacturer has launched Imagin+, a design service offering partners customized controllers, features and products. The perfect illustration is the highly customizable PS5020-X1, with PCIe Gen4 x4, NVMe 1.4, dual port and a few other key features. We learned that the company is also thinking about CXL. Among the long list of projects supported by Phison, the one with Skycorp, which aims to use the first NASA TRL-6 certified Phison SSD on the Moon, is very special. TRL-6 corresponds to low Earth orbit; TRL-9 will come after the Moon mission.

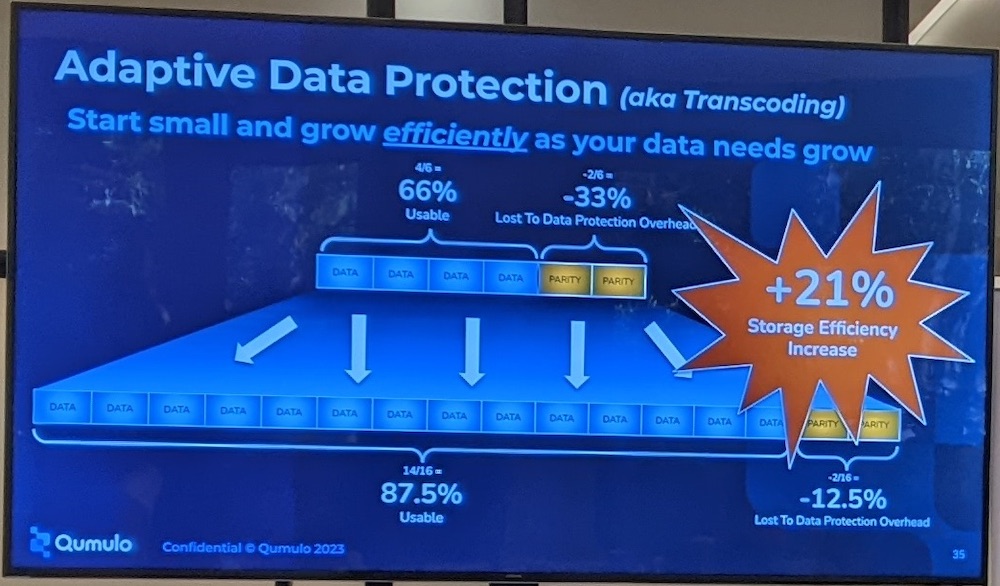

Qumulo

A reference in scale-out NAS for several years, Qumulo has made a clear shift to hybrid cloud to align its solution with users' requirements, supported by its Scale Anywhere approach. Even though it went through some difficulties and, like others, made layoffs to adjust its operations (it now has approximately 350 employees), revenue grew by 60% in 2FH23 and the company is now cash positive. This also translates into market footprint, with 3EB of licensed capacity in the past 12 months. The firm clearly sees an acceleration, and the exabyte trajectory speaks for itself: it took 6.5 years to reach 1EB, 1.5 years to go from 1 to 2EB, and now 11 months to reach 3EB, meaning the step from 2 to 3EB was about 7x faster than reaching the first exabyte a few years ago. The firm has 900 customers worldwide in 20 countries, managing 500 billion files. As verticals and use cases multiply, users increasingly consider Qumulo a universal file storage solution. The Cognacq-Jay case illustrated its results compared with an object storage point product. The team has also delivered some key developments: its own S3 API replacing the deprecated MinIO gateway, SMB multichannel support, NFS 4.1 with Kerberos, Varonis integration for security, and adaptive data protection that dynamically changes the erasure coding N+M ratio. On the data protection side, the firm partners with classic vendors and picked Atempo Miria to facilitate migration, as well as Yuzuy, a German company with Qumulo expertise. Pricing is simple to understand and offers predictability to users: on Azure, with Azure Native Qumulo, a managed service, 1TB costs $85 per month. We expect to see Nexus in the coming months, with its capability to manage clusters dispersed across edge, core and public cloud, along with some other interesting planned features.
Following the recent HPE GreenLake agreement with VAST Data, Ryan Farris, VP of products, confirmed that he has seen no impact and doesn't expect any, as HPE remains a strong ambassador for Qumulo.
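
The "7x faster" statement compares the time needed for the latest exabyte with the time needed for the first one. A short sketch converting the quoted durations to months (6.5 years = 78 months) and computing each step's speed-up relative to the first exabyte:

```python
# Time to add each exabyte of capacity, as quoted in the article, converted to months.
months_per_exabyte = {
    "0 -> 1EB": 6.5 * 12,  # 6.5 years
    "1 -> 2EB": 1.5 * 12,  # 1.5 years
    "2 -> 3EB": 11,        # 11 months
}

first = months_per_exabyte["0 -> 1EB"]
for step, months in months_per_exabyte.items():
    # Speed-up = months needed for the first exabyte / months needed for this one.
    print(f"{step}: {months:g} months, {first / months:.1f}x faster than the first exabyte")
```

The last step comes out at 78 / 11, about 7.1x, consistent with the "7x faster" figure, while the 1-to-2EB step was roughly 4.3x faster.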

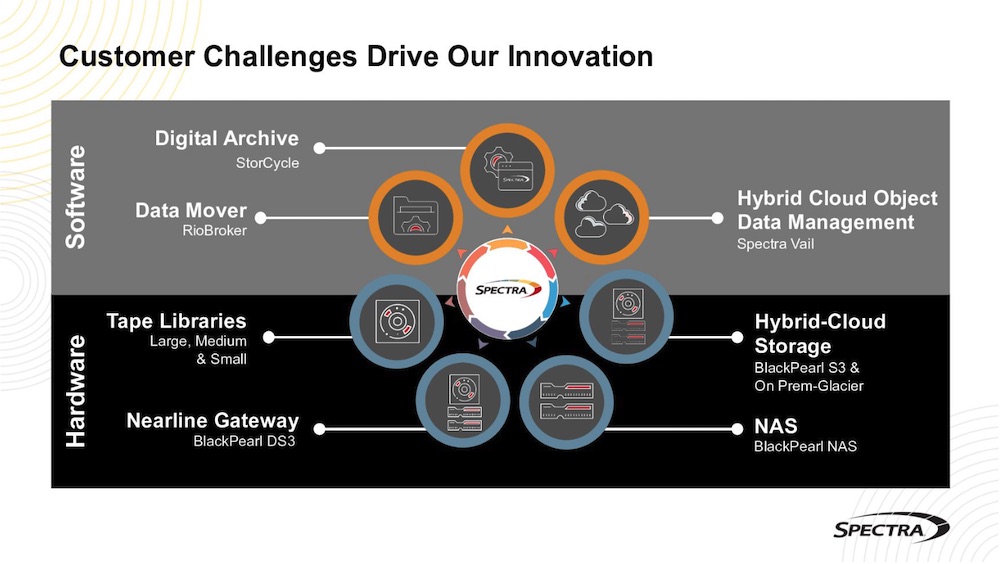

Spectra Logic

Famous for its very large TFinity tape libraries and, more broadly, for its role in secondary storage, Spectra Logic started a strategic move to software a few years ago. Today this software is either embedded in offerings such as the BlackPearl line or available as pure software with StorCycle or Vail. The company has realized that moving to solutions should increase its market footprint and positively influence adoption. For Spectra, a solution groups software, platforms and services. Spectra can offer the full solution, like the T950 with StorCycle and associated services, which it names Spectra Digital Archive, or partner with specific players. A recent example is the partnership with Arcitecta coupled with BlackPearl NAS or S3, but it could also be Rubrik, Cohesity, Commvault, Veritas or Veeam with tape libraries or BlackPearl. The other key mission for Spectra is to deliver on-premises long-term data preservation solutions aligned with the de facto, and very visible, market standard: AWS S3 and Glacier. In other words, the team has made an effort to mimic AWS S3 Standard/IA, S3 Glacier Flexible Retrieval and S3 Glacier Deep Archive with its own levels, features and capabilities. Given cloud costs, some users may consider moving their archives back on premises while expecting a similar AWS model, which is what Spectra offers. The Vail software is still unique on the market and its adoption has started to take off. The engineering group has also initiated a project for a new Open Standard Versioned Object Format, S3 compatible and leveraging LTFS, with special attributes such as compression, encryption, hashing and tape set priority. This development comes from user and partner feedback but also from close observation of the market. On the tape library side, the battle with Quantum and IBM continues, fueled by a rich partner ecosystem, and we should see some new models for special environments in a few months.

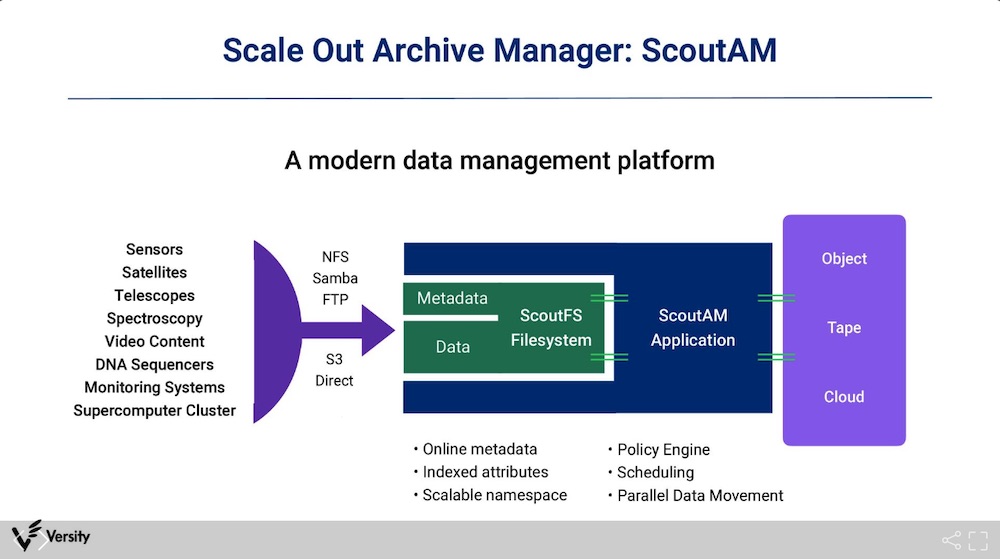

Versity

Well known for large-scale archiving projects and, more simply, for mass storage needs, Versity was founded in 2011 to build a modern, scalable, long-term data preservation platform. Co-founders Harriet Coverston and Bruce Gilpin, respectively CTO and CEO, delivered a Linux version of SAM-QFS and invited users to migrate to the new solution named ScoutAM. As of today, 2EB is controlled and managed by Versity, with revenue split 50/50 between the US and the rest of the world. Growth has clearly accelerated since the Dell OEM partnership signed in 2021, and it continues with 2 pending projects of more than 1EB each and another of 1.5EB in phase 1 of 5. As we said, the firm plays in secondary storage, where we see lots of object and tape storage, and operates as a new emerging leader for large archives. The solution relies essentially on ScoutAM (AM for Archive Manager), which carries the archiving logic, and ScoutFS, a POSIX-compliant scalable cluster file system released under the GPLv2 open source license (Scout stands for Scale-out). ScoutFS operates as a large cache for archived data in a process controlled by ScoutAM; the data is then moved to its final destination, which could be tape, object storage or public cloud. Beyond file system interfaces, Versity, like several market players, relied on the MinIO gateway, but MinIO has notified its users that this product edition has been discontinued. The team therefore decided to develop its own S3 engine under the Apache 2 open source license and announced this product, Versity Gateway, during the session. The product is available on GitHub at https://github.com/versity/versitygw/. The idea is to deploy this gateway on top of ScoutFS as a stateless translation tool, with multiple instances running in parallel to deliver high throughput for both reads and writes.
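
What makes a "stateless translation tool" scale out is that each request can be resolved from its own contents alone. A minimal sketch of such a translation, assuming a hypothetical layout with one top-level directory per bucket and object keys used as relative paths; this illustrates the idea in Python and is not Versity Gateway's actual code or on-disk format. `object_path`, the `/scoutfs` root and the simplified bucket-name rule are all assumptions:

```python
import re
from pathlib import PurePosixPath

# Simplified S3 bucket naming rule: 3-63 chars of lowercase letters, digits,
# dots and hyphens, starting and ending with a letter or digit.
BUCKET_RE = re.compile(r"[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]")


def object_path(root: str, bucket: str, key: str) -> PurePosixPath:
    """Map an S3 (bucket, key) pair to a file path under `root`.

    Hypothetical layout: one directory per bucket, the key as a relative path.
    The function keeps no state, so any gateway instance can resolve any request.
    """
    if not BUCKET_RE.fullmatch(bucket):
        raise ValueError(f"invalid bucket name: {bucket!r}")
    key_path = PurePosixPath(key)
    # Reject keys that are empty, absolute, or try to escape the bucket directory.
    if key_path.is_absolute() or not key_path.parts or ".." in key_path.parts:
        raise ValueError(f"invalid object key: {key!r}")
    return PurePosixPath(root, bucket, *key_path.parts)


print(object_path("/scoutfs", "archive", "projects/2023/run-42.tar"))
```

Because no session or lookup table is involved, several such instances can sit side by side and serve reads and writes in parallel, with the shared file system as the only source of truth.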

Volumez

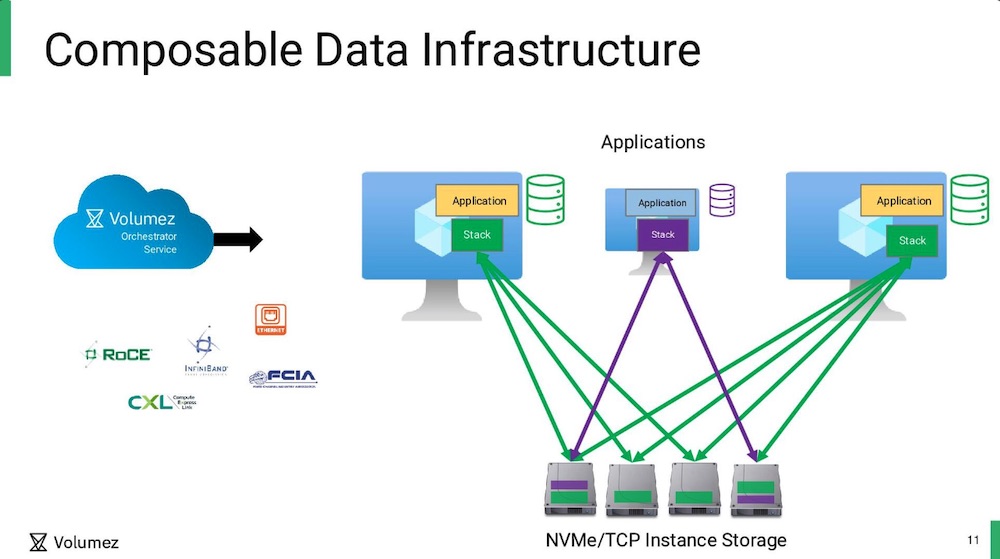

Founded in 2020 in Israel by Jonathan Amit, Volumez has been out of stealth for just a few months and has already raised $32 million. It has already attracted senior advisors such as Shmuel Shottan, former CTO of BlueArc, and Iri Trashanski, former SVP of products at Hitachi Vantara, alongside Amir Faintuch, CEO, Brian Carmody, field CTO, and John Blumenthal, who recently joined as CPO. It plays in a modern storage management landscape, leveraging today's technologies such as flash, NVMe, Kubernetes coupled with Linux, fast networks and open source frameworks. This is what it calls CDI, for Composable Data Infrastructure: a new way to dynamically build block storage units attached directly to Linux machines without installing any software on them. This is the beauty of the approach: an out-of-band orchestrator running as a cloud service, what is today called a control plane, with virtual volumes connected to consumer machines acting as the data plane. The Volumez engine has a complete view of the disks available in the environment, with their characteristics and capabilities, including which portions are already allocated. With all these profiles, the orchestrator is able to select the right disks, or portions of them, to build a volume aligned with IO/s, latency, bandwidth and protection requirements. The Volumez approach is about a performance guarantee per volume (1.5 million IO/s, 12GB/s throughput and 300μs of latency), made possible by this deep knowledge of the environment and the information collected. The company has also announced a key endorsement from InterSystems, the firm behind Caché and now IRIS cloud, a database vendor always targeting top performance. For its IRIS cloud offering, after a thorough selection process, InterSystems picked Volumez for its storage back end.
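
To illustrate the kind of decision the orchestrator makes, here is a deliberately simple greedy sketch. It is not Volumez's algorithm, which was not described: the `Disk` and `VolumeSpec` types, the additive performance model and the "highest IOPS first" ordering are all assumptions, and protection requirements (for example mirroring across failure domains) are left out:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Disk:
    """A disk, or a portion of one, with the resources it can still offer (hypothetical model)."""
    name: str
    free_gb: int
    iops: int        # spare IOPS
    mbps: int        # spare bandwidth in MB/s
    latency_us: int  # typical latency in microseconds


@dataclass(frozen=True)
class VolumeSpec:
    """Requirements for one volume (hypothetical model)."""
    size_gb: int
    iops: int
    mbps: int
    max_latency_us: int


def select_disks(disks: list[Disk], spec: VolumeSpec) -> list[Disk]:
    """Greedily add the highest-IOPS eligible disks until every target is met.

    Simplified model: capacity, IOPS and bandwidth of selected disks add up,
    and a disk is eligible only if its own latency meets the target.
    """
    eligible = sorted(
        (d for d in disks if d.latency_us <= spec.max_latency_us),
        key=lambda d: (d.iops, d.mbps),
        reverse=True,
    )
    chosen: list[Disk] = []
    for disk in eligible:
        chosen.append(disk)
        if (sum(d.free_gb for d in chosen) >= spec.size_gb
                and sum(d.iops for d in chosen) >= spec.iops
                and sum(d.mbps for d in chosen) >= spec.mbps):
            return chosen
    raise RuntimeError("not enough eligible capacity or performance for this volume")


pool = [
    Disk("nvme-a", free_gb=1_000, iops=400_000, mbps=3_000, latency_us=80),
    Disk("nvme-b", free_gb=1_000, iops=350_000, mbps=2_800, latency_us=90),
    Disk("ssd-c", free_gb=4_000, iops=90_000, mbps=500, latency_us=250),
    Disk("hdd-d", free_gb=16_000, iops=200, mbps=250, latency_us=8_000),
]
spec = VolumeSpec(size_gb=1_500, iops=700_000, mbps=5_000, max_latency_us=300)
print([d.name for d in select_disks(pool, spec)])
```

Here the HDD is excluded by the 300μs latency target and the two NVMe devices together satisfy capacity, IOPS and bandwidth. A greedy pass keeps the sketch short but does not guarantee the smallest or cheapest selection; a production orchestrator would also weigh protection, placement and existing allocations.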
