Recap of KubeCon+CloudNativeCon 2022

DevOps teams don't talk about storage, but about datastores.

By Philippe Nicolas | November 8, 2022 at 2:03 pm

A few days ago, the North America KubeCon and CloudNativeCon conferences took place in Detroit, MI, and once again it was a rush. For quite some time now, KubeCon has reminded us of the old OpenStack days: same atmosphere, and keynote speakers who don’t wear a jacket, the T-shirt being the costume of choice. The same goes for the average age of attendees: DevOps is essentially a job for 20- to 30-year-olds, the vast majority of them male.

But the numbers for the CNCF are impressive:

- 140 projects,

- 176,000+ contributors,

- 850+ members,

- 1,000+ maintainers,

- 172+ end-users,

- over 7 million cloud native developers across 187 countries.

Clearly something is happening, with real effervescence in the ecosystem: we counted around 300 exhibitors, all kinds of sponsors included.

The organizer claimed 8,000 in-person attendees for the conference and exactly the same number of online participants. That is a bit strange and too good to be true: two identical round numbers raise doubts.

We mentioned OpenStack above, but there is a major difference. OpenStack is an infrastructure play, whereas Kubernetes is really oriented toward workloads and applications, with developers in mind, so the two target different populations. That is both true and false, as Kubernetes has also introduced some infrastructure services. Among these, networking and storage are key, and the latter is viewed differently in the Kubernetes community.

We ran a simple exercise: we looked for storage, data management or data protection terms or categories in the agenda and found no reference to them. We did find “reliability + operational continuity”, which covers some storage aspects and confirms that these topics are treated differently in this ecosystem.

This explains how the community views storage-related topics. In a word: it’s just a datastore, stupid! We saw database and storage companies in the same track, which illustrates this datastore remark perfectly. Just ask a developer about storage, and they will tell you that a SQL database or an NFS share is just a datastore for different kinds of data. Developers are far removed from storage services; I would even say that DevOps developers ignore that domain and don’t care about it. This population sees the infrastructure from the top, from their applications, which write data through a POSIX or SQL API. On the storage side, we all agree that a database sits on top of storage, and we can even run databases on NFS. As you can imagine, as a storage attendee, it was not an easy week, as we all speak different languages. The image below illustrates this perfectly:

But Kubernetes must address enterprise needs and requests. These entities run businesses, and storage (some say persistent storage) is a must for them. In other words, to be adopted and deployed by enterprises, Kubernetes must seriously specify, support and develop mechanisms to use and connect storage. This is where the Kubernetes ecosystem splits workloads, considering some stateless and others stateful. Once again, enterprises require stateful workloads.

And now we enter a new debate, with players from the storage world wanting a piece of the growing cake and pure Kubernetes vendors unveiling dedicated storage products built to run exclusively in this environment; the latter are called cloud native storage (CNS) players. Both understand the business opportunity. This is a new example of the industry creating its own complexity.

Persistent storage is possible with static configuration, and we see deployments of databases, file storage with NFS, disk-based file systems such as ext4 or xfs, and block storage. One of the main advances made by the CNCF is CSI (Container Storage Interface), with more than one hundred products available today, though it still has limits. It reminds us of what we saw with Cinder or Manila within OpenStack. But the promise of Kubernetes, to decouple components and bring the agility to migrate and run potentially any workload anywhere, on-premises or in the cloud, forces engineers to develop dynamic storage. The key element here is the volume: it starts with PV (Persistent Volume) and PVC (Persistent Volume Claim) to dynamically create storage instances connected to applications. We also note the presence of storage orchestrators or operators like Rook, Trident or Piraeus; wow, it starts to get a bit complex. In the end, three approaches exist: pure CSI, storage operator and full CNS.

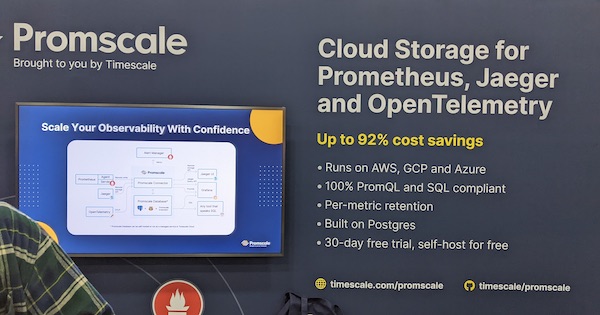
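To make the PV and PVC mechanism mentioned above a bit more concrete, here is a minimal sketch of what an application's storage request can look like. It only builds a Persistent Volume Claim manifest as a Python dictionary and prints it as JSON. The claim name `app-data`, the size `10Gi` and the storage class `example-csi-class` are assumptions chosen for illustration, not values from any vendor mentioned here, and dynamic provisioning would additionally require a matching StorageClass backed by a CSI driver in the cluster.

```python
import json


def build_pvc(name: str, size: str, storage_class: str) -> dict:
    """Return a PersistentVolumeClaim manifest as a plain dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # Volume mounted read-write by a single node.
            "accessModes": ["ReadWriteOnce"],
            # Hypothetical class name; the real one depends on the
            # CSI driver or CNS product installed in the cluster.
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    # All three values below are illustrative assumptions.
    pvc = build_pvc("app-data", "10Gi", "example-csi-class")
    print(json.dumps(pvc, indent=2))
```

The point of the sketch is the developer's view described earlier: the application asks for a size and a class, and never names the underlying array, file system or protocol.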

Having said that, we saw several vendors, storage-related or not, like Dell EMC, NetApp, DataCore, MinIO, HPE, IBM, Red Hat, DDN with Tintri, Quobyte and of course VMware, as well as cloud providers like AWS, Google, Microsoft Azure or Oracle Cloud. We were surprised to see Cloudian or Seagate, and also by some notable absences like Commvault or Veritas on the data protection side. Commvault made a Kubernetes-related announcement in parallel with the conference, but how can they be taken seriously in this domain with such an absence? Other specialized backup players had a booth, like Trilio, CloudCasa or Kasten, and even Zerto had a dedicated one.

On the CNS side, almost all players were there, like Ondat, Linbit, DataCore, Portworx and Robin.io (now Symworld Cloud), along with projects like Longhorn (a CNCF incubating project) and OpenEBS, and storage operators like Rook (a graduated project) or Piraeus (in sandbox state). Diamanti, a pure CNS player, was absent, which is not a good sign.

Here are some storage announcements we noticed during the conference:

- DataCore with HA features for OpenEBS

- Portworx with Portworx Enterprise 3.0

- Mirantis with large scale storage support

This event left us with a strange feeling, but we understand that companies have to be ready if the Kubernetes tsunami happens, and this is perfectly illustrated by the conference’s tagline, “building for the road ahead”. Kubernetes is seen as an enormous opportunity… but we saw lots of small companies, and we’ll see how many of them survive a tough period in which investors are becoming more demanding.
