Recap of Fujifilm Summit 2022

Tape is hot again, as illustrated by hyperscalers

By Philippe Nicolas | July 8, 2022 at 2:03 pm

Organized annually, the Fujifilm Summit was impacted by the Covid-19 pandemic like other conferences and has just resumed in person in San Diego, CA. The last one was held in 2019 in San Francisco, CA, three years ago.

This year's theme was post-Covid storage optimization challenges and directions in the zettabyte era. Presentations came from users such as CERN, hyperscalers such as Meta, vendors such as Cloudian, Coziant, IBM, Quantum, Spectra Logic, Twist Bioscience and Western Digital, cloud providers AWS and Microsoft Azure, and analysts including Fred Moore from Horizon Information Strategies, Brad Johns from Brad Johns Consulting, and Coldago Research. Attendees also included people from Google, DOE and Folio Photonics, to name a few. The event, highly specialized and invitation-only, had around 100 attendees globally.

Even though the event is operated, controlled and managed by Fujifilm, one of the two manufacturers of LTO tape, the idea is really to cover storage as a whole: cloud, HDD, SSD, optical and of course tape, but also future technologies and industry directions such as DNA storage, glass, photonics and holographic storage. The core topic is, of course, secondary storage and its use cases, such as active, cold and deep archive, but it is not limited to them.

Rich Gadomski, head of tape evangelism at Fujifilm Recording Media USA, introduced the conference and summarized the current profile of his company: a large conglomerate generating more than $22 billion in annual revenue, with 80,000+ employees and a $1.5 billion R&D budget. He wrote a dedicated blog post about the summit, and you can also find a special interview with Gadomski on The French Storage Podcast.

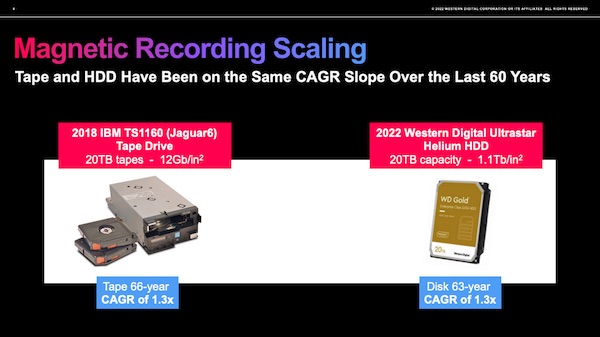

Fred Moore confirmed once again that the tiered storage pyramid is a reality, driven by the coexistence of various products, generations and models within IT environments, which splits the world between primary and secondary storage. This multi-level model is also justified by the data deluge we have been living through for a few decades now, and by regulatory pressure pushing compliance and new rules around data privacy, security and governance. Moore also insisted on the need to address the long-term storage challenge along two dimensions: capacity and speed. A larger tape or disk solves the capacity, density and footprint needs, but if writing a tape 10x larger takes as long as writing 10 tapes of the current size, the challenge is only half solved. It is progress, of course, but clearly not enough.
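
Moore's capacity-versus-speed point comes down to simple arithmetic: the time to fill a cartridge is its capacity divided by the sustained write rate. The Python sketch below illustrates this under stated assumptions. The baseline uses LTO-9-class native figures (18 TB at 400 MB/s), and the 10x cartridge and the 10x faster drive are purely hypothetical. Compression, drive start/stop behavior and multi-drive parallelism are ignored.

```python
def fill_time_hours(capacity_tb: float, throughput_mb_s: float) -> float:
    """Hours needed to write a full cartridge at a sustained native rate."""
    # Decimal units: 1 TB = 1e12 bytes, 1 MB/s = 1e6 bytes per second.
    seconds = (capacity_tb * 1e12) / (throughput_mb_s * 1e6)
    return seconds / 3600


# Assumed baseline: LTO-9-class cartridge, 18 TB native at 400 MB/s native.
today = fill_time_hours(18, 400)
# Hypothetical 10x cartridge with unchanged drive speed: capacity solved, speed not.
bigger_same_speed = fill_time_hours(180, 400)
# Hypothetical 10x cartridge with a 10x faster drive: both dimensions addressed.
bigger_faster = fill_time_hours(180, 4000)

for label, hours in [
    ("18 TB @ 400 MB/s", today),
    ("180 TB @ 400 MB/s", bigger_same_speed),
    ("180 TB @ 4000 MB/s", bigger_faster),
]:
    print(f"{label}: {hours:.1f} h")
```

Run as-is, it prints 12.5 h for the assumed current cartridge, 125.0 h for the 10x cartridge at the same speed (the same time as filling ten current cartridges one after another), and 12.5 h again once throughput grows with capacity.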

I'd like to take this opportunity to insist on these two terms, primary and secondary storage, as we see a lot of confusion in the market, with primary storage directly associated with block storage. Implicitly, this means that file and object storage are often considered for secondary usage. Let's be clear: primary and secondary storage are not defined by the nature of the storage (block, file or object) but by the role the storage plays within the organization. For specific workloads and use cases, some users can choose block, file or object storage for their primary usage. In other words, primary storage is where data is generated to support the business, and secondary storage is where data is copied to protect primary storage and, indirectly, the business.

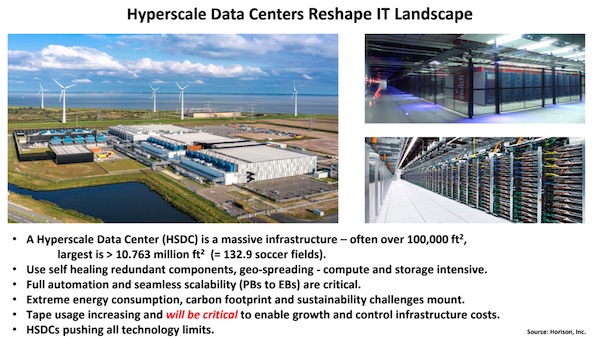

We all remember the period when large corporations were the model to watch to learn best practices, but we have to understand that these companies use commercial solutions. For a few years now, the inspiring model has been that of the hyperscalers, as they reinvent data centers and push limits in terms of number of devices, capacity, bandwidth, costs and needs overall. And of course, they limit their use of commercial products, developing new practices and designs outside of standards. As Jamie Lerner, CEO of Quantum, said during the summit, hyperscalers don't care about standards; they build their own and target resiliency and performance at the lowest cost, given the scale of their needs.

Xiaodong Che, HDD CTO at Western Digital, talked about HDD innovation with ePMR, especially the second generation, ePMR 2, with an areal density above 1.3 Tb per square inch, while HAMR delivers an areal density above 3 Tb per square inch. ePMR 2 also introduces advanced write head structures to deliver CMR capacities from 24 to 30 TB. He also covered UltraSMR, which delivers 20% more capacity than CMR, versus 10% more for "classic" SMR.
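
As a rough back-of-the-envelope illustration, the Python sketch below applies the uplifts quoted in the talk (about 10% for classic SMR and 20% for UltraSMR over CMR) to the 24 to 30 TB CMR range mentioned for ePMR 2. It naively assumes the uplift applies uniformly to any CMR baseline; actual shipping capacities are set by the vendor and may not match these figures.

```python
def shingled_capacity_tb(cmr_tb: float, uplift: float) -> float:
    """Capacity after applying a fractional uplift over the CMR baseline."""
    return cmr_tb * (1 + uplift)


# Uplifts as quoted at the summit, relative to CMR (assumed uniform across capacities).
UPLIFTS = {"CMR": 0.0, "classic SMR": 0.10, "UltraSMR": 0.20}

for cmr in (24, 30):  # CMR capacity range quoted for ePMR 2, in TB
    row = {name: round(shingled_capacity_tb(cmr, u), 1) for name, u in UPLIFTS.items()}
    print(f"{cmr} TB CMR baseline -> {row}")
```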

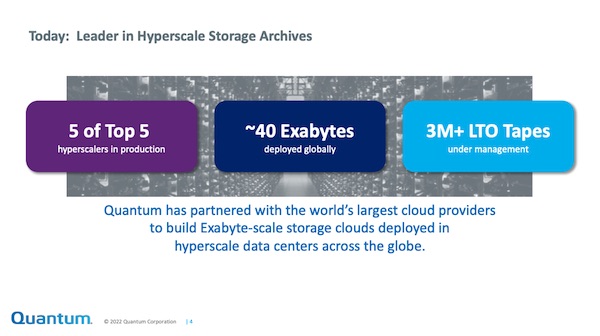

Lerner shared some interesting comments about hyperscaler adoption. These companies' demand for drastically lower long-term storage costs forced Quantum to redesign its tape library architecture and offer a new, dedicated model. The company has equipped 5 hyperscalers with this new tape library, as the image below shows. This is what the Scalar i6H represents: very low cost, highly modular, easy to deploy, maintain, replace and use, and fast enough for hyperscaler usage. The tape library architecture changes, but it is still based on LTO tapes and tape drives. It is coupled with software, which invites us to note that, under Lerner's leadership, Quantum has morphed into a real software machine.

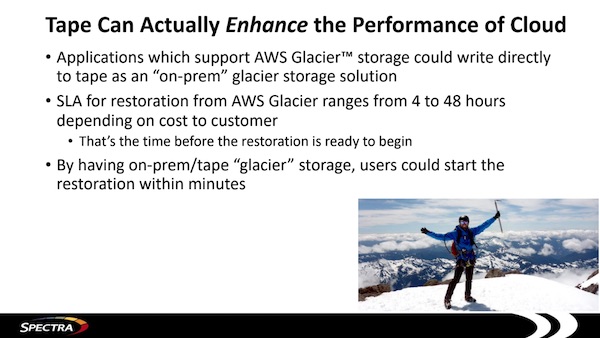

Nathan Thompson, CEO of Spectra Logic, chose to present how modern times are bringing new usages to tape, especially by promoting an object interface such as S3 as the access method to tape libraries. This clearly differs from the past, when tape was used directly by backup, HSM or MAM applications. The new S3-to-tape model, which some people may argue is the new generation of VTL, promises to give this world a new life by providing remote access that was impossible in the past. As S3 has become a de facto standard, promoting this combination really makes sense, both for on-premises deployments and in the cloud. We count at least 8 solutions around this topic, illustrating a growing market trend. Spectra Logic also presented how it successfully escaped and recovered from a ransomware attack thanks to solid data protection discipline, processes, and backup images on disk and tape.
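
To make the S3-to-tape idea more concrete, here is a toy Python model. It is not Spectra Logic's or any other vendor's product, and it does not call the real S3 API. It assumes one plausible design: PUTs land on a disk cache, a background job migrates objects to tape in batches, and reading a tape-only object requires an explicit restore first, similar in spirit to how S3 handles archive storage classes. The class, exception and method names are hypothetical; the method names only loosely echo S3 operations.

```python
from dataclasses import dataclass, field


class ObjectOnTapeError(Exception):
    """Raised when a GET targets an object that currently lives only on tape."""


@dataclass
class TapeBackedBucket:
    """Toy model of an S3-style bucket fronting a tape tier (hypothetical)."""

    cache: dict = field(default_factory=dict)  # disk staging area: key -> bytes
    tape: dict = field(default_factory=dict)   # stand-in for tape cartridges: key -> bytes

    def put_object(self, key: str, data: bytes) -> None:
        # Writes land on the disk cache first; tape is written later in batches.
        self.cache[key] = data

    def migrate_to_tape(self) -> int:
        # Move every cached object to tape and free the cache; return how many moved.
        moved = 0
        for key in list(self.cache):
            self.tape[key] = self.cache.pop(key)
            moved += 1
        return moved

    def restore_object(self, key: str) -> None:
        # Recall a tape-resident object into the cache (a cartridge mount and read on real hardware).
        self.cache[key] = self.tape[key]

    def get_object(self, key: str) -> bytes:
        # Serve from cache; tape-only objects must be restored before they can be read.
        if key in self.cache:
            return self.cache[key]
        if key in self.tape:
            raise ObjectOnTapeError(f"{key!r} is on tape; restore it first")
        raise KeyError(key)


if __name__ == "__main__":
    bucket = TapeBackedBucket()
    bucket.put_object("scan-001.tif", b"raw image bytes")
    bucket.migrate_to_tape()
    try:
        bucket.get_object("scan-001.tif")
    except ObjectOnTapeError:
        bucket.restore_object("scan-001.tif")  # slow path: recall from tape
    print(bucket.get_object("scan-001.tif"))
```

The explicit restore step is the design choice that matters here: it exposes tape latency to the client instead of hiding it behind a blocking GET, which is one way an object interface can front a medium that is cheap and dense but slow to access.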

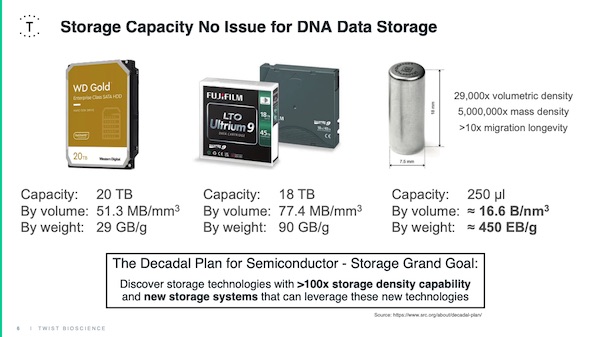

Steffen Hellmold of Twist Bioscience gave a state-of-the-art presentation on DNA data storage and clearly said that, even if there are lots of promises, solutions are not ready for real production, with serious challenges still to solve, especially in the writing process, when DNA is synthesized. That said, Hellmold mentioned pilot projects at various sites such as Netflix, Microsoft, Unicef and Yale University. He also described the two solution directions: a vault approach and a library approach.

The conference, a dense two-day program, was once again an opportunity to meet face-to-face with many key industry players, vendors and users alike, promoting new development angles to address new challenges, perfectly illustrated by hyperscalers. And even though the event is organized by Fujifilm, all forms of storage were covered, which is great, as the reality is that all media coexist within data centers. In other words, disk needs tape, tape needs disk, and tape is hot again, but new technologies are coming.
