Liqid Collaborates With MemVerge and Intel to Deliver Composable Memory Solutions for Big Memory Computing

Delivering software-defined, low-latency data centers with ability to pool and orchestrate for DRAM-like data speeds

This is a Press Release edited by StorageNewsletter.com on March 31, 2022 at 2:01 pm

Liqid, Inc. is collaborating with big memory solutions provider MemVerge and Intel Corp. to deliver composable memory solutions for big memory computing.

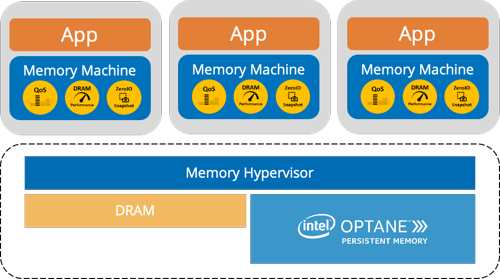

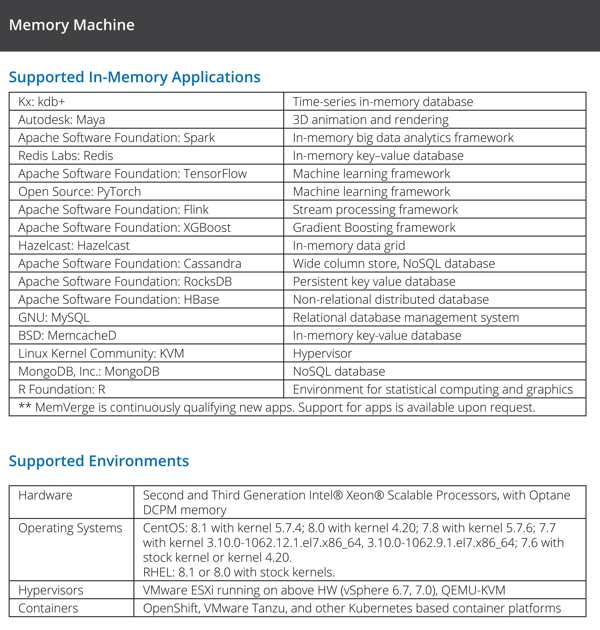

With Liqid Matrix composable disaggregated infrastructure (CDI) software and MemVerge Memory Machine software, the two companies can pool and orchestrate DRAM and storage-class memory (SCM) devices such as Intel Optane Persistent Memory (PMem) in flexible configurations with GPU, NVMe storage, FPGA, and other accelerators to match unique workload requirements. The joint solutions, now available, deliver scale for memory-intensive applications across a variety of customer use cases, including AI/ML, HPC, in-memory database, and data analytics.

“We are pleased to collaborate with technology industry leaders like MemVerge and Intel, leveraging our shared expertise in software-defined data center architectures to deliver composable, big-memory solutions today,” said Ben Bolles, executive director, product management, Liqid. “Intel Optane-based solutions from MemVerge and Liqid provide near-memory data speeds for applications that must maximize compute power to effectively extract actionable intelligence from the deluge of real-time data.”

Pool and orchestrate Optane Persistent Memory for memory-hungry applications

DRAM costs and the physical limitations of the media have hampered deployment of powerful, memory-centric applications across use cases in enterprise, government, healthcare, digital media, and academia. Customers who need big memory solutions can deploy fully composable, disaggregated, software-defined big memory systems that combine MemVerge’s in-memory computing expertise with Liqid’s ability to compose for Optane PMem and other valuable accelerators.

“Our composable big memory solutions are an important part of an overall CDI architecture,” said Bernie Wu, VP, business development, MemVerge. “The solutions are also a solid platform that customers can leverage for deployment of future memory hardware from Intel, in-memory data management services from MemVerge, and composable disaggregated software from Liqid.”

MemVerge Memory Machine dashboard screenshot


With joint big memory CDI solutions from Liqid and MemVerge, users can:

- Achieve exponentially higher utilization and capacity for Optane PMem with MemVerge Memory Machine software;
- Enable low latency, PCIe-based composability for Optane-based MemVerge solutions with Liqid Matrix CDI software;
- Granularly configure and compose memory resources in tandem with GPUs, FPGAs, or network resources on demand to support workload requirements, then release those resources for use by other applications once the workload is complete;
- Pool and deploy Optane PMem in tandem with other data center accelerators to reduce big memory analytical operations from hours to minutes;
- Increase VM and workload density for enhanced server consolidation, resulting in reduced capital and operational expenditures;
- Enable far more in-memory database computing for real-time data analysis and improved time-to-value.

Building bridge to future CXL performance

CXL, a next-gen, industry-supported interconnect protocol built on the physical and electrical PCIe interface, promises to disaggregate DRAM from CPU. This allows both the CPU and other accelerators to share memory resources at previously impossible scales and delivers the final disaggregated element necessary to implement truly software-defined, fully composable data centers.

“The future transformative power of CXL is difficult to overstate,” said Kristie Mann, VP of product, Optane Group, Intel. “Collaboration between organizations like MemVerge and Liqid, whose respective expertise in big memory and PCIe-composability is well recognized, delivers solutions that provide functionality now that CXL will bring in the future. Their solution creates a layer of composable Intel Optane-based memory for true tiered memory architectures. Solutions such as these have the potential to address today’s cost and efficiency gaps in big-memory computing, while providing the perfect platform for the seamless integration of future CXL-based technologies.”
