Top Companies in Distributed Cloud File Storage

Panzura, Nasuni, CTERA, then Hammerspace, LucidLink and Peer Software

This is a Press Release edited by StorageNewsletter.com on September 16, 2021 at 1:33 pm

This report was written on September 2, 2021 by analysts Enrico Signoretti and Max Mortillaro for GigaOm.

GigaOm Radar for Distributed Cloud File Storage v 2.0

An Assessment Based on the Key Criteria Report for Evaluating Cloud File Storage

1. Summary

File storage is a critical component of every hybrid cloud strategy, especially for use cases that support collaboration. Among file storage solutions, notably those that support collaboration, there is a steep increase in the popularity of distributed cloud file storage. There are several reasons for this trend: these solutions are readily available and simple to deploy; they rely on the cloud as their backbone, making them geographically available nearly everywhere; and they are simple for end users to operate.

From a cost perspective, these solutions also can help organizations shift from a CapEx cost model toward an OpEx model. They no longer need to purchase infrastructure upfront at multiple physical locations and instead can pay for the capacity they really use without making massive investments.

One of the other major advantages of distributed cloud file storage is its ubiquity, which makes it perfect for remote work. Remote work was already on the rise before the global Covid-19 pandemic, but sudden, drastic measures taken to protect public health had a significant impact on everyday logistics for organizations and workers. Remote collaboration was suddenly no longer a matter of choice or policy, and organizations quickly needed to find ways to do it better.

The classic hub and spoke architecture, in which remote workers connect to their corporate network and access files on a NAS, is no longer scalable. Data must now be available everywhere, instantly, and be adequately protected against threats such as ransomware. Organizations also need clear insights into their data: what data is generated, by whom, and how fast the footprint is increasing. They need clear visibility into the cost impact of data growth, ways to manage and clean up stale data, and the means to observe and take action on abnormal activities.

While distributed cloud file storage tenets are well established and most solutions propose a solid distributed architecture, there are new challenges on the horizon. Regulatory constraints also impact data governance. Consumer protection laws such as GDPR, CCPA, and others give customers greater control over their data and impact how organizations manage that data.

Nation-states are also starting to understand that data is a strategic asset and to impose strict data sovereignty regulations that require data assets to be physically stored within the given country’s territories. Data classification is another challenge that has been around since even before distributed cloud file storage was available. Data needs to be tracked, classified, and treated appropriately, usually to comply with country or industry regulatory bodies.

2. Market Categories and Deployment Types

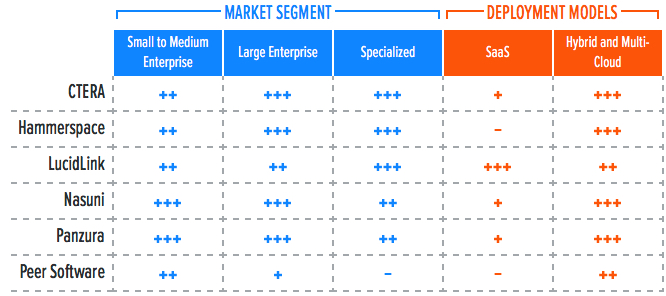

For a better understanding of the market and vendor positioning (Table 1), we assess how well solutions for distributed cloud file storage are positioned to serve specific market segments.

• Small-to-medium enterprise: In this category, we assess solutions on their ability to meet the needs of organizations ranging from small businesses to medium-sized companies. We also assess departmental use cases in large enterprises, where ease of use and deployment are more important than extensive management functionality, data mobility, and feature set.

• Large enterprise: These offerings are assessed on their ability to support large and business-critical projects. Optimal solutions in this category will have a strong focus on flexibility, performance, data services, and features to improve security and data protection. Scalability is another big differentiator, as is the ability to deploy the same service in different environments.

• Specialized: Optimal solutions are designed for specific workloads and use cases; for example, handling massively large files (M&E, healthcare verticals, and so on). When a solution fits a specialized use case, this will be mentioned in the appropriate section.

We also recognize 2 deployment models for solutions in this report: cloud-only or hybrid and multi-cloud.

• SaaS: The solution is available in the cloud as a managed service. Often designed, deployed, and managed by the service provider or the storage vendor, it is available only from that specific provider. The big advantages of this type of solution are simplicity and integration with other services offered by the cloud service provider.

• Hybrid and multi-cloud solutions: These solutions are meant to be installed both on-premises and in the cloud, fitting in with hybrid or multi-cloud storage infrastructures. Integration with a single cloud provider could be limiting compared to the other option and more complex to deploy and manage. On the other hand, they are more flexible, and the user usually has more control over resource allocation and tuning throughout the entire stack.

Table 1. Vendor Positioning

*** Exceptional: Outstanding focus and execution

** Capable: Good but with room for improvement

* Limited: Lacking in execution and use cases

– Not applicable or absent

3. Key Criteria Comparison

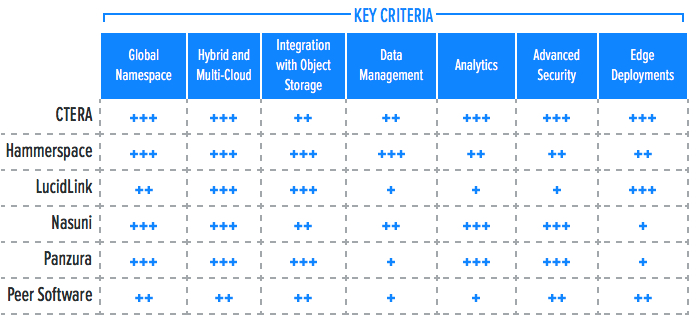

Building on the findings from the GigaOm report, “Key Criteria for Evaluating Cloud File Storage,” Table 2 summarizes how each vendor included in this research performs in the areas we consider differentiating and critical in this sector. The objective is to give the reader a snapshot of the technical capabilities of different solutions and define the perimeter of the market landscape.

Table 2. Key Criteria Comparison

*** Exceptional: Outstanding focus and execution

** Capable: Good but with room for improvement

* Limited: Lacking in execution and use cases

– Not applicable or absent

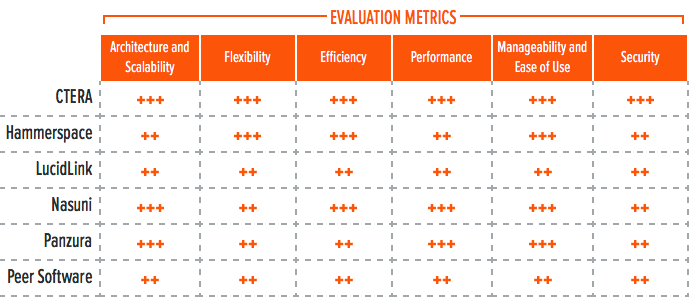

Table 3. Evaluation Metrics Comparison

*** Exceptional: Outstanding focus and execution

** Capable: Good but with room for improvement

* Limited: Lacking in execution and use cases

– Not applicable or absent

By combining the information provided in the tables above, the reader can develop a clear understanding of the technical solutions available in the market.

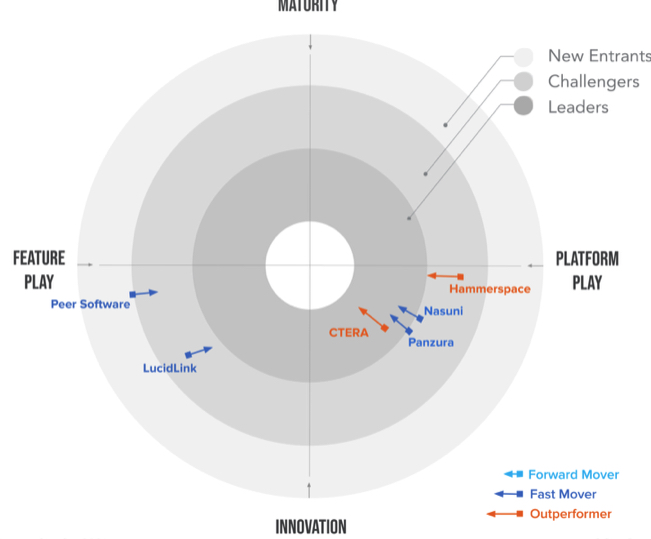

4. GigaOm Radar

This report synthesizes the analysis of key criteria and their impact on evaluation metrics to inform the GigaOm Radar graphic in Figure 1. The resulting chart is a forward-looking perspective on all the vendors in this report, based on their products’ technical capabilities and feature sets.

Figure 1. GigaOm Radar for Distributed Cloud File Storage

The GigaOm Radar plots vendor solutions across a series of concentric rings, with those set closer to center judged to be of higher overall value. The chart characterizes each vendor on two axes – Maturity vs. Innovation and Feature Play vs. Platform Play – while providing an arrow that projects each solution’s evolution over the next 12 to 18 months.

As you can see in the Radar chart in Figure 1, there are 2 groups of clearly distinguishable solutions.

The first group is located on the lower right side of the radar graph, an area that demonstrates focus on innovation and an end-to-end platform approach. This area shows 4 vendors, with 3 of them forming a leading group of aggressively competing and innovative solutions exclusively focused on delivering distributed cloud file storage solutions: Panzura, Nasuni, and CTERA.

CTERA is the leader of this radar and leads the innovation/platform-oriented solutions pack as well, thanks to a comprehensive and well-balanced approach to addressing the challenges of distributed cloud file storage and collaboration. The solution achieves outstanding ratings across a majority of key criteria and evaluation metrics. CTERA also proved to be the absolute leader in terms of edge deployment capabilities, providing organizations with the highest flexibility, enabling simplified edge data access, and offering comprehensive edge deployment options.

It is followed closely by 2 strong challengers – Nasuni and Panzura – which both demonstrate excellent capabilities. Although each solution is architected differently, they offer similar outcomes to customers. Panzura excels in integration with object storage, while Nasuni offers superior data management. Both solutions boast a solid value proposition.

Slightly behind the leaders’ pack, we find Hammerspace. The solution is suited to serve distributed cloud file storage requirements, but was built with more versatility and use cases in mind. This explains a stronger focus on building a robust architectural foundation that excels in hybrid and multi-cloud capabilities, as well as integration with object storage. While other key criteria are not yet as well developed as they are by the previously mentioned vendors, as reflected in the positioning and ratings, the comprehensive nature of this solution grants it its place among the platform players.

The lower-left side of the radar focuses on innovative solutions more focused on specific use cases or features. This includes 2 solutions: LucidLink and Peer Software.

LucidLink focuses on globally, instantly accessible data with one particularity: data is streamed as it is read. Streaming makes the solution especially well-suited for industries and use cases that rely on remote access to massive, multi-terabyte files, such as the M&E industry. LucidLink’s capabilities in this area are unmatched. The solution also shows excellent integration with object storage and great support of hybrid and multi-cloud models.

Peer Software has an interesting approach. This solution was conceived around the premise that organizations can build an abstracted distributed file service on top of existing storage infrastructure. It also supports scalability in the cloud. This makes Peer Software best in class when it comes to protecting existing storage investments and extending their usage to distributed cloud file storage.

5. Vendor Insights

CTERA

It presents a distributed cloud file storage platform built around the CTERA Global File System (GFS), which can reside in the cloud as well as in on-premises infrastructure. GFS presents file interfaces on the front end and leverages private or public S3 object storage on the back end.

Organizations can access GFS through 3 components: a CTERA Edge Filer gateway (SMB/NFS network filer, which can be deployed centrally or at edge locations with a compact HC100 Edge Filer), the CTERA Drive (a desktop/VDI agent), or the CTERA mobile application.

CTERA Direct was introduced in version 7.0. This is a new accelerated edge-to-cloud bidirectional sync protocol that enhances existing source-based de-dupe and compression by increasing parallelism. This protocol allows wire-speed cloud throughput while operating at lower latencies than before in the 200ms range. This makes the solution suitable for data-intensive workloads. The solution is managed by the CTERA Portal, which also handles the Global File System.

Since CTERA implements a global namespace, organizations can define zones dedicated to various departments or tenants. Administrators can define granular permissions around zones and authorized users. These zones also can segment data geographically for each tenant, or enforce data sovereignty requirements in specific jurisdictions.

The global namespace is fully transparent to the end users. Administrators can use CTERA Migrate, a built-in migration engine, to discover, assess, and automatically import file shares from existing NAS systems.

The solution comes with broad deployment options that cover the edge, core infrastructure, and the cloud. It concurrently supports multiple clouds, so users can transparently migrate data between clouds or from/to on-premises object stores.

When customers use AWS S3 as the backend object storage for CTERA, the solution can use AWS S3 Intelligent tiering, which allows for moving data between the S3 frequent and S3 infrequent access tiers, helping organizations achieve further cost savings. CTERA also can be deployed entirely on-premises in a fully private architecture, meeting stringent security requirements from several homeland security customers.

CTERA Insight provides data analytics capabilities. This data visualization service analyzes file assets by type, size, and usage trends; and presents the information through a well-organized, customizable user interface. Besides data insights, this interface also provides real-time usage, health, and monitoring capabilities, encompassing not only central components but also edge appliances. CTERA’s SDK allows API integrations, as well as S3 connectors to allow microservices to perform data management related tasks.

Audit trails are integrated in the solution’s security features, and it provides specific ransomware protection. The solution uses WORM snapshots as basic ransomware protection because read-only/immutable snapshots are the foundation of ransomware protection. Additional capabilities are provided through a third-party integration with Varonis to monitor and analyze logs, assist with data classification, and identify risky permission settings.

The solution’s architecture allows edge deployments for small branches or heavy-duty work-from-home (WFH) users through the remotely managed CTERA HC100 Edge appliance, a filer with 1 TB embedded NVMe cache. Mobile collaboration is also possible through the CTERA mobile application.

Strengths: CTERA demonstrates a broad coverage of methods to access its Global File System with multiple options, as well as solutions built for edge use cases and the recent rise of WFH, making it particularly apt for full-scale collaboration. Great migration engine and multi-tenancy capabilities. The excellent high-throughput, low-latency capabilities of the CTERA Direct transfer protocol are also worth mentioning.

Challenges: Native data management capabilities are currently limited. Customers would benefit from improvements in this area. Third-party integrations with Varonis provide excellent outcomes, but some organizations may prefer advanced security capabilities fully developed in-house by CTERA.

Hammerspace

It offers a global file system, which helps overcome the siloed nature of hybrid cloud file storage by providing a single file system regardless of the site’s geographic location or whether storage is on-premises or cloud-based. It also separates the control plane (metadata) from the data plane (where data actually resides, whether on-premises or cloud).

The solution can utilize, access, store, protect, and move data around the globe through a single global namespace. The user remains unaware of where resources are physically located. The product uses metadata across file system standards and includes telemetry data (such as IO/s, throughput, and latency) as well as user-defined and analytics-harvested metadata to rapidly view, filter, and search metadata in place, instead of relying on file names.

Companies can deploy Hammerspace on-premises or in the cloud, with support of AWS, Azure, and Google Cloud Platform. It uses share-level snapshots as well as comprehensive replication capabilities, allowing for automated file replication across different sites through the Hammerspace Policy Engine.

Manual replication activities are available on-demand as well. These options let organizations implement multi-site, active-active DR with automated failover and failback. Integration with object storage is also a core capability of Hammerspace, as data can not only be replicated/saved to the cloud but also be automatically tiered on object storage. This reduces the on-premises data footprint and leverages cloud economics to keep storage spend under control.

One of the key capabilities of Hammerspace is “Autonomic Data Management.” This is an ML engine that runs a continuous market economy simulation. When combined with telemetry data from a customer’s environment, it helps make real-time, cross-cloud data placement decisions based on performance and cost. Although Hammerspace categorizes this feature as Data Management, the reader should be advised that in the context of the GigaOm Key Criteria for Cloud File Storage (and as displayed in Table 2 above), this capability is related more closely to two other key criteria: Integration with Object Storage and Hybrid and Multi-Cloud.

Ransomware protection is offered by Hammerspace through immutable file shares with global snapshot capabilities, as well as an undelete function.

Organizations evaluating Hammerspace should take into consideration the impact of the global snapshot feature on replication intervals when scaling up the number of Hammerspace sites. With 8 sites, Hammerspace performs at its best with replication intervals of seconds. With 24 or 32 sites, this interval will go to more than 20s with even bigger nodes.

Strengths: The Hammerspace product offers a balanced set of capabilities with its Distributed Global File System, including replication and multi- and hybrid cloud capabilities through the power of metadata.

Challenges: For heavily distributed organizations, operation of the global snapshot feature can have a detrimental impact on replication intervals. This can pose a challenge to organizations with an elevated data change rate.

LucidLink

It proposes an innovative approach to distributed cloud file storage with a solution that stores files in an S3-compatible object storage backend and streams files on demand. When a file is stored in LucidLink, the metadata is separated from the actual data and the file is split into multiple chunks, each of which is stored as an individual S3 object in the backend.

Applications still see the file as a single entity, and LucidLink streams the file in the backend, presenting the data to the application as requested, without affecting performance or bandwidth. This method of handling data lets organizations work seamlessly with multi-terabyte files without having to wait for full file replication/synchronization. This shortcut makes it particularly well-suited for organizations manipulating huge files, such as the M&E industry.
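The chunk-and-stream idea described above can be illustrated with a minimal sketch. This is not LucidLink's implementation: the chunk size, the key naming scheme, and the in-memory dictionary standing in for an S3 bucket are all assumptions made for illustration. It only shows why a read of a small byte range needs to fetch the few objects that cover that range rather than the whole file.

```python
from typing import Dict, List, Tuple

# Hypothetical, deliberately tiny chunk size so the example is easy to trace.
CHUNK_SIZE = 4


def object_key(file_id: str, index: int) -> str:
    """Build the (hypothetical) object key for one chunk of a file."""
    return f"{file_id}/{index:08d}"


def split_into_chunks(file_id: str, data: bytes,
                      chunk_size: int = CHUNK_SIZE) -> Dict[str, bytes]:
    """Split a file into fixed-size chunks, one object per chunk.

    The returned dict stands in for an object store bucket.
    """
    store: Dict[str, bytes] = {}
    for index, offset in enumerate(range(0, len(data), chunk_size)):
        store[object_key(file_id, index)] = data[offset:offset + chunk_size]
    return store


def read_range(store: Dict[str, bytes], file_id: str, offset: int, length: int,
               chunk_size: int = CHUNK_SIZE) -> Tuple[bytes, List[str]]:
    """Read `length` bytes at `offset`, fetching only the chunks that overlap.

    Returns the requested bytes and the list of object keys that were fetched.
    """
    if length <= 0:
        return b"", []
    first = offset // chunk_size
    last = (offset + length - 1) // chunk_size
    fetched = [object_key(file_id, i) for i in range(first, last + 1)]
    blob = b"".join(store[key] for key in fetched)
    start = offset - first * chunk_size  # position of `offset` inside the blob
    return blob[start:start + length], fetched


store = split_into_chunks("video", b"abcdefghijklmnop")
payload, keys = read_range(store, "video", offset=5, length=6)
```

In this toy run the 16-byte file becomes four objects, and reading six bytes at offset 5 touches only two of them. A real client would add the caching, prefetching, compression, and encryption that the report attributes to the LucidLink Client.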

From an architectural perspective, the solution consists of two components: the LucidLink Client and the LucidLink Service. The client runs at the OS level on servers, desktops, and mobile platforms, presenting files as if they are local and handling compression, prefetching, encryption, and caching.

The LucidLink service runs in the cloud and manages metadata coordination, garbage collection, file locking, snapshotting, and other optimization activities. The metadata is encrypted by the client, then synchronized across all connected clients to ensure LucidLink has zero knowledge of either the data or metadata. Data itself is stored on the object store.

Because LucidLink relies primarily on public cloud object storage providers, organizations can implement large volumes in the petabyte range with hundreds of millions of files on each volume. The solution supports any S3 object store, whether on-premises or in the cloud, as well as on Microsoft Azure Blob.

Streaming data from the cloud has an impact on egress fees. LucidLink partners with Wasabi for general purpose storage (leveraging free egress traffic) and IBM COS for high performance access (with attractive egress traffic pricing). Customers are also able to provide their own cloud storage if they wish.

The solution offers a shared global namespace and full filesystem semantics, but data management capabilities are currently lacking.

From a security perspective, LucidLink fully supports in-flight and at-rest encryption as well as multi-tenancy. Organizations bring their own key management systems and users bring their own encryption keys. Data is encrypted per tenant and protected at the filesystem level by read-only snapshots. However, advanced security capabilities such as protection against ransomware and auditing are not exposed through the user interface, although users can request custom reports via a support ticket.

The management interface of LucidLink is well designed; however, analytics and monitoring capabilities are an area for potential improvement, with advanced capabilities mostly on the roadmap.

Strengths: LucidLink offers a compelling solution for organizations that require data to be stored globally and accessible instantly. Its architecture enables users to access huge files seamlessly through residential network connections, making collaboration and remote work completely transparent.

Challenges: The solution lacks data management capabilities. Analytics and monitoring are currently limited. There are solid foundational security capabilities, but advanced security capabilities such as ransomware protection and auditing are missing.

Nasuni

It offers a SaaS solution for enterprise file services, with an object-based global file system as its main engine and with many familiar file interfaces, including SMB and NFS. It is integrated with all major cloud providers and works with on-premises S3-compatible object stores.

Many Nasuni customers implement the solution to replace traditional NAS systems. Its characteristics let users replace several additional infrastructure components such as backup, archiving platforms, and more. The company recently added features for improved ransomware protection and advanced data management, making the solution even more compelling to enterprise users.

During the Covid pandemic, organizations learned that data and applications must be globally accessible, scalable, and secure. With users now spread across smaller remote offices or working from home, global access to applications and data is a requirement.

Nasuni offers its UniFS as a global file system, which provides a layer that separates files from storage resources. The system manages one master copy of data in public or private cloud object storage while distributing data access. The global file system manages all metadata, such as versioning, access control, audit records, and locking, and provides access to files via standard protocols such as SMB and NFS.

Files in active use are cached using Nasuni’s Edge Appliances, so users benefit from high performance access through existing drive mappings and share points. All files, including files in use across multiple local caches, have their master copies stored in cloud object storage so they are globally accessible from any access point.

The Nasuni Management Console delivers centralized management of global edge appliances, volumes, snapshots, recoveries, protocols, shares, and more. The web-based interface offers point-and-click configuration, but Nasuni also offers a REST API method for automated monitoring, provisioning, and reporting across any number of sites. The Nasuni Health Monitor also reports to the Nasuni Management Console on the health of CPU, directory services, disk, file system, memory, NFS, network, SMB, services, and so on. Nasuni also integrates with tools like Grafana and Splunk for further analytics.

Nasuni’s security capabilities let customers configure file system auditing and logging for operations on volumes. Syslog Export sends notifications and auditing messages to syslog servers. Auditing volume events such as Create, Delete, Rename, and Security helps customers identify and recover from ransomware attacks.

As an alternative to its own auditing options, Nasuni also supports auditing by Varonis. Nasuni protects files efficiently and quickly, and can dial back to a pre-ransomware state in seconds or minutes. Nasuni Continuous File Versioning allows for a nearly infinite number of snapshots as immutable copies, so customers can recover easily to any point in minutes to quickly mitigate ransomware.

Nasuni Edge Appliances are lightweight VMs or hardware appliances that cache frequently-accessed files wherever SMB or NFS access is needed. They can be deployed on-premises or in the cloud to replace legacy file servers and NAS devices. The Nasuni Edge Appliances encrypt and dedupe files, then snapshot them at frequent intervals to the cloud where they are written to object storage in read-only format. This results in files that are always available to remote users, even if specific sites or file servers go down.

Strengths: Nasuni offers an efficient, secure, and scalable distributed file system solution. It offers fine-grained protection against ransomware, and with edge appliances, customers can access frequently used data rapidly and securely.

Challenges: Work-from-home users became an important asset during the pandemic. Nasuni offers a web interface to download files, but should look into a better edge solution for WFH users that is on par with the edge solution that can be used in the office.

Panzura

CloudFS is the distributed file storage offering from the company. This solution works across sites (public and private clouds) and provides a single data plane with local file operation performance, automated file locking, and immediate global data consistency.

The solution implements a global namespace and tackles data integrity requirements through a global file locking mechanism. This file locking provides real-time data consistency regardless of the location from which a file is accessed. It also provides efficient snapshot management with version control and the ability to configure retention policies, as well as backup and DR capabilities.

The firm relies on S3 object stores and supports a range of object storage solutions, whether in the public cloud (AWS S3, Azure Blob) or on-premises, with Cloudian or IBM Cloud Object Storage (COS). A feature called Cloud Mirroring allows for multi-backend capabilities by writing data to a second cloud storage provider to ensure data is always available, even in the event of failure at one of the cloud storage providers. Panzura also provides tiering and archiving.

Panzura Data Services provides data analytic capabilities. These advanced features provide global search, user auditing, one-click file restoration, and monitoring functions aimed at core metrics and storage consumption. These features can show, for example, frequency of access, active users, and environmental health. Panzura provides various API services that let users connect their data management tools to it. Panzura Data Services can also detect infrequently accessed data for subsequent action.

Security capabilities include user auditing (through Panzura Data Services), as well as ransomware protection. Ransomware protection is handled through a combination of immutable data (WORM S3 backend) and read-only snapshots taken every 60s at the global filer level. The solution also regularly moves data to the immutable object store, and allows seamless data recovery in case of a ransomware attack through backup services.

Key management for encryption (at rest and in flight) occurs through a bring-your-own key management system model. The solution also includes a Secure Erase feature that removes all versions of deleted files and subsequently overwrites deleted data with zeros, a feature available even with cloud-based object storage.

Edge access is provided via two methods: accessing the data through a VPN and into a corporate network, or using a mobile client, although this second method doesn’t yet support check-in/check-out of files.

Strengths: Panzura offers comprehensive distributed file storage for local performance with global availability, data consistency, tiered storage, and multi-backend capabilities. Panzura Data Services delivers advanced analytics and data management capabilities that help organizations better understand and manage their data footprint.

Challenges: Edge access capabilities need to be revisited to provide a seamless user experience. Currently, data access is possible only through a corporate VPN, and mobile client functions do not support file check-in/check-out mechanisms.

Peer Software

It takes an interesting approach to distributed cloud file storage with its Global File Service (GFS) solution. Whereas other vendors implement a global distributed file system, Peer Software implements a distributed service on top of existing file systems across heterogeneous storage vendors. This solution’s key attributes include global file locking, active-active data replication across sites, and centralized backup.

PeerGFS architecture consists of two components: the PeerGFS Agent and the PeerGFS Hub. The PeerGFS Hub is in charge of configuration, management, and monitoring, as well as acting as a message broker that talks to other PeerGFS Hubs.

PeerGFS has several specificities. Instead of implementing a proprietary global namespace, it uses Microsoft DFS to create a namespace across hybrid and multi-cloud environments. Companies can deploy this solution on-premises or in the cloud. It also supports most hardware-based file systems with SMB support, Azure Blob, and any S3-compatible public cloud storage. NFS support is available to a limited subset of customers.

Peer Software’s solution can replicate data across data centers and clouds in an active-active model. It also supports multi-cloud implementations and replication across availability zones. Only delta changes are replicated to save bandwidth and speed up transfers.
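To make the bandwidth argument for delta replication concrete, here is a minimal sketch of one common way to compute and apply a delta: comparing fixed-size block hashes. The report does not describe how Peer Software detects changes, so the block size, the function names, and the hashing approach are all assumptions for illustration, not a description of PeerGFS.

```python
import hashlib
from typing import Dict, List, Tuple

# Hypothetical, deliberately tiny block size so the example is easy to trace.
BLOCK = 4


def block_hashes(data: bytes, block: int = BLOCK) -> List[str]:
    """Hash every fixed-size block of a file version."""
    return [hashlib.sha256(data[i:i + block]).hexdigest()
            for i in range(0, len(data), block)]


def compute_delta(old: bytes, new: bytes,
                  block: int = BLOCK) -> Tuple[Dict[int, bytes], int]:
    """Return only the blocks of `new` that differ from `old`, plus the new length."""
    old_hashes = block_hashes(old, block)
    delta: Dict[int, bytes] = {}
    for index, offset in enumerate(range(0, len(new), block)):
        chunk = new[offset:offset + block]
        changed = (index >= len(old_hashes)
                   or hashlib.sha256(chunk).hexdigest() != old_hashes[index])
        if changed:
            delta[index] = chunk
    return delta, len(new)


def apply_delta(old: bytes, delta: Dict[int, bytes], new_len: int,
                block: int = BLOCK) -> bytes:
    """Rebuild the new version on the remote side from its old copy and the delta."""
    out = bytearray(old[:new_len].ljust(new_len, b"\0"))
    for index, chunk in delta.items():
        out[index * block:index * block + len(chunk)] = chunk
    return bytes(out)


old_version = b"aaaabbbbcccc"
new_version = b"aaaaBBBBccccdd"
delta, new_len = compute_delta(old_version, new_version)
rebuilt = apply_delta(old_version, delta, new_len)
```

Here only two blocks (six bytes) travel between sites instead of the full 14-byte file. Production systems typically rely on file system change notifications or rolling checksums rather than a full rescan, which this sketch does not attempt.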

Using another Peer Software solution called PeerSync lets companies perform mitigation functions. Data can be backed up to S3-compatible object stores and public cloud platforms. Backed up data is stored as individual files, which enables file-level restores and allows immediate data reuse for use cases relying on file-based datasets.

The product delivers monitoring and capabilities through the PeerGFS management interface. The ability to detect user/application activity on the distributed file service is built into PeerGFS through an analysis and detection engine named Peer Malicious Event Detection (MED). This engine analyzes the environment and bait files to detect malicious activity, including ransomware attacks. Pattern matching is another way to identify malicious activity by looking at certain types of behaviors. The last measure is trap folders, a series of hidden, recursive folders created by Peer MED to target malware.

Whenever one of these activities happens or files are touched, it triggers Peer MED’s action engine, which results in a variety of responses: anything from an alert to disabling an agent and stopping a job. Further ransomware protection measures, such as enabling immutable snapshots on the target S3 storage used for backups, have yet to be implemented.

Another Peer solution, PeerFSA, provides insights about an organization’s environment and creates reports about time-based metrics/usage, directories, users, and file types. Those reports are static and delivered in an Excel format.

There is no edge access model currently available, as the solution relies on its hub/agent architecture to operate. Users have to connect to the corporate network to access standard file resources monitored by PeerGFS.

Strengths: Peer Software has an interesting approach that lets organizations implement a distributed cloud file storage solution on top of existing infrastructure and make the best use of existing investments. The Peer Malicious Event Detection Engine has a sophisticated approach to mitigating malware attacks.

Challenges: The design decision to act as a distributed file service layer on top of existing hardware makes the solution dependent on underlying hardware/software capabilities. Some features such as file system analytics should be developed further to become interactive and integrated into the management interface.
