Storage Battle Between AWS, GCP and Azure Continues

AWS once again confirms its goal of being considered a serious enterprise storage alternative

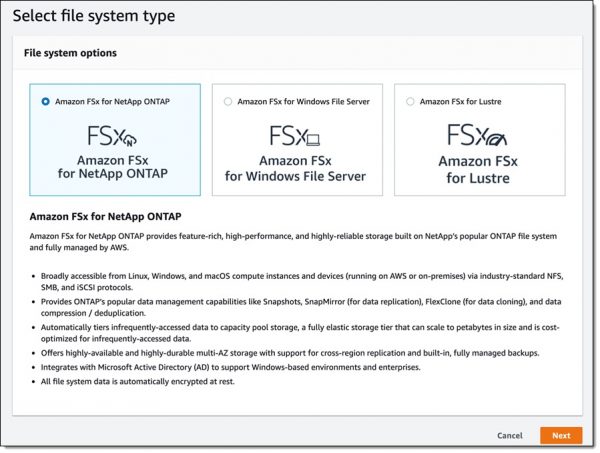

By Philippe Nicolas | September 15, 2021 at 2:02 pm

The recent AWS announcement of FSx for NetApp ONTAP invited us to think again about the 3 gorillas’ offerings in terms of storage and associated data management services.

At first, we were surprised to read such an announcement, but we quickly dug a bit to understand the rationale behind it, from AWS’ perspective but also from NetApp’s point of view.

Vendors have been touting on-premise value for quite some time now, promoting a hybrid model to limit the erosion of their data center footprint, while cloud providers claim you can do everything in the cloud. In fact, users don’t really care: they wish to run their applications and workloads anywhere, on-premise, in the cloud or even in a hybrid mode. In other words, they’re looking for an agnostic territory where they’re able to deliver their services safely, with a truly cost-effective model, enough performance and resiliency, and the capability to grow dynamically and seamlessly. It also means aligning the infrastructure with the workload in both directions, growing and shrinking dynamically. And the cloud, with its distributed and multi-geo presence, seems to be adequate for such needs. The difficulty is to find the cloud solution closest to what they use and run on-premise. This joint announcement is a new step in that direction, at least for AWS and for NetApp-based data and storage infrastructures.

We also recognize a special pattern in adopting the NetApp model. Users have done storage consolidation, and even workload consolidation, or I should say consolidation of usages on a global storage platform, for many, many years. It is always a key priority in the IT budget, with users trying to adapt to and control data growth in line with IT needs, market technology evolution and more and more demanding applications. Associated with that market dynamic is the need for unified storage, historically proposing block and file, but also file + object or file + object + block as a few vendors do. Remember some market approaches with block and object, which seemed a bit weird. And with the unified trend proven on-premise for enterprises of all sizes, we understand AWS’ wish to offer a similar model, with NetApp ONTAP being one of the most deployed storage platforms in that domain. It again illustrates the real U3 – Universal, Unified and Ubiquitous – categorization of market products.

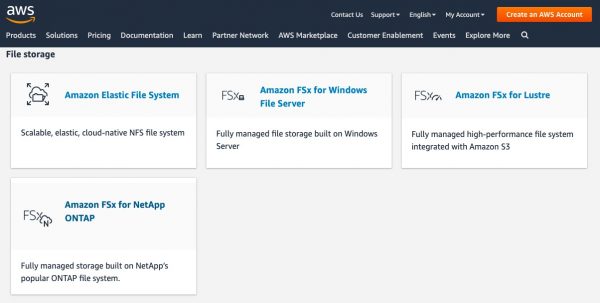

The opposite is true as well, as all cloud providers offer very granular data storage and management services, and this is also true outside of their storage offerings. We find a service for NFS, for SMB, for S3, for block, for transport/transfer… When you check the AWS storage choices on this page, the only promoted choice for block is EBS, omitting the NetApp block model with iSCSI offered with this FSx product.

This announcement makes Cloud Volumes Service obsolete, and the product has disappeared from the NetApp for AWS and AWS pages.

The value seems to lie clearly in the managed service aspect, and both AWS and NetApp argue that users won’t find any difference between a classic on-premise environment and this new one. As NetApp was/is famous for WAFL, its log-structured file system, it is surprising that neither AWS nor NetApp mentioned this fundamental data management layer at all in the press release or other related content. Having such a strong file system helps adoption, no doubt about it, and WAFL has proven its value with advanced data services, but users never use and consume WAFL directly: their file-based applications connect through NFS and/or SMB.

What we learn is that this new FSx service uses AWS systems with EBS, with a very limited volume size. By limited, we mean around 200TB.

Clearly AWS’ goal is to address each objection against cloud migration, and this step illustrates this effort. Running on-premise or in the cloud is the same, claims AWS. Sure, we can answer, if you rely on NetApp, but more broadly yes if you consider NAS access or block-based environments.

But reading the list of use cases, we are surprised once again. We see lift and shift to the cloud, business continuity and classic NAS utilization. For sure this represents tons of deployments, and if some specific needs appear, AWS answers with FSx Windows or FSx Lustre or even EFS. And users can extend their search with the rich marketplace.

Here is the current AWS Marketplace offering for File Storage:

| Companies | Product(s) |
|---|---|
| Buurst (formerly SoftNAS) | Cloud NAS |
| DDN | Cloud Edition for Lustre |
| Hammerspace | Hammerspace |
| IBM | Spectrum Scale (BYOL) |
| Morro Data | CacheDrive in Cloud |
| Nasuni | Cloud File Storage |
| NetApp | Cloud Volumes ONTAP |
| Nexenta (by DDN) | Cloud (native and BYOL) |
| Panzura | Freedom Hybrid-Cloud NAS, Freedom NAS (BYOL) |
| PixitMedia | PixStor |
| QNAP | QuTScloud |
| Qumulo | File Storage |
| ThinkParQ | BeeGFS |
| Veritas | InfoScale Enterprise (BYOL) |
| WekaIO | WekaFS |
| YottaStor | YottaDrive Cloud |
| Zadara | Virtual Private Storage Array |

As AWS promotes an experience similar to on-premise, several other file storage vendors commented on the deal. Remember that, globally, an AWS user can consider and use different approaches: a native AWS offering like EFS, FSx …, or a partner solution available on the marketplace, either native or via a BYOL model.

We asked a few vendors to comment on this announcement; some clearly have a point, and others validate the offering, probably protecting their AWS partnership.

- Nasuni – Andres Rodriguez, CTO and founder

“Most data on-prem is stored in file format so it is no surprise to see both NetApp and AWS recognize the shift for file storage to the cloud. NetApp has been the de facto standard for on premises file storage for years. Amazon FSx for NetApp ONTAP is meant to change as little as possible for those loyal NetApp customers that want NetApp in the cloud. It’s comfortable. It also squanders an opportunity to leverage cloud technology in order to address the issues around scale, cost and risk that are facing organizations today. The offering has a file system capacity limit of 192 terabytes. That is hardly cloud scale. They also cost pretty much the same as an equivalent hosted NetApp box. There is no real technology leverage in that. It also has a built-in backup limit of once a day, a backup retention limit of 30 days, and the same old 255 snapshot limit, which means a separate backup solution is required to really protect the data. That not only adds to the cost but leaves customers vulnerable to ransomware attacks. It can take days or weeks to restore FSx NetApp Volumes that have been compromised by ransomware. That is a risk that no organization deploying file servers in the cloud should still be exposed to. Lastly, this doesn’t take into account that most NetApp customers have a multi-vendor file infrastructure, with other storage hardware in the data center and Windows file servers in remote/branch offices. The cloud offers an opportunity to improve how all file data is stored, shared, managed, and protected, not just what’s on NetApp. AWS offers a formidable technology stack that can be leveraged to address all of these issues. The new offering from NetApp barely scratches the surface of what is already possible.”

- Pure Storage

“Amazon FSx is a validation of the importance of hybrid cloud. Customers have a broad range of needs around cloud and Pure is focused on helping them achieve their goals in challenging areas, such as lift & shift and migration of traditional apps (Cloud Block Store), as well as building cloud-native apps portability from on-prem to hybrid to multi-cloud.

Pure is seeing a strong traction and value in the file space working with customers on high-performance cloud-connected workloads such as EDA and tech computing. As a result, we’re investing more in that direction.”

- Qumulo – David Chapa, technical evangelist

“The net/net is that the complexity issues that customers deal with regarding NetApp are not eased with this new offering, rather they are further complicated. This announcement really validates our approach to cloud and strengthens our value proposition of providing a simple path from on-prem to the cloud. With this announcement, there are price, performance and scalability concerns, each of which is augmented with this offering (rather than simplified). In summary, legacy vendors like NetApp are simply bringing their outdated and complex architectures to the cloud without solving any of the on prem issues their customers currently face. It’s a lose/lose for a customer to go from one of these legacy vendors on prem to the cloud unless they can solve the issues they are facing, which is why we believe (and our customers agree!) that Qumulo is the best choice.

Customers come to Qumulo with 3 main concerns: performance, scale and cost. When I look at this offering, the throughput performance is low (512MB/s and as high as 2GB/s); to achieve the “petabyte” scale customers want, they presumably migrate from an unimpressive primary volume of 192TiB to an S3 bucket, much like an old-school HSM solution; and customers will pay more than twice what they would for a similarly configured Qumulo solution on AWS. Qumulo believes customers shouldn’t settle for anything less than the golden trifecta of performance, scale, and cost.”

- VAST Data – Jeff Denworth, CMO and founder

“Does lifting and shifting a legacy storage architecture to Amazon really deliver on the promise of cloud innovation? The same complexities of volume management apply… cost-wise it’s a continuation of things moving in the wrong direction for legacy products. VAST customers can acquire self-managed all-flash massively-scalable infrastructure for 5 years with what AWS is charging for just 2 months. Organizations espouse the virtues of cloud agility because ‘time is money’… but money is also money, and here the economics are just crazy.”

- WekaIO – Jonathan Martin, president

“WEKA welcomes storage dinosaurs like NetApp endorsing its strategy for providing simple data portability between on-prem and AWS. However, NetApp’s WAFL file system didn’t meet the scale or speed requirements of next-gen workloads on-prem, and it appears to be even slower running on AWS. We believe organizations who are serious about running next-gen AI and cloud workloads on AWS will continue to look for options beyond AWS native offerings like WEKA to support their needs. After all, it is the world’s fastest storage service on AWS.”
