Fungible DPU: Microprocessor Powering Next Gen Data Center Infrastructure

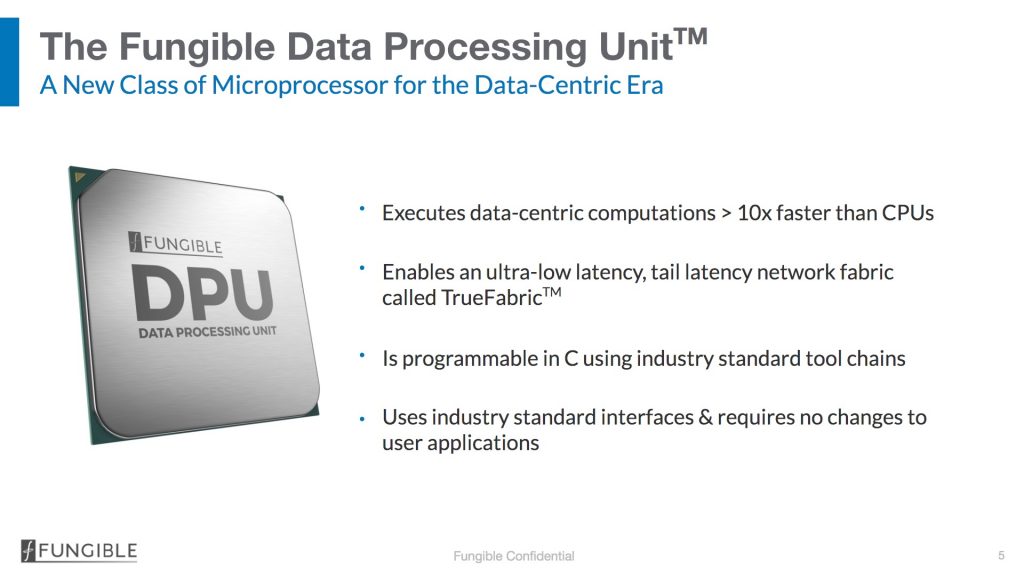

Optimized for data interchange and data-centric computations, it delivers performance, footprint and cost efficiencies for next-gen scale-out networking, storage, security and analytics platforms.

This is a Press Release edited by StorageNewsletter.com on August 18, 2020 at 2:10 pm

Fungible Inc. announced at Hot Chips 2020 its Data Processing Unit (DPU), a transformational technology that will power next-generation, high-performance, efficient and cost-optimized scale-out data centers.

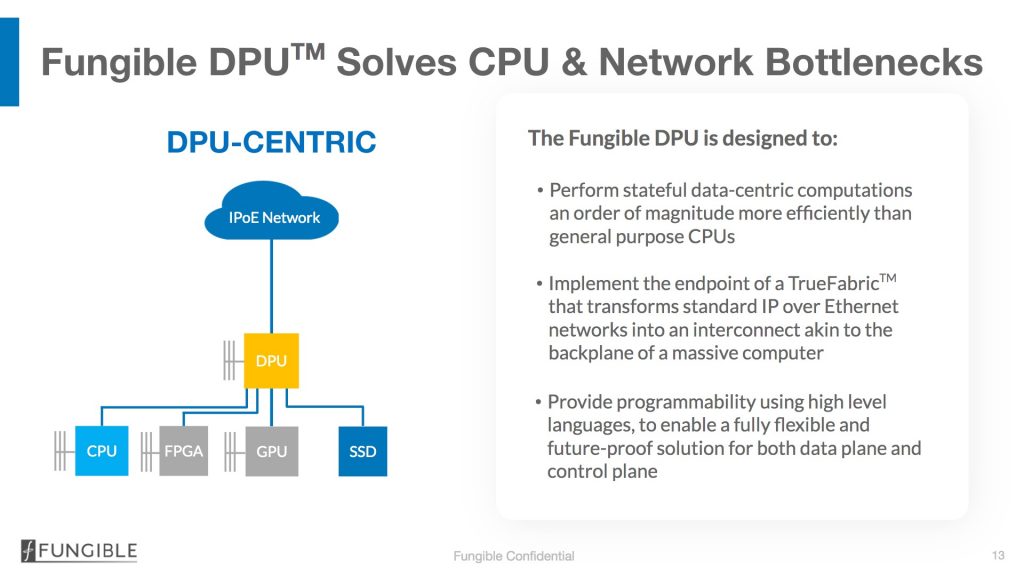

“The Fungible DPU is purpose built to address two of the biggest challenges in scale-out data centers – inefficient data interchange between nodes and inefficient execution of data-centric computations,” said Pradeep Sindhu, CEO and founder. “Data-centric computations are increasingly prevalent in data centers, with important examples being the computations performed in the network, storage, security and virtualization data-paths. Today, these computations are performed inefficiently by existing processor architectures. These inefficiencies cause over provisioning and underutilization of resources, resulting in data centers that are significantly more expensive to build and operate. Eliminating these inefficiencies will also accelerate the proliferation of modern applications, such as AI and analytics.”

“The value proposition of scale-out infrastructure solutions appears straightforward, but in reality it is hard to implement it in a consistent and linearly scalable manner,” said Ashish Nadkarni, group VP of WW Infrastructure, IDC. “Current compute technologies fall short in the vendor’s ability to create an ultra-low latency and ultra-scalable solution for high bandwidth data movement. The industry needs a holistic and end-to-end solution that can disaggregate and compose infrastructure resources at cloud scale.”

The Fungible DPU is the third socket in data centers, complementing the CPU and GPU, and delivering benefits not just in performance per unit power and space, but also strengthening reliability and security.

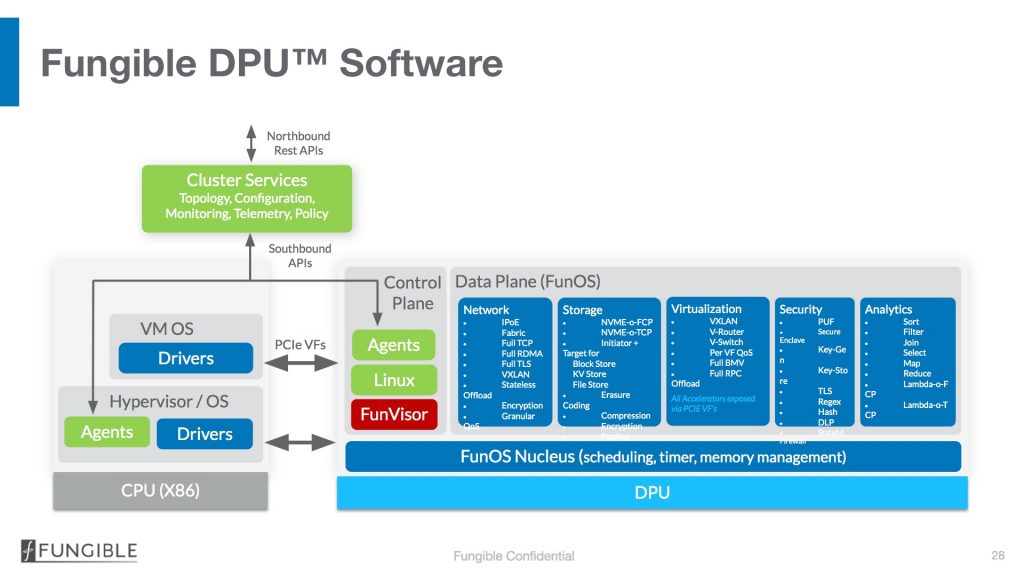

- A programmable data-path engine that executes data-centric computations at high speeds, while providing flexibility comparable to general-purpose CPUs. The engine is programmed in C using industry standard tool chains and is designed to execute many data-path computations concurrently. This innovation enables the Fungible DPU to be customized to address the stringent high-performance requirements visible today, while being future-proof against demanding new requirements that will surely appear over the next few years.

- A new network engine that implements the endpoint of a high-performance TrueFabric that provides deterministic low latency, cross-section bandwidth, congestion and error control, and security at any scale (from 100s to 100,000s of nodes). The TrueFabric protocol is standards compliant and interoperable with TCP/IP over Ethernet, ensuring that the data center leaf-spine network can be built with standard Ethernet switches and standard electro-optics and fiber infrastructure.

“Business today operates in an always-on world of massive data that needs to be moved, stored and processed efficiently in cloud-native environments,” said Young Sohn, president and chief strategy officer, Samsung Electronics Co. Ltd., and chairman of the board, Harman International. “Yet, bottlenecks in disaggregated infrastructure force users to trade off compression and encryption with storage performance. To enable an architecture in which compute resources for microservices and container architecture can be easily scaled up or down as needed, Fungible has created a resilient fabric that securely connects compute and storage. The Fungible DPU, which is optimized for data-centric compute, enables line rate storage and security data services, unleashing the full capabilities and blazing fast performance of NVMe SSDs over the network.”

- F1 DPU – an 800Gb/s processor designed for high performance storage, analytics and security platforms.

- S1 DPU – a 200Gb/s processor for host-side use cases including bare metal virtualization, storage initiator, NFVi/VNF applications and distributed node security.

Comments

Fungible has finally unveiled its data center chip, which had been awaited for several months.

Based in Santa Clara, CA, and founded in 2015 by Pradeep Sindhu, founder of Juniper Networks, and Bertrand Serlet, the firm has raised more than $300 million in 6 rounds. The founders have strong track records, and we were surprised to see Sindhu still listed on the Juniper leadership page as founder and chief scientist. From a recent conversation with him, it also seems that his motivation to address new data center challenges is intact, even though he has nothing more to prove.

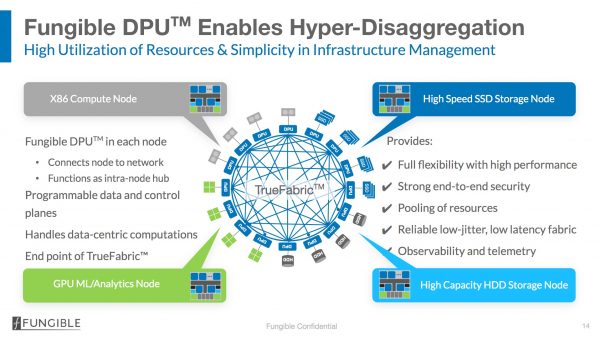

Data centers are essentially built on pools of independent, interconnected resources, some of which are tightly coupled because they are installed within the same systems. Their power therefore comes from the logical association of all these elements in a "divide and conquer" model, and the connectivity between compute and storage elements is key to reaching a new level of data center efficiency.

This announcement is the first from Fungible, which plans to shake up the industry with a few more. It introduces a new category of processing unit, the DPU (Data Processing Unit), which establishes a reliable, fast and secure network with comprehensive local data-oriented computing capabilities.

In recent times, memory and CPU have advanced faster than storage. Flash and some connectivity efforts have tried to close the performance gap. Large farms of HDDs are pretty good for bandwidth, but latency is better with SSDs. The challenge was on the connectivity side, so NVMe brings new capabilities and hopes, even more so with NVMe-oF over fast TCP, FC or IB networks. Overall, the idea is to provide shared, scalable storage at a performance level very close to DAS, which narrows the gap with CPU and memory. DDR5 specifications were ratified in 2020 and promise to double bandwidth; PCIe Gen 4 does the same over Gen 3, and the gain is even greater with Gen 5. Persistent memory approaches like Intel Optane also contribute to this new all-flash data center built for upcoming IT and workload challenges.

Fungible predicts that data centers built on current designs will hit a wall, especially on scalability as compute and/or storage pools multiply, but also on security and agility, i.e. the ability of the data center to adapt dynamically to workloads and changes.

We live in a world where CPU-centric designs are dominant. The GPU has brought a new model, especially with GPUDirect Storage, splitting the world into different groups: ASIC -> FPGA -> CPU and GPU. New intensive workloads in large data centers require dedicated processing units at different places in the topology to satisfy SLAs, scalability and performance without sacrificing security. That means a disaggregated model is a must, and that consolidated, aggregated, converged and even hyper-converged approaches are not suited to such large data centers. Obviously, only naive people could believe that a CPU-centric approach fits all needs; reality shows the opposite.

The any-to-any promise is still a goal, with clear urgency as data floods in at a rapid pace. Fungible TrueFabric, the network engine, supports Ethernet and TCP/IP and targets very low latency, high bandwidth and optimized throughput from 100s to 100,000s of nodes. The company announced two DPUs, S1 and F1, at 200Gb/s and 800Gb/s respectively. Functions covered by the DPU are network-related services plus virtualization, erasure coding, compression, encryption, pooling, storage networking, security and analytics.

Adopting such a new approach requires an SDK, drivers and libraries to couple the DPU to infrastructure services. The fundamental characteristic is to be application-independent and non-intrusive, requiring no changes to applications. Even if the idea confirms a need, the ecosystem of DPU-enabled products will be key and will serve as an adoption metric.

Nebulon recently, computational storage players such as NGD Systems and ScaleFlux, and now Fungible all confirm this new technology direction: offloading central CPUs with a dedicated card. PCIe also seems to be the preferred integration method and location, and PCIe Gen 4 and even Gen 5 really help here. The data-path engine offloads data-centric computations, freeing CPU cycles for application-related instructions.

We also anticipate the availability of a storage product to show the immediate value of the DPU. The Fungible initiative illustrates once again the data center direction towards a disaggregated architecture with a clear distinction between the compute and storage layers. The result can be viewed as a massive logical farm, including pure compute but also storage, connected by a super-fast, reliable network named TrueFabric.

Read also:

Introducing Performance Efficiency Percentage, New Storage Performance

By Jay Menon, chief scientist at Fungible, IBM Fellow Emeritus

May 1, 2020 | In Brief
