Qumulo Shift: Free Service to Move File Data to AWS S3

And transforms it into objects in an open format.

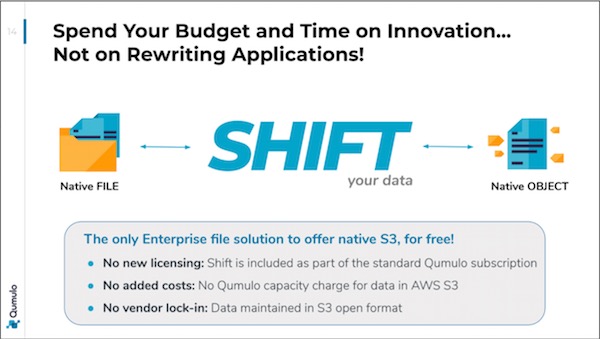

This is a Press Release edited by StorageNewsletter.com on June 25, 2020 at 9:55 am

Qumulo, Inc. launches Shift for Amazon Web Services (AWS) S3, a native cloud service that enables organizations to move file data from any Qumulo on-prem or public cloud cluster into Amazon Simple Storage Service (Amazon S3) and transform that data to object in an open and non-proprietary format.

With Shift, the firm’s file customers can now leverage the full ecosystem of cloud-native applications and services attached to Amazon S3. Shift for AWS S3 is included for free with the Qumulo file system. The company also announced that its cloud software has achieved the Amazon Well-Architected designation, enabling organizations to run Qumulo’s validated architecture on AWS.

Migrate File Data to Cloud with Freedom

To date, enterprises have been unable to easily move their critical file data to the cloud, stranding their data from the applications and services they need to use. Complicating cloud adoption even further, enterprises have been forced to completely re-architect their application workflows in order to utilize data in the cloud. Traditional legacy file systems are limited in their ability to help customers leverage the cloud, causing expensive and time-consuming application refactoring and forcing customers into proprietary data formats. With a few clicks, organizations can now move their file data to Amazon S3, providing absolute data freedom.

“For Shell, Qumulo is a key component for the migration of subsurface applications and related data from on-premise to a cloud-based set-up,” said Johan Krebbers, GM digital and emerging technologies, and VP innovation, Shell. “Using Qumulo, we have been able to accelerate the processing of large file data sets with their highly performant NFS and SMB support.”

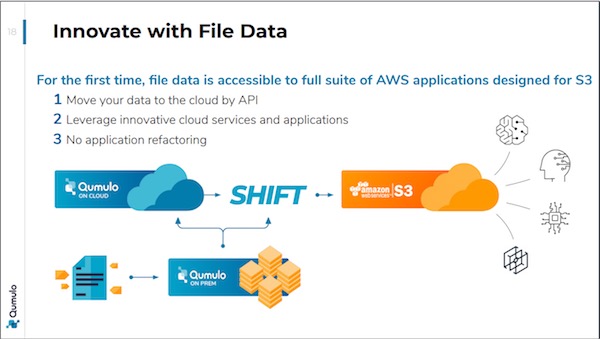

With Shift for AWS S3, enterprise organizations can now take advantage of their valuable and growing file data and create faster business outcomes leveraging the cloud. Users can migrate and store data from thousands of sources to Amazon S3, making it easier for that data to natively interact with AWS services such as Amazon SageMaker, AWS IoT Greengrass, and more.

“Customers are already using Qumulo to drive more than one trillion data transactions weekly and have asked to migrate file data quickly and still leverage applications to utilize the innovation they are driving in the public cloud,” said Barry Russell, SVP and GM of cloud, Qumulo. “With Shift for AWS S3, customers can now move data fast and no longer worry about being stuck in legacy proprietary file data formats that slow them down and stall digital transformation. Leveraging our work with AWS, we are now able to integrate with Amazon S3 natively and enable high-value data workloads to use cloud applications and services at any scale in Qumulo or S3.”

Cloud-Native File Services

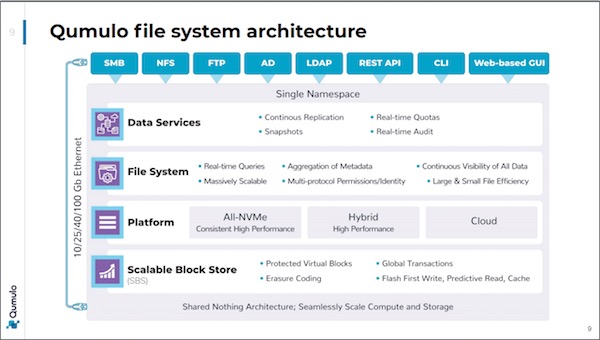

The Qumulo file system was designed to run as a cloud-native service on the public cloud. This architecture provides users with complete enterprise file system capabilities coupled with the scale, flexibility and power of the cloud. This cloud-native approach enables users with large file data projects to take advantage of workloads requiring thousands of compute nodes and enables enterprises to move large datasets that would otherwise exceed the scale capabilities of other file system options.

Build Applications in Cloud with File Data

Leveraging the firm’s API and cloud-native architecture, developers can now quickly connect applications to file data and integrate with native cloud services, giving them control over how applications and services process file data at any scale.

“As a national leader in the NIPT (Non-Invasive Prenatal Testing) and Hereditary Cancer testing market, Progenity has significant regulatory retention requirements. Amazon S3 with Bucket Lifecycle Policies is our top choice for a cost-effective, secure and compliant environment,” said David Meiser, solutions architect, Progenity, Inc. “Qumulo is the best choice for managing our data as it enables us to innovate with cloud-native services and applications while also providing the ability to securely store our data in a non-proprietary format while in S3.”

Qumulo and Qumulo Shift for AWS S3 enable organizations to use new applications and services as well as migrate existing file-based workloads to the cloud easily. For example, customers in the manufacturing sector use dense image data captured on the factory floor in Qumulo, shift that data to S3 and then leverage Amazon Rekognition to build an application workflow that analyzes errors in the manufacturing process.

“Object stores and data lakes are good at storing large quantities of unstructured file data at rest, but customers need the ability to process massive file data across applications and cloud services to accelerate tangible business results,” said Ben Gitenstein, VP product, Qumulo. “Shift for AWS S3 enables high-performance file data solutions to be used while also providing the ability to move data to S3 for sharing, integration to other services, and cost optimization. Most importantly for customers, Qumulo does not hold their data ‘hostage’ with proprietary data formats. When customers use Shift for AWS S3, the data in S3 is stored as natively accessible objects and buckets.”

“Cloud file data services are a natural evolution of data center technology and an important aspect of the cloud delivery model,” said Shawn Fitzgerald, research director, digital transformation strategies, IDC. “Qumulo’s intelligent, cloud-native architecture is a great example of how an organization’s shift to the cloud simplifies and better leverages cloud services and applications.”

Shift for AWS S3 service will be available as a software upgrade, free of charge, to Qumulo customers in July 2020.

Resources:

Qumulo File Software

Qumulo Cloud File Data Services

Comments

Qumulo continues to innovate, illustrating that the battle is pretty hot in file storage, with 4 blitzscalers pushing innovation: Pure Storage, Qumulo, Vast Data and WekaIO. Like Vast Data, Qumulo is also a storage unicorn.

The genesis of Qumulo is on-premise, with a product designed with flash in mind and a shared-nothing model to deliver a highly scalable NAS. It is a perfect example of a pure software approach delivered as an appliance on commodity hardware from Qumulo or its partners such as HPE, with Apollo and GreenLake, and more recently Fujitsu. This new breed of SDS rapidly evolved to embrace the cloud, first with an AWS flavor, then GCP, with Azure expected soon, to offer multi-cloud clusters with 1 or N cluster instances per site. The idea here is to build a connected file storage presence in different parts of the world to enable local processing.

A leader in highly scalable NAS, Qumulo offers rich file storage exposing multiple protocols, and especially S3 thanks to a MinIO gateway embedded within the cluster. This design choice makes the same content accessible via multiple protocols: not only file-based access methods like NFS and SMB but also S3. It means you can upload/write a file locally via NFS and immediately access this file remotely, 1,000 miles away, with a simple S3 GET command. Among top NAS vendors, Vast Data and more recently Dell EMC with PowerScale can also do that. This is a critical aspect as it reduces storage space by avoiding multiple copies, and therefore reduces cost and, of course, data divergence between data areas.

This iteration has been well received by the market and encouraged the team to move quickly beyond this model, with the ability to copy file data from an on-prem, AWS or even GCP Qumulo cluster into AWS S3. This new service, named Shift for AWS S3, does exactly what its name says. It may seem surprising at first, but given the tons of existing AWS applications and services, it allows AWS subscribers to use the data natively and directly. Even if file sharing protocols have been standard for a few decades, with a myriad of applications, AWS services obviously build on one of the most successful services AWS ever made, S3. AWS has granted Qumulo the Amazon Well-Architected designation, which helps give guarantees to users.

We also learned during a recent online session that the feature relies on the existing replication mechanism coupled with the S3 API. AWS designed the S3 multipart upload feature to speed up and facilitate such large transfers. The copy of data sent to AWS S3 is read-only and serves as a source to run AWS applications. The company confirmed that it doesn't address the potential data divergence that could occur between 2 independent processing jobs on the Qumulo cluster and AWS S3. The goal is not to build a reliable multi-site cluster but simply to offer a rapid, easy and cost-effective method to transfer data from any Qumulo cluster to S3 to run AWS services. A clever move again, given the tons of AWS applications available.
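To make the multipart upload point concrete, here is a minimal sketch of how a client could pick a part size for a large file. It is not Qumulo's implementation, which the source does not describe. The function name `plan_multipart`, the 64 MiB default and the constants are assumptions, the latter based on the S3 multipart limits AWS documented around the time of this announcement (5 MiB minimum part, 5 GiB maximum part, 10,000 parts, 5 TiB per object); check the current AWS documentation before relying on them.

```python
from typing import Tuple

MIB = 1024 ** 2
GIB = 1024 ** 3
TIB = 1024 ** 4

# Assumed limits, as documented by AWS around the time of the article (2020).
MIN_PART_SIZE = 5 * MIB      # applies to every part except the last one
MAX_PART_SIZE = 5 * GIB
MAX_PARTS = 10_000
MAX_OBJECT_SIZE = 5 * TIB


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division, avoiding float rounding on large sizes."""
    return (a + b - 1) // b


def plan_multipart(file_size: int, preferred_part_size: int = 64 * MIB) -> Tuple[int, int]:
    """Return (part_size, part_count) for uploading file_size bytes.

    Hypothetical helper: it only does the arithmetic and performs no upload.
    """
    if file_size <= 0:
        raise ValueError("file_size must be positive")
    if file_size > MAX_OBJECT_SIZE:
        raise ValueError("file exceeds the assumed maximum size of one object")
    # Smallest part size that keeps the upload within MAX_PARTS parts.
    needed_for_part_limit = ceil_div(file_size, MAX_PARTS)
    part_size = max(preferred_part_size, needed_for_part_limit, MIN_PART_SIZE)
    part_size = min(part_size, MAX_PART_SIZE)
    return part_size, ceil_div(file_size, part_size)
```

With these assumed limits, a 1 GiB file keeps the 64 MiB default and uploads in 16 parts, while a file at the 5 TiB ceiling is pushed to larger parts so that it still fits in 10,000 parts.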

AWS S3 has a maximum object size of 5TB, which caps the size of files Qumulo can transfer. Asked about this, Qumulo answered that, on average, the largest files its customers use are around 3 or 4TB. OK, but what is the answer for the larger files some customers may have? Is there any method to split files into multiple 5TB objects? Probably in the near future. It also confirms that the historical limit Isilon had, 4TB and more recently 16TB, is finally a good fit, for certain use cases of course.
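The splitting question raised above can be illustrated with a purely hypothetical sketch: it computes the byte ranges a file larger than the object limit would be cut into. Nothing in the source says Shift works this way. The function name `split_into_objects`, the `.partNNNN` key suffix and the default limit (the 5TB figure cited above, taken here as 5 TiB) are all assumptions made for illustration.

```python
from typing import List, Tuple

TIB = 1024 ** 4
MAX_OBJECT_SIZE = 5 * TIB  # assumed: the per-object limit cited in the article


def split_into_objects(key: str, file_size: int,
                       max_object_size: int = MAX_OBJECT_SIZE
                       ) -> List[Tuple[str, int, int]]:
    """Return (object_key, start_offset, length) for each piece of a file.

    Hypothetical scheme: a file that fits in one object keeps its key;
    a larger file is cut into consecutive ranges with a numbered suffix.
    """
    if file_size < 0:
        raise ValueError("file_size must not be negative")
    if max_object_size <= 0:
        raise ValueError("max_object_size must be positive")
    if file_size <= max_object_size:
        return [(key, 0, file_size)]
    pieces = []
    offset = 0
    index = 0
    while offset < file_size:
        length = min(max_object_size, file_size - offset)
        pieces.append((f"{key}.part{index:04d}", offset, length))
        offset += length
        index += 1
    return pieces
```

A 4 TiB file stays a single object under this scheme, while a 12 TiB file becomes three objects of 5, 5 and 2 TiB. The catch is that any application reading the data would have to know the naming convention to reassemble the file, which works against the "natively accessible" promise.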

Of course there is a metadata operation in this move, but the main idea is to offer access, through a new protocol, to content natively stored in AWS S3. There is no transformation, as files are just a series of bytes interpreted by the application, which can apply some structure or format to them. Internally, of course, content is organized and stored by the service that exposes it: file systems are hierarchical by nature, even if some implementations do things differently, such as using a key-value store for internal organization, whereas object storage is essentially a flat namespace by design. Here, though, the focus is on data presentation and residence, to leverage AWS S3 and the associated AWS applications.
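The hierarchical versus flat distinction can be sketched in a few lines: a file path under an exported directory becomes a single object key, where the slashes are just characters in a flat name. This is an illustration only, not Qumulo's actual mapping; the function name `path_to_object_key`, the example paths and the `key_prefix` parameter are hypothetical.

```python
from pathlib import PurePosixPath


def path_to_object_key(export_root: str, file_path: str, key_prefix: str = "") -> str:
    """Map a file path under export_root to a flat object key.

    Hypothetical helper: the bytes of the file are untouched, only the
    name changes from a position in a directory tree to a single string.
    """
    root = PurePosixPath(export_root)
    path = PurePosixPath(file_path)
    # relative_to raises ValueError if file_path is not under export_root.
    relative = path.relative_to(root)
    if relative == PurePosixPath("."):
        raise ValueError("file_path must point below export_root")
    key = relative.as_posix()
    if key_prefix:
        key = key_prefix.strip("/") + "/" + key
    return key


if __name__ == "__main__":
    print(path_to_object_key("/projects", "/projects/factory/line1/img001.png",
                             key_prefix="qumulo-shift/"))
```

Run as a script, this prints `qumulo-shift/factory/line1/img001.png`. The object store sees that string as one opaque key; tools that list by prefix and delimiter give the appearance of folders without any real directory structure behind it.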

Shift is free and available to any customer under maintenance through a simple software upgrade starting in July 2020.

It is an interesting approach, and we'll see how the competition reacts to this announcement.
