Radian Memory Systems Announced ‘Twelve x Twelve’ Open-Channel SSD

Combining up to 12GB of user NVRAM with up to 12TB of flash

This is a Press Release edited by StorageNewsletter.com on August 8, 2016 at 7:26 pm

Radian Memory Systems, Inc. announced a hybrid NVRAM/flash SSD that combines up to 12GB of user NVRAM with up to 12TB of flash, all under host control using either Open-Channel (*) or the Symphonic Cooperative Flash Management (CFM) technology.

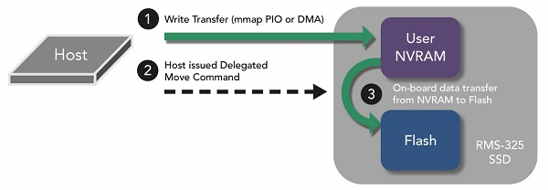

The RMS-325 NVRAM/flash hybrid SSD provides host systems with direct control over its byte-addressable User NVRAM, its high-capacity flash storage, and the transfer of data between the two types of memory. All of the storage and power backup is contained in one compact, standard low-profile card that requires no external cabling or power packs.

- Optimal for efficient tiering of different classes of memory storage
- Unique advantages over NVDIMMs
- Scale NVRAM and flash tiers proportionately as SSDs are added to a system
- Special delegated move capability reduces traffic across the system bus and host overhead
- Simplifies low latency support for new NVMe-over-Fabric, RDMA/RoCE, and NVMe Direct implementations

Applications

The RMS-325 and the company's other products target advanced data center and cloud storage requirements involving virtualization, big data analytics, web services, and data warehousing. These environments are often based on software-defined frameworks, distributed scale-out, and converged infrastructure.

Flash management: Symphonic CFM or Open-Channel mode

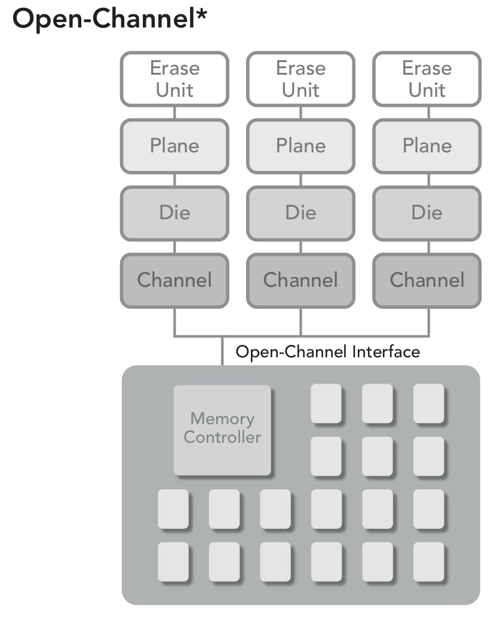

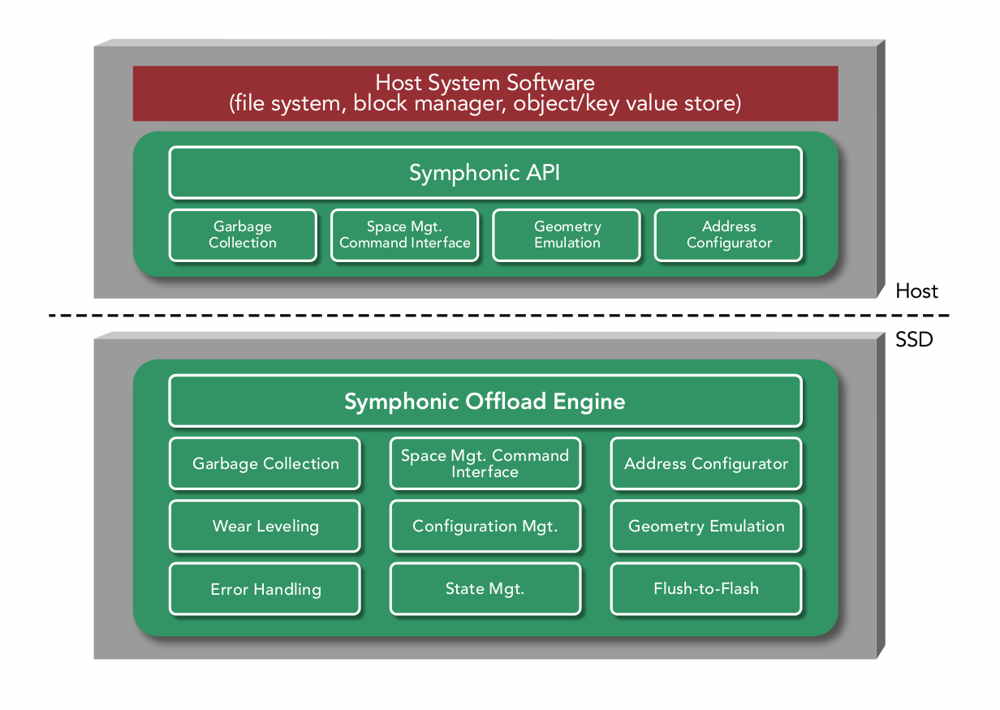

Today's SSDs are encumbered by a legacy abstraction known as the Flash Translation Layer (FTL), which incurs expensive overhead, unpredictable latency spikes, sub-optimal performance, and premature wear of the flash media. As an alternative, the RMS-325 offers either Symphonic Cooperative Flash Management or the Open-Channel mode (Spec. Revision 1.2, April 2016) of flash management, which overcome the limitations of FTLs.

Host controlled memory tiering

Hosts possess more intelligence than SSDs about data: how it should be coalesced and prioritized, and when it should be transferred between different types of memory. The RMS-325 gives host systems control over its up to 12GB of user NVRAM, enabling more deterministic system-level tiering and more efficient utilization of capacity, which translates into overall cost reductions.
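
As a rough illustration of what host-controlled tiering means in practice, the following minimal sketch models a host that stages small writes in NVRAM, orders them by priority, and writes them to flash as one coalesced segment once a threshold is reached. The `StagingTier` class, its fields, and the sizes used are hypothetical and chosen only for illustration; nothing here is an RMS-325, Symphonic, or Open-Channel API.

```python
from dataclasses import dataclass, field


@dataclass
class StagingTier:
    """Toy model of a host-managed NVRAM staging area in front of flash.

    Hypothetical names and sizes; this is not a Radian API.
    """
    nvram_capacity: int   # bytes of NVRAM the host may stage into
    flush_threshold: int  # flush once this many bytes are staged
    staged: list = field(default_factory=list)          # (priority, payload) records
    flash_segments: list = field(default_factory=list)  # coalesced flash writes

    def staged_bytes(self) -> int:
        return sum(len(payload) for _, payload in self.staged)

    def write(self, payload: bytes, priority: int = 0) -> None:
        """Stage a small write in NVRAM; flush when the threshold is reached."""
        if len(payload) > self.nvram_capacity:
            raise ValueError("payload larger than NVRAM staging area")
        if self.staged_bytes() + len(payload) > self.nvram_capacity:
            self.flush()  # make room before staging the new record
        self.staged.append((priority, payload))
        if self.staged_bytes() >= self.flush_threshold:
            self.flush()

    def flush(self) -> None:
        """Coalesce staged records into one flash segment, highest priority first."""
        if not self.staged:
            return
        # sorted() is stable, so equal-priority records keep arrival order.
        ordered = sorted(self.staged, key=lambda rec: -rec[0])
        self.flash_segments.append(b"".join(p for _, p in ordered))
        self.staged.clear()


tier = StagingTier(nvram_capacity=64, flush_threshold=16)
for record in (b"aaaa", b"bbbb", b"cccc"):
    tier.write(record)             # 12 bytes staged, below the threshold
tier.write(b"URGENT", priority=1)  # 18 bytes staged, triggers a flush
print(tier.flash_segments)         # [b'URGENTaaaabbbbcccc']
```

The point of the sketch is only that the policy (when to flush, in what order, in what segment size) lives in host software rather than inside the drive.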

Advantages over NVDIMM

When located on the flash SSD, PCIe NVRAM has several advantages over many NVDIMM implementations:

- Cost Savings

  Many high-end flash SSDs already have capacitor-backed NV-DRAM used for internal mapping tables and write caching. Incrementally expanding this capacity with larger DRAM and capacitors generally costs less in materials than adding an entire new NVDIMM and its remotely cabled capacitor pack. But that's only the obvious cost saving.

- Flash for free (almost)

  One of the most expensive parts of an NVDIMM BoM is the NAND flash required for backup. Because of the high-bandwidth transfer requirement to the flash (due to limited capacitor power), designers ironically often find that more expensive SLC NAND is the cheapest alternative for an NVDIMM. The reason is that meeting the bandwidth requirement with slower MLC flash takes far more dies, and even the smallest MLC dies have more capacity than the application requires. For example, an 8GB NVDIMM may require 16GB of SLC for backup but 128GB of MLC, because $/GB is a secondary cost factor to bandwidth/GB.

  By contrast, flash SSDs typically have dozens or even hundreds of dies, with terabytes of capacity. Reserving a very small portion of capacity across dies makes it possible to use cheaper MLC/TLC without the huge overprovisioning required when this memory is used with an NVDIMM. It also reduces the capacitor backup requirement, as the parallel transfer rate across so many dies is that much faster.

- Performance

  - DMA

    Many OS and host software applications are unequipped to handle persistent storage on the memory bus. The answer for NVDIMM persistence is often to create a RAM disk, requiring a path through the kernel's block layer. But NVDIMMs do not possess DMA engines, and without DMA engines the additional overhead of the block layer can have a significant impact on performance and CPU utilization.

  - Overhead

    One of the most common applications for NVRAM is write-ahead logging/caching. But using an NVDIMM requires an additional data path, copying, and CPU overhead to transfer the data from the NVDIMM to an SSD. The RMS-325 avoids this because it uniquely combines User NVRAM, flash, and a DMA engine with a Delegated Move function, all on the same device.

- No cabling

  Unlike an NVDIMM, no cabling to a remote capacitor in a tight chassis is required.

- Scalability

  NVRAM capacity requirements are often proportional to the backend flash capacities that they support. It's challenging to incrementally add new NVDIMMs every time another SSD is added to a system or replaced by a larger SSD, and flash densities have been increasing at an unprecedented rate. This becomes especially impractical when trying to find room and cabling for additional NVDIMM capacitor packs inside an existing chassis.
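
The "Flash for free" argument above comes down to simple arithmetic: the number of backup dies is set by whichever is larger, the dies needed for bandwidth or the dies needed for capacity. The sketch below works through it. The hold-up time, per-die write bandwidths, and per-die capacities are hypothetical values picked only so that the result reproduces the 16GB SLC versus 128GB MLC example in the text; they are not figures from Radian or from any NAND datasheet, and the 256-die SSD is likewise an assumption.

```python
import math


def dies_for_backup(dram_gb, holdup_s, die_write_mb_s, die_capacity_gb):
    """Return (die_count, total_flash_gb) needed to back up dram_gb of DRAM."""
    required_mb_s = dram_gb * 1000 / holdup_s  # bandwidth needed to finish in time
    dies_for_bandwidth = math.ceil(required_mb_s / die_write_mb_s)
    dies_for_capacity = math.ceil(dram_gb / die_capacity_gb)
    dies = max(dies_for_bandwidth, dies_for_capacity)
    return dies, dies * die_capacity_gb


DRAM_GB = 8    # NVDIMM size from the example in the text
HOLDUP_S = 10  # hypothetical capacitor hold-up time

# Hypothetical per-die figures: SLC is faster but smaller, MLC slower but larger.
slc = dies_for_backup(DRAM_GB, HOLDUP_S, die_write_mb_s=100, die_capacity_gb=2)
mlc = dies_for_backup(DRAM_GB, HOLDUP_S, die_write_mb_s=25, die_capacity_gb=4)
print(slc)  # (8, 16)   -> 8 SLC dies, 16GB of flash
print(mlc)  # (32, 128) -> 32 MLC dies, 128GB of flash

# On an SSD that already has many dies, only a thin slice of each is reserved.
SSD_DIES = 256  # hypothetical die count
backup_time_s = DRAM_GB * 1000 / (SSD_DIES * 25)
reserved_per_die_gb = DRAM_GB / SSD_DIES
print(backup_time_s, reserved_per_die_gb)  # 1.25 0.03125
```

Under these assumed numbers, bandwidth rather than capacity sets the die count in both NVDIMM cases, which is why the MLC option ends up heavily overprovisioned, while the SSD case finishes faster using a small reservation per die.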

NVMe-over-Fabric, RDMA/RoCE, and NVMe Direct

Creating new fabrics over PCIe and utilizing existing network device drivers can each be simplified and made more performant by having low-latency, deterministic, byte-addressable memory accessible on the PCIe endpoints. The RMS-325 architecture is suited to this application because it combines this byte-addressable NVRAM on the same device as the high-capacity flash storage, and provides an NVMe DMA engine and the Delegated Move function.

RMS-325 early access units will be available to select customers in October with production availability in Q1.

(*) Open-Channel mode Spec. Revision 1.2, April 2016
